SDP VPN

Although organizations realize the need to upgrade their approach to user access control, the technologies they have already deployed are holding back the introduction of the Software Defined Perimeter (SDP). A recent Cloud Security Alliance (CSA) report, the “State of Software-Defined Perimeter,” states that existing in-place security technologies are the main barrier to adopting SDP. The reluctance to make the leap is understandable: VPNs have been a cornerstone of secure networking for over two decades.

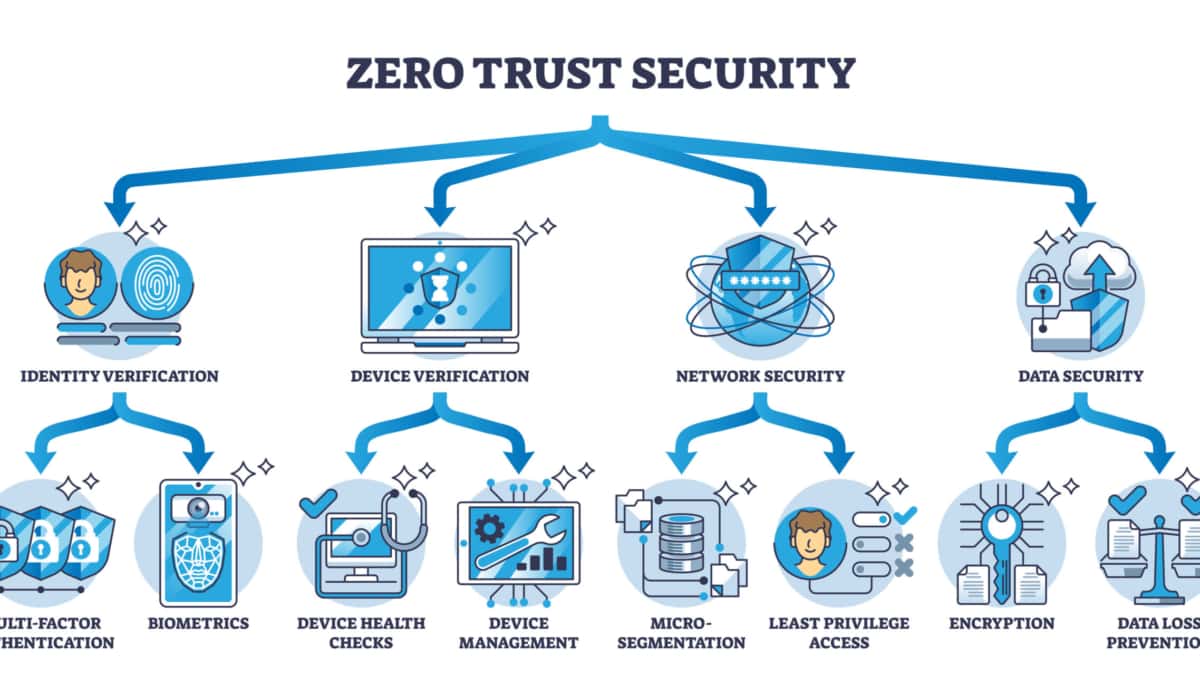

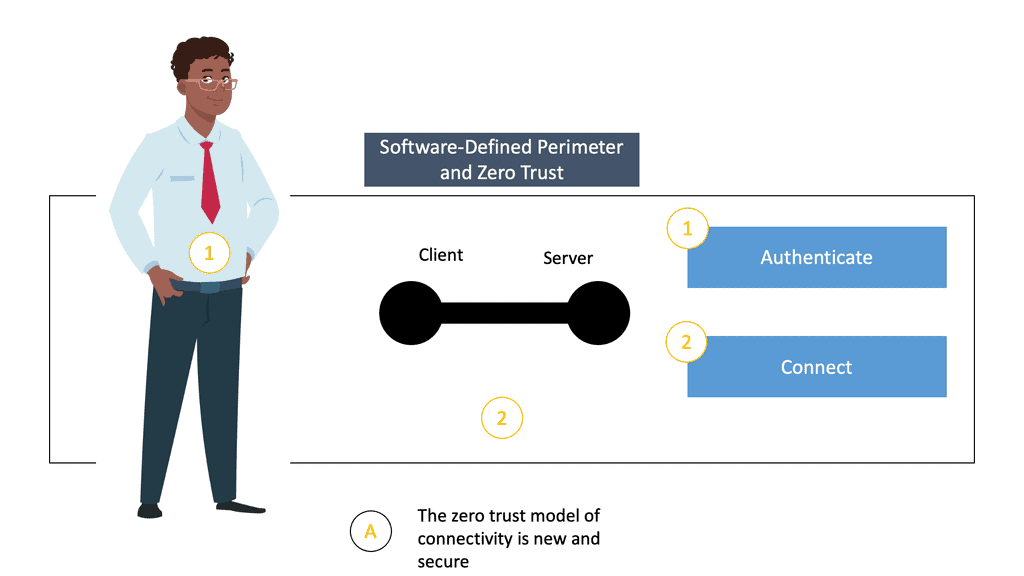

VPNs do what they promise: they provide secure remote access. However, they have not evolved to secure our changing environment appropriately. The digital environment has changed considerably in recent years, and the push toward the cloud, BYOD, and remote work puts pressure on existing VPN architectures. As the environment evolves, security tools and architectures must evolve with it, toward SDP VPN and other zero-trust capabilities such as Remote Browser Isolation.

There is broad agreement on the benefits of the zero-trust principles that a software-defined perimeter provides over traditional VPNs. But the fact that organizations want safer, less disruptive, and less costly deployment models cannot be ignored. VPNs are not a solution that works for every situation. Yet it is not enough to offer solutions that require ripping out the existing architecture completely, or that apply a software-defined perimeter only to certain use cases. Overcoming the barrier to adopting a software-defined perimeter means finding a middle ground.


SDP VPN: Safe-T provides the middle ground

Safe-T is aware of this need for a middle ground. In addition to its standard software-defined perimeter offering, Safe-T provides this middle ground to help customers on the “journey from VPN to SDP,” giving them a safe path to SDP VPN.

Organizations no longer need to rip and replace the VPN. A software-defined perimeter and a VPN (SDP VPN) can work together, yielding a more robust security infrastructure. Network security that can bounce you between IP address locations makes it very difficult for hackers to break in. And if you already have a VPN solution you are comfortable with, you can keep using it and pair it with Safe-T’s software-defined perimeter approach. This middle ground improves your security posture while keeping the advantages of your existing VPN.

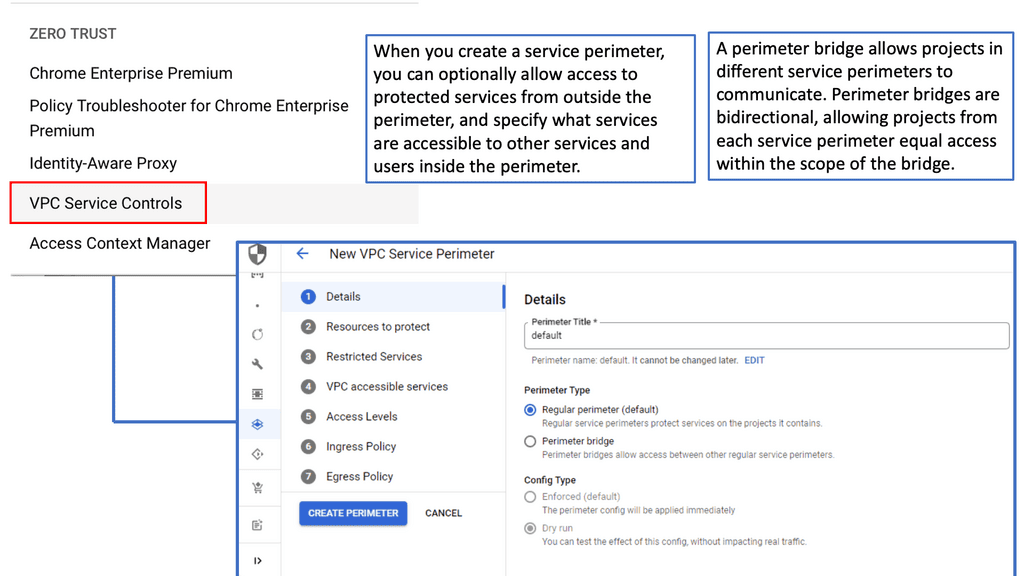

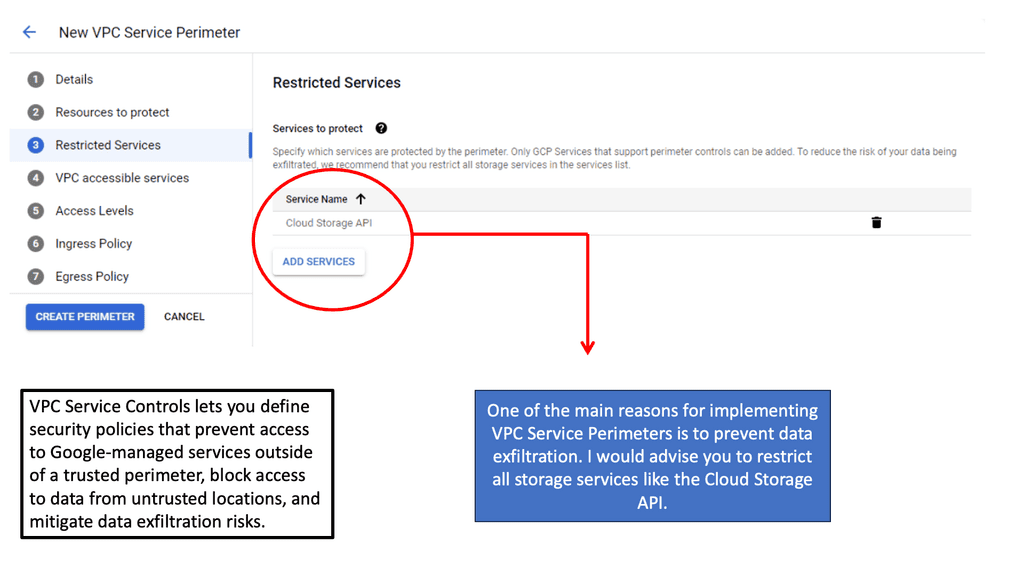

Safe-T recently released a new SDP solution called ZoneZero that enhances VPN security by adding SDP capabilities. With these capabilities, applications and services are exposed and accessible only after trust has been assessed against policies covering the authorized user, location, and application. Access is also granted to the specific application or service rather than to the network, as it would be with a VPN.
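To make the difference between network-level and application-level access concrete, here is a minimal sketch of default-deny policy evaluation over user, location, and application. It is not ZoneZero’s implementation; the `AccessRequest` and `Policy` types, the `evaluate` function, and the example users, locations, and applications are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class AccessRequest:
    user: str
    location: str
    application: str


@dataclass(frozen=True)
class Policy:
    # Hypothetical policy shape: who may reach one application, and from where.
    application: str
    allowed_users: frozenset
    allowed_locations: frozenset


def evaluate(request: AccessRequest, policies: List[Policy]) -> Optional[str]:
    """Return the single application to grant, or None (deny by default)."""
    for policy in policies:
        if (policy.application == request.application
                and request.user in policy.allowed_users
                and request.location in policy.allowed_locations):
            return policy.application
    return None


POLICIES = [
    Policy("payroll", frozenset({"alice"}), frozenset({"HQ", "home-office"})),
    Policy("wiki", frozenset({"alice", "bob"}), frozenset({"HQ", "home-office", "travel"})),
]

print(evaluate(AccessRequest("alice", "home-office", "payroll"), POLICIES))  # payroll
print(evaluate(AccessRequest("bob", "home-office", "payroll"), POLICIES))    # None
```

The result is either one named application or nothing; there is no step where the user joins a network, which is the contrast with VPN access described above.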

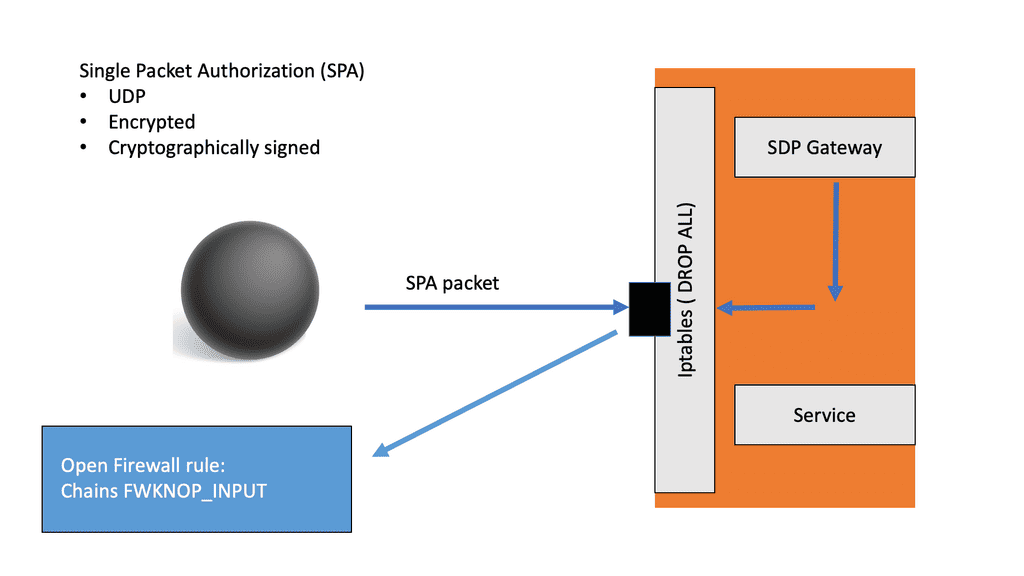

Deploying SDP and single packet authorization on the existing VPN offers a customized and scalable zero-trust solution. It provides all the benefits of SDP while lowering the risks involved in adopting the new technology. Currently, Safe-T’s ZoneZero is the only SDP VPN solution in the market, focusing on enhancing VPN security by adding zero trust capabilities rather than replacing it.
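Single packet authorization (SPA) keeps the service port closed until a client sends one cryptographically authenticated packet. The sketch below shows the general idea using an HMAC-SHA256 tag, a timestamp window, and a nonce for replay protection. The packet layout, key handling, and names such as `build_spa_packet` and `SpaVerifier` are assumptions for illustration, not Safe-T’s wire format.

```python
import hashlib
import hmac
import os
import struct
import time

SHARED_KEY = b"example-key-provisioned-out-of-band"  # hypothetical; per-client in practice
MAX_SKEW_SECONDS = 30
TAG_LEN = 32  # HMAC-SHA256 output size


def build_spa_packet(user_id: bytes, key: bytes, now=None) -> bytes:
    """One packet: 8-byte timestamp | 16-byte random nonce | user id | HMAC tag."""
    ts = int(time.time() if now is None else now)
    body = struct.pack("!Q", ts) + os.urandom(16) + user_id
    tag = hmac.new(key, body, hashlib.sha256).digest()
    return body + tag


class SpaVerifier:
    def __init__(self, key: bytes):
        self.key = key
        self.seen_nonces = set()  # replay protection (unbounded here for brevity)

    def verify(self, packet: bytes, now=None) -> bool:
        if len(packet) < 8 + 16 + TAG_LEN:
            return False
        body, tag = packet[:-TAG_LEN], packet[-TAG_LEN:]
        expected = hmac.new(self.key, body, hashlib.sha256).digest()
        if not hmac.compare_digest(tag, expected):
            return False  # drop silently: unauthenticated senders get no response
        (ts,) = struct.unpack("!Q", body[:8])
        current = time.time() if now is None else now
        if abs(current - ts) > MAX_SKEW_SECONDS:
            return False  # stale or future-dated packet
        nonce = body[8:24]
        if nonce in self.seen_nonces:
            return False  # replayed packet
        self.seen_nonces.add(nonce)
        return True  # a real gateway would now open the port briefly for this source


verifier = SpaVerifier(SHARED_KEY)
packet = build_spa_packet(b"alice", SHARED_KEY)
print(verifier.verify(packet))  # True
print(verifier.verify(packet))  # False (replay)
```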

The challenges of just using a traditional VPN

VPN Overview

While VPNs have stood the test of time, we now know that the proper security architecture is based on zero-trust access. VPNs operating by themselves cannot offer optimum security. Let’s examine some of their common shortfalls.

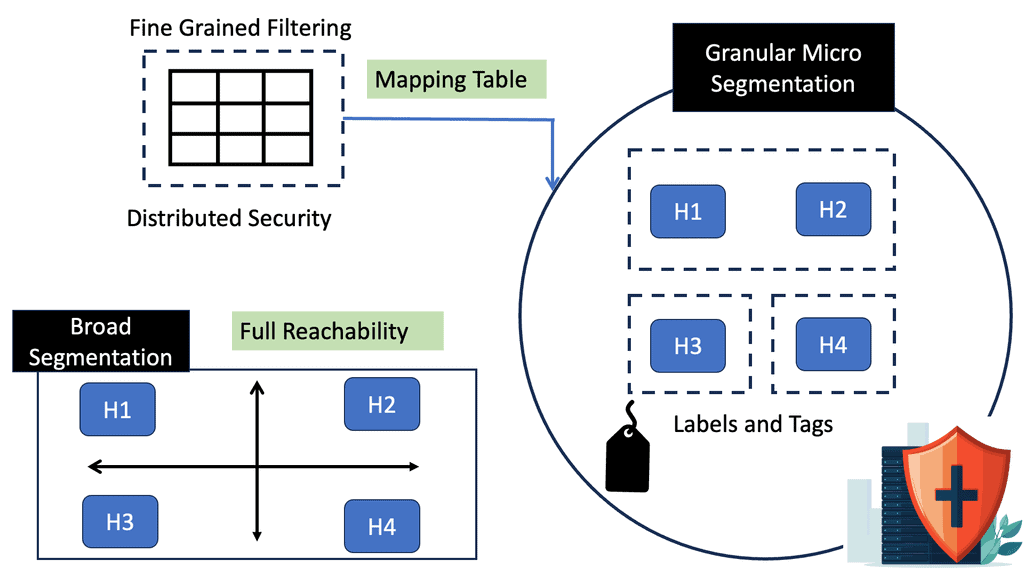

VPNs fall short because they cannot grant access at a granular, case-by-case level. This is a significant problem that SDP addresses. In the traditional security setup, a user had to be connected to a network to access an application. For users not on the network, such as remote workers, we had to create a virtual network that placed the user on the same network as the application.

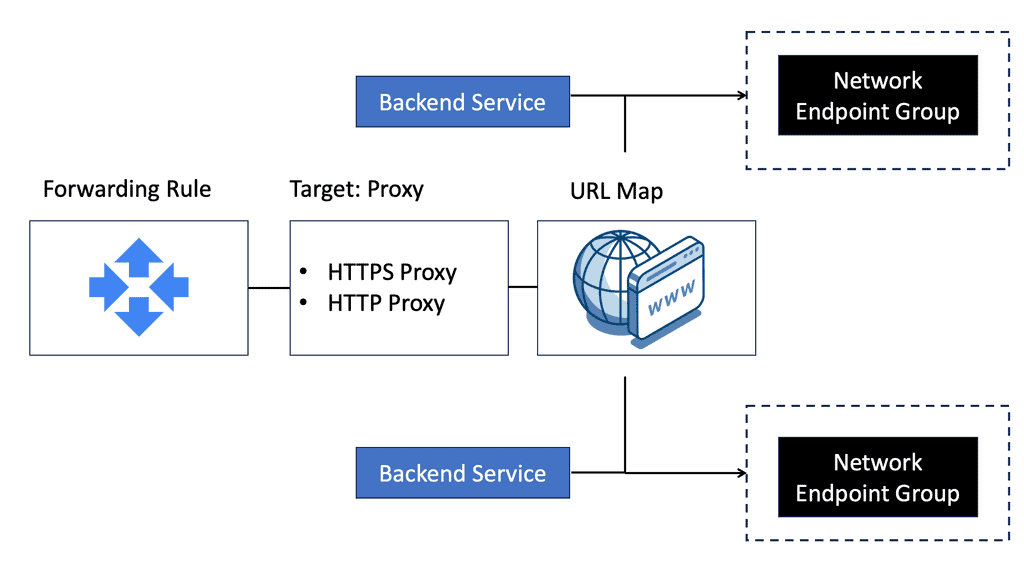

To enable external access, organizations started to implement remote access solutions (RAS) to restrict user access and create secure connectivity. An inbound port is exposed to the public internet to provide application access. However, this open port is visible to anyone online, not just remote workers.

From a security standpoint, granting network connectivity just to reach an application brings many challenges. We then moved to an initial layer of zero trust, isolating different layers of security within the network. This made it possible to keep applications that were not meant to be seen “dark.” But it also led to a sprawl of network and security devices.

For example, you could use inspection path control with a hardware stack. This limited users to whatever a blacklist did not block. Security policies provided broad, overly permissive access, and the attack surface was too wide. The VPN also relies on static configurations that carry no context. For example, a configuration may state that a particular source can reach a particular destination using a given port number and policy.

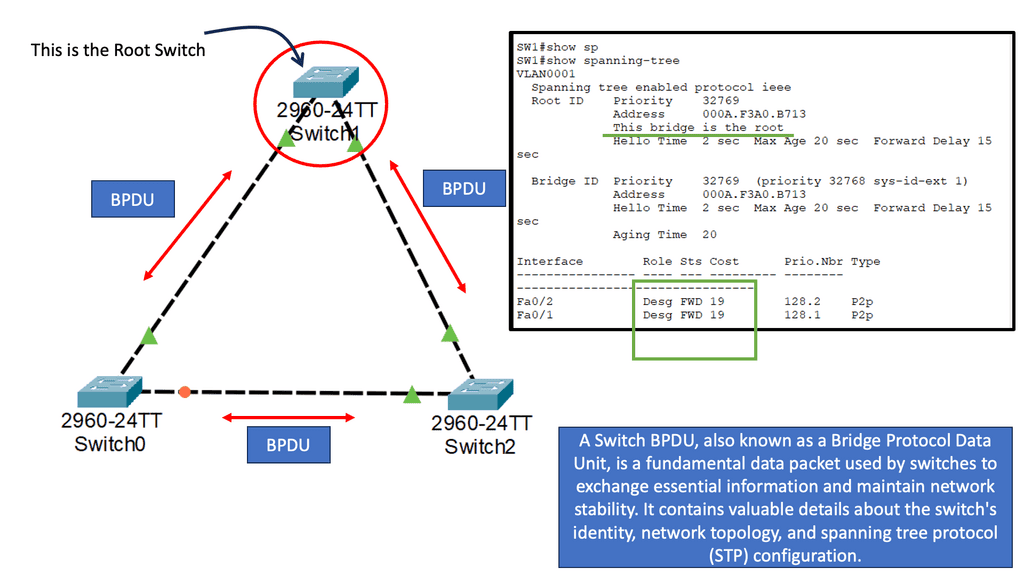

Such a configuration does not take context into account. There are just ports and IP addresses, and the configuration offers no visibility into the network to see who connects to the device, with what, when, and how.
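A static rule of the kind just described can be sketched as a source/destination/port tuple. In this hypothetical example (the addresses, the port, and the `static_decision` helper are made up for illustration), the decision function has no parameter for the user or the device at all, so every client in the VPN address pool gets the same answer.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class StaticRule:
    source: str       # CIDR block, e.g. the VPN client address pool
    destination: str  # single destination IP
    port: int
    action: str       # "allow" or "deny"


RULES = [StaticRule("10.8.0.0/24", "192.168.10.20", 443, "allow")]


def static_decision(src_ip: str, dst_ip: str, port: int, rules=RULES) -> str:
    """Match only on addresses and port; identity and device state are invisible."""
    for rule in rules:
        if (ipaddress.ip_address(src_ip) in ipaddress.ip_network(rule.source)
                and dst_ip == rule.destination
                and port == rule.port):
            return rule.action
    return "deny"


# Any user who lands anywhere in 10.8.0.0/24 is treated identically.
print(static_decision("10.8.0.15", "192.168.10.20", 443))  # allow
print(static_decision("10.8.0.99", "192.168.10.20", 443))  # allow
```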

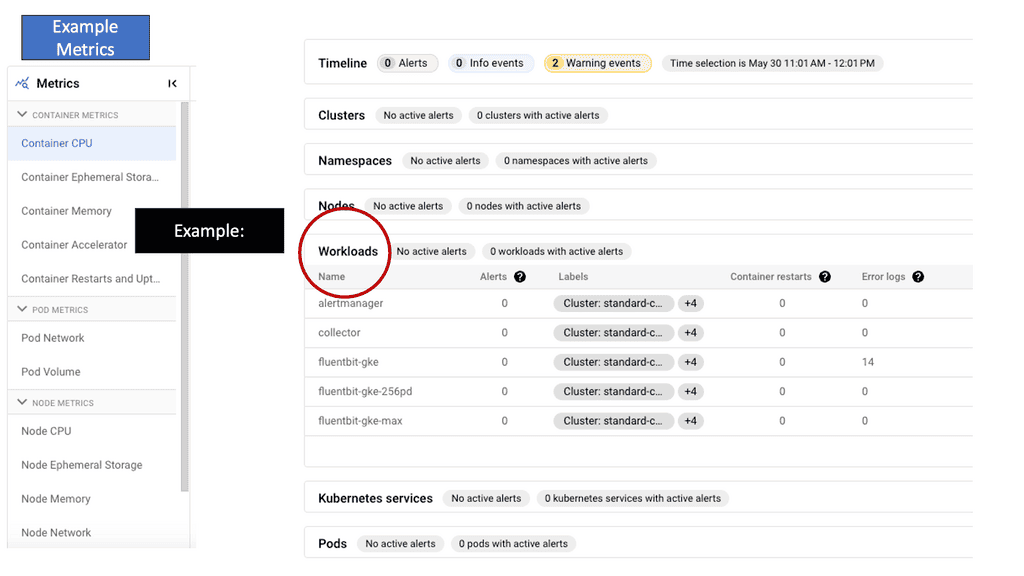

More often than not, access policy models are coarse-grained and give users more access than they require, which violates the principle of least privilege. The VPN device provides only network information, and the static policy does not change dynamically as levels of trust change.

For example, the user’s anti-virus software may be turned off, either accidentally or by malware. Or you may want to re-authenticate the user when certain actions are performed. A static policy cannot detect such changes and adjust its configuration on the fly. Instead, policy should be expressed and enforced based on identity, taking both the user and the device into account.
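By contrast, a policy tied to identity and device state can be re-evaluated whenever the device changes. This is a minimal sketch assuming the endpoint reports its posture (anti-virus running, disk encrypted) to the policy engine; the `DeviceState`, `Session`, `grant`, and `on_posture_change` names and the specific posture checks are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class DeviceState:
    antivirus_running: bool
    disk_encrypted: bool


@dataclass
class Session:
    user: str
    device: DeviceState
    granted_apps: Set[str] = field(default_factory=set)


# Hypothetical posture requirements every device must meet.
REQUIRED_POSTURE = ("antivirus_running", "disk_encrypted")


def posture_ok(device: DeviceState) -> bool:
    return all(getattr(device, attr) for attr in REQUIRED_POSTURE)


def grant(session: Session, app: str) -> bool:
    """Grant an application only while the device meets the posture policy."""
    if posture_ok(session.device):
        session.granted_apps.add(app)
        return True
    return False


def on_posture_change(session: Session, new_state: DeviceState) -> Set[str]:
    """Re-evaluate trust on every device report; return any revoked apps."""
    session.device = new_state
    if not posture_ok(new_state):
        revoked = set(session.granted_apps)
        session.granted_apps.clear()
        return revoked
    return set()


session = Session("alice", DeviceState(antivirus_running=True, disk_encrypted=True))
grant(session, "payroll")
# Anti-virus is switched off: access is withdrawn without any manual rule change.
print(on_posture_change(session, DeviceState(antivirus_running=False, disk_encrypted=True)))  # {'payroll'}
print(session.granted_apps)  # set()
```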

SDP VPN

Adoption of new technology can be slow at first. The primary reason may be a lack of understanding that what you have in place today is not the best option for your organization’s future. Maybe now is the time to step back and ask whether this is the future we want.

The existing technologies you have spent money and time on are not evolving at the pace of today’s digital environment, which signals the need to add new capabilities. That need is interpreted differently by an organization’s CTO and CIO. CTOs are passionate about embracing new technologies and investing in the future; they are always looking to take advantage of new and exciting technological opportunities. The CIO, however, looks at things differently. Usually, the CIO prefers to stay with the known and is reluctant to make changes that risk a loss of service. Their main aim is to keep the lights on.

This highlights the need to find a middle ground: adopting a new technology that brings real benefits to your organization. The technology should satisfy the CTO while taking every precaution and not disrupting day-to-day operations.

- The push by the marketers

There is a clash between what is needed and what the market is pushing. The prevailing SDP industry approach encourages customers to rip and replace their VPN to deploy a software-defined perimeter. But customers have invested in comprehensive VPNs and are reluctant to replace them.

The SDP market initially pushed for a rip-and-replace model, which would eliminate traditional security tools and technologies. This should not be the default recommendation, since SDP functionality can overlap with the VPN. Although existing VPN solutions have their drawbacks, there should be an option to run SDP in parallel, offering the best of both worlds.

Software-defined perimeter: How does Safe-T address this?

Safe-T understands there is a need to go down the SDP VPN path, but you may be reluctant to do a full or partial VPN replacement. So let’s take your existing VPN architecture and add the SDP capability.

The solution is placed behind your VPN. The existing VPN communicates with Safe-T ZoneZero, which performs the SDP functions after the VPN device. End users continue to use their existing VPN client and operate as usual, with no change in behavior.

Users authenticate with the existing VPN as before. However, for the actual authentication the VPN communicates with the SDP layer instead of with, for example, Active Directory (AD).

What do you get from this? From an end user’s perspective, their day-to-day process does not change. Also, instead of placing the users on your network as you would with a VPN, they are switched to application-based access. Even though they use a traditional VPN to connect, they are still getting the full benefits of SDP.

This is a perfect stepping stone on the path toward SDP, providing a solid bridge to a full SDP deployment. It lowers the risk and cost of adopting the new technology with minimal infrastructure changes and removes much of the pain of deployment.

The ZoneZero™ deployment models

Safe-T offers two deployment models: ZoneZero Single-Node and ZoneZero Dual-Node.

In the single-node deployment, a ZoneZero virtual machine sits between the external firewall/VPN and the internal firewall. All VPN traffic is routed to the ZoneZero virtual machine, which controls which traffic continues into the organization.
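Conceptually, the gateway in the single-node model acts as a default-deny filter for traffic arriving from the VPN. The sketch below assumes each VPN client is identified by its VPN-assigned IP address and that SDP authentication has already produced a set of permitted (destination IP, port) pairs; the `GatewayFilter` class and the addresses are hypothetical and do not describe how ZoneZero is built.

```python
from typing import Dict, Iterable, Set, Tuple

Destination = Tuple[str, int]  # (destination IP, destination port)


class GatewayFilter:
    """Sits between the VPN and the internal firewall; forwards nothing by default."""

    def __init__(self) -> None:
        self.authorized: Dict[str, Set[Destination]] = {}

    def authorize(self, client_ip: str, destinations: Iterable[Destination]) -> None:
        # Called after the client has been authenticated and its policy evaluated.
        self.authorized.setdefault(client_ip, set()).update(destinations)

    def revoke(self, client_ip: str) -> None:
        self.authorized.pop(client_ip, None)

    def should_forward(self, client_ip: str, dst_ip: str, dst_port: int) -> bool:
        return (dst_ip, dst_port) in self.authorized.get(client_ip, set())


gateway = GatewayFilter()
gateway.authorize("10.8.0.15", {("192.168.10.20", 443)})
print(gateway.should_forward("10.8.0.15", "192.168.10.20", 443))  # True
print(gateway.should_forward("10.8.0.15", "192.168.10.21", 22))   # False
```

Because only explicitly authorized destinations pass, a user who is connected to the VPN still cannot reach the rest of the internal network.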

In the dual-node deployment, the ZoneZero virtual machine also sits between the external firewall/VPN and the internal firewall, and an access controller sits in one of the LAN segments behind the internal firewall.

In both cases, the user opens the IPsec or SSL VPN client and enters their credentials. The existing VPN device receives the credentials and passes them over RADIUS or an API to ZoneZero for authentication.
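When the VPN forwards credentials over RADIUS, it acts as a RADIUS client and sends an Access-Request in which the User-Password attribute is hidden using the shared secret and the 16-byte Request Authenticator, as specified in RFC 2865, section 5.2. The sketch below implements only that hiding step and its inverse on the server side, assuming ZoneZero plays the RADIUS server role; it leaves out the rest of the packet encoding and the server’s reply, and the secret and password values are placeholders.

```python
import hashlib
import os


def hide_password(password: bytes, secret: bytes, authenticator: bytes) -> bytes:
    """RFC 2865 section 5.2: obfuscate User-Password for an Access-Request."""
    if len(authenticator) != 16:
        raise ValueError("Request Authenticator must be 16 bytes")
    # Pad with NULs to a multiple of 16 octets (at least one block).
    padded = password + b"\x00" * (-len(password) % 16)
    if not padded:
        padded = b"\x00" * 16
    if len(padded) > 128:
        raise ValueError("User-Password is limited to 128 octets")
    result = b""
    previous = authenticator
    for i in range(0, len(padded), 16):
        # b_i = MD5(secret + previous ciphertext block, or the authenticator first)
        key_stream = hashlib.md5(secret + previous).digest()
        block = bytes(p ^ k for p, k in zip(padded[i:i + 16], key_stream))
        result += block
        previous = block
    return result


def recover_password(hidden: bytes, secret: bytes, authenticator: bytes) -> bytes:
    """Server side: reverse the XOR chain (assumes no trailing NULs in the password)."""
    result = b""
    previous = authenticator
    for i in range(0, len(hidden), 16):
        block = hidden[i:i + 16]
        key_stream = hashlib.md5(secret + previous).digest()
        result += bytes(c ^ k for c, k in zip(block, key_stream))
        previous = block
    return result.rstrip(b"\x00")


secret = b"shared-secret"         # configured on both the VPN and the RADIUS server
authenticator = os.urandom(16)    # fresh Request Authenticator per Access-Request
hidden = hide_password(b"correct horse", secret, authenticator)
print(recover_password(hidden, secret, authenticator))  # b'correct horse'
```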

SDP is charting the course to a new network and security architecture, and a middle ground now exists that reduces the risks associated with deployment. The only viable option is to run the existing VPN architecture in parallel with SDP. This way, you get all the benefits of SDP with minimal disruption.