Merchant Silicon

In the ever-evolving landscape of technology, innovation continues to shape how we live, work, and connect. One such groundbreaking development that has caught the attention of experts and enthusiasts alike is merchant silicon. In this blog post, we will explore merchant silicon's remarkable capabilities and its far-reaching impact across various industries.

Merchant silicon refers to off-the-shelf silicon chips designed and manufactured by third-party companies. These versatile chips can be used in various applications and offer cost-effective solutions for businesses.

Flexibility and Customizability: Merchant Silicon provides network equipment manufacturers with the flexibility to choose from a wide range of components and features, tailoring their solutions to meet specific customer needs. This flexibility enables faster time-to-market and promotes innovation in the networking industry.

Cost-Effectiveness: By leveraging off-the-shelf components, Merchant Silicon significantly reduces the cost of developing networking equipment. This cost advantage makes high-performance networking solutions more accessible, driving competition and fostering technological advancements.

Enhanced Network Performance and Scalability: Merchant Silicon is designed to deliver high-performance networking capabilities, offering increased bandwidth and throughput. This enables faster data transfer rates, reduced latency, and improved overall network performance.

Advanced Packet Processing: Merchant Silicon chips incorporate advanced packet processing features, such as flexible header parsing, packet classification, and traffic prioritization. These features enhance network efficiency, allowing for more intelligent routing and improved Quality of Service (QoS).
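To make "traffic prioritization" concrete, here is a minimal Python sketch of strict-priority queuing, one common scheduling discipline that switch ASICs implement in hardware. The DSCP-to-queue mapping, the queue count, and the class name are illustrative assumptions, not any particular chip's defaults.

```python
from collections import deque
from typing import Deque, List, Optional

# Hypothetical DSCP -> queue mapping (higher queue number = higher priority).
# Real ASICs expose configurable tables; these values are illustrative only.
DSCP_TO_QUEUE = {46: 2, 26: 1, 0: 0}  # EF -> queue 2, AF31 -> queue 1, best effort -> queue 0


class StrictPriorityScheduler:
    """Toy model of strict-priority egress scheduling on a single port."""

    def __init__(self, num_queues: int = 3) -> None:
        self.queues: List[Deque[str]] = [deque() for _ in range(num_queues)]

    def enqueue(self, packet_id: str, dscp: int) -> None:
        # Unmapped DSCP values fall back to the best-effort queue.
        self.queues[DSCP_TO_QUEUE.get(dscp, 0)].append(packet_id)

    def dequeue(self) -> Optional[str]:
        # Always serve the highest-priority non-empty queue first.
        for queue in reversed(self.queues):
            if queue:
                return queue.popleft()
        return None


sched = StrictPriorityScheduler()
sched.enqueue("bulk-1", dscp=0)
sched.enqueue("voice-1", dscp=46)
sched.enqueue("video-1", dscp=26)
order = [sched.dequeue() for _ in range(3)]
print(order)  # ['voice-1', 'video-1', 'bulk-1']
```

Voice traffic leaves first even though it arrived after bulk traffic. Strict priority can starve lower queues under sustained load, which is why switch ASICs typically also offer weighted schedulers.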

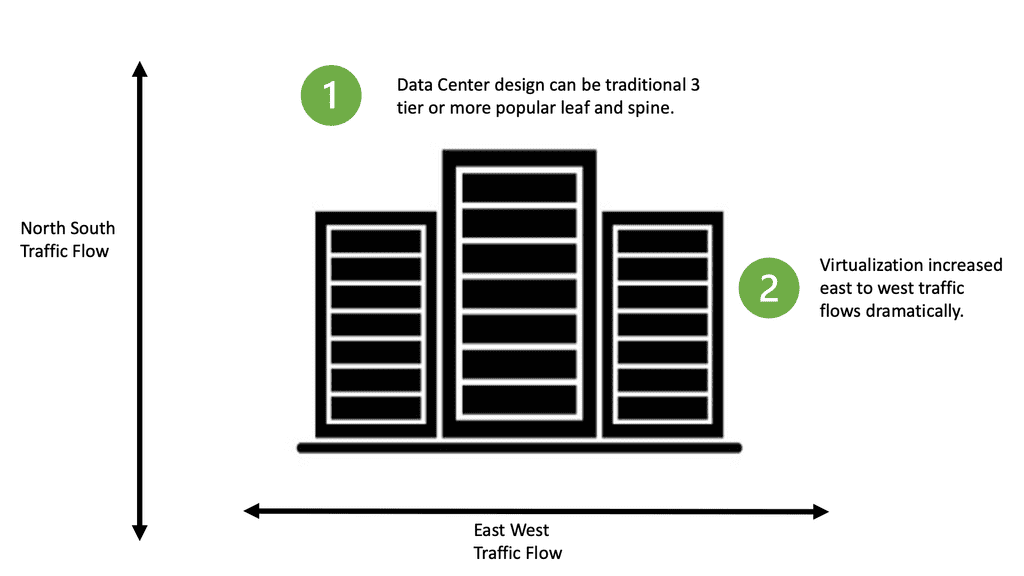

Data Centers: Merchant Silicon has found extensive use in data centers, where scalability, performance, and cost-effectiveness are paramount. By leveraging the power of Merchant Silicon, data centers can handle the ever-increasing demands of modern applications and services, ensuring seamless connectivity and efficient data processing.

Enterprise Networking: In enterprise networking, Merchant Silicon enables organizations to build robust and scalable networks. From small businesses to large enterprises, the flexibility and cost-effectiveness of Merchant Silicon empower organizations to meet their networking requirements without compromising on performance or security.

Merchant Silicon has emerged as a game-changer in the world of network infrastructure. Its flexibility, cost-effectiveness, and enhanced performance make it an attractive choice for network equipment manufacturers and organizations alike. As technology continues to advance, we can expect Merchant Silicon to play an even more significant role in shaping the future of networking.

Matt Conran

Highlights: Merchant Silicon

Understanding Merchant Silicon

– Silicon chips designed specifically for networking devices play a pivotal role in the functioning of routers, switches, and other network equipment. One type of silicon that has gained significant attention and relevance in recent years is Merchant Silicon.

– Merchant Silicon refers to off-the-shelf networking chips produced by third-party vendors. Unlike custom silicon solutions developed in-house by network equipment manufacturers, Merchant Silicon offers a standardized, cost-effective alternative. These chips are designed to meet the requirements of various networking applications and are readily available for integration into networking devices.

– Unlike traditional networking solutions that rely on proprietary chips, merchant silicon allows network equipment manufacturers to leverage readily available chipsets from third-party vendors. This opens up a world of possibilities, empowering companies to design and develop networking solutions that are highly customizable and scalable.

Silicon: Industry Impact

The emergence of merchant silicon has had a profound impact on the networking industry. It has disrupted the traditional model of vertically-integrated networking vendors and opened up opportunities for new players to enter the market. With the ability to leverage merchant silicon, smaller companies can now compete with established networking giants, fostering innovation and driving competition.

## Enhancing Network Infrastructure

One of the most significant applications of merchant silicon is in the enhancement of network infrastructure. With the rise of cloud computing and IoT devices, the demand for high-speed, reliable networks has never been greater. Merchant silicon enables the development of robust routers, switches, and other networking devices that can handle vast amounts of data with minimal latency. This capability is crucial for supporting modern digital ecosystems, where speed and reliability are paramount.

## Driving Innovation in Data Centers

Data centers are the backbone of the digital age, and merchant silicon plays a critical role in their operation. By providing the necessary hardware to manage and route data efficiently, merchant silicon helps data centers achieve higher performance levels while maintaining energy efficiency. This, in turn, supports the seamless operation of services like streaming, online gaming, and real-time data analytics, which require exceptional processing power and speed.

## Boosting Telecommunications

The telecommunications industry is also reaping the benefits of merchant silicon. As the world becomes more connected, telecom providers must ensure their networks can support increased data traffic and provide high-quality service to users. Merchant silicon allows for the development of advanced telecom equipment that can scale with rising demand, ensuring that communication remains fluid and uninterrupted.

Let’s begin by defining our terms:

- Custom silicon

The term custom silicon describes chips, usually ASICs (Application Specific Integrated Circuits), that are custom designed and typically built by the switch company that sells them. When describing such chips, I might use the term in-house. Cisco Nexus 7000 switches, for instance, use proprietary ASICs designed by Cisco.

- Merchant silicon

The term merchant silicon describes chips, usually ASICs, designed and made by a company other than the one that sells the switches they are used in. If such switches use off-the-shelf ASICs, I suppose I could buy these chips from a retail store. I’ve looked, and Wal-Mart doesn’t carry them. Broadcom’s Trident+ ASIC, for example, is used in Arista’s 7050S-64 switches.

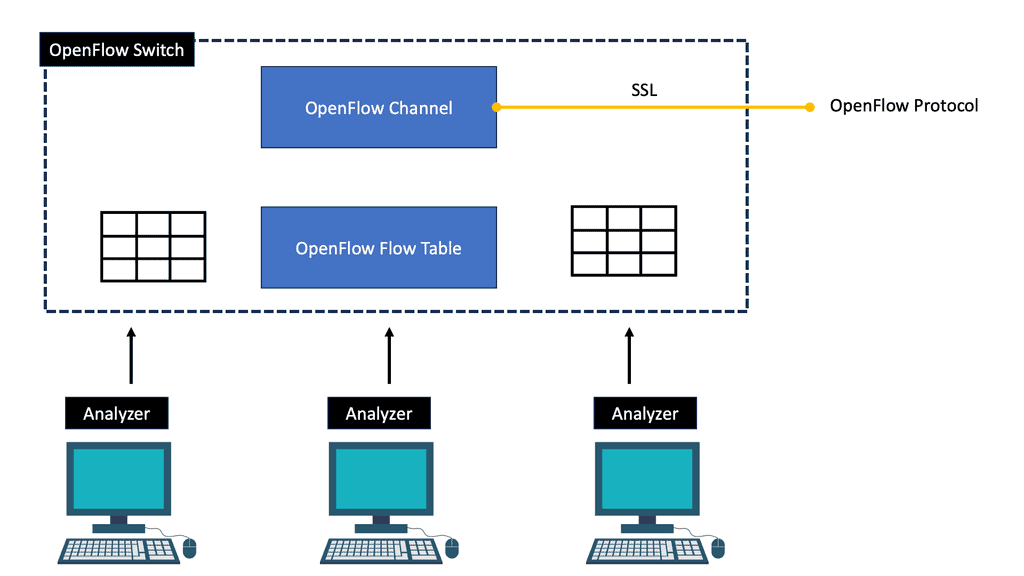

Merchant Silicon and SDN

Another potential benefit of merchant silicon lies in the future of software-defined networking (SDN). SDN resembles a cluster of switches controlled by a single software brain that runs outside the physical switches. As a result, switches become little more than ASICs that receive instructions from a master controller. In such a design, commoditized operating systems and hardware would make it easier to add any vendor’s switch to the master controller.

A switch built on merchant silicon lends itself to this design paradigm. In contrast, a switch built on custom silicon would likely support only its own vendor’s master controller.
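The controller-and-switches model can be sketched in a few lines of Python. This is a toy, not OpenFlow: the `FlowRule` fields, the `Switch` and `Controller` classes, and the vendor names are all hypothetical. It illustrates the point above: if every switch exposes the same match-action interface, one controller can program switches from any vendor.

```python
import ipaddress
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class FlowRule:
    """Hypothetical match-action entry: match a destination prefix, forward out a port."""
    priority: int
    dst_prefix: str
    out_port: int


@dataclass
class Switch:
    """A switch reduced to a flow table that a controller programs."""
    name: str
    vendor: str
    table: List[FlowRule] = field(default_factory=list)

    def install(self, rule: FlowRule) -> None:
        self.table.append(rule)
        # Keep rules in descending priority so lookup checks them in that order.
        self.table.sort(key=lambda r: r.priority, reverse=True)

    def lookup(self, dst_ip: str) -> Optional[int]:
        """Return the output port of the highest-priority matching rule, or None."""
        addr = ipaddress.ip_address(dst_ip)
        for rule in self.table:
            if addr in ipaddress.ip_network(rule.dst_prefix):
                return rule.out_port
        return None


class Controller:
    """Single 'software brain' that programs every registered switch, whatever its vendor."""

    def __init__(self) -> None:
        self.switches: Dict[str, Switch] = {}

    def register(self, switch: Switch) -> None:
        self.switches[switch.name] = switch

    def push(self, switch_name: str, rule: FlowRule) -> None:
        self.switches[switch_name].install(rule)


ctl = Controller()
ctl.register(Switch("leaf1", vendor="vendor-a"))
ctl.register(Switch("leaf2", vendor="vendor-b"))
ctl.push("leaf1", FlowRule(priority=10, dst_prefix="10.0.0.0/8", out_port=1))
ctl.push("leaf1", FlowRule(priority=100, dst_prefix="10.1.0.0/16", out_port=2))
ctl.push("leaf2", FlowRule(priority=10, dst_prefix="0.0.0.0/0", out_port=49))
print(ctl.switches["leaf1"].lookup("10.1.2.3"))  # 2
print(ctl.switches["leaf1"].lookup("10.9.9.9"))  # 1
```

The controller never needs to know which vendor built `leaf1` or `leaf2`; that independence is what a common hardware and OS layer would buy.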

The combination of Merchant Silicon and SDN creates a powerful synergy that enhances the capabilities of modern networks. Merchant Silicon provides the robust, scalable hardware foundation, while SDN adds a layer of intelligence and adaptability.

This partnership allows for the creation of networks that are not only cost-effective but also highly customizable and responsive to business needs. Organizations can now design networks that scale effortlessly, adapt to changing conditions, and optimize performance without the burdensome costs associated with proprietary solutions.

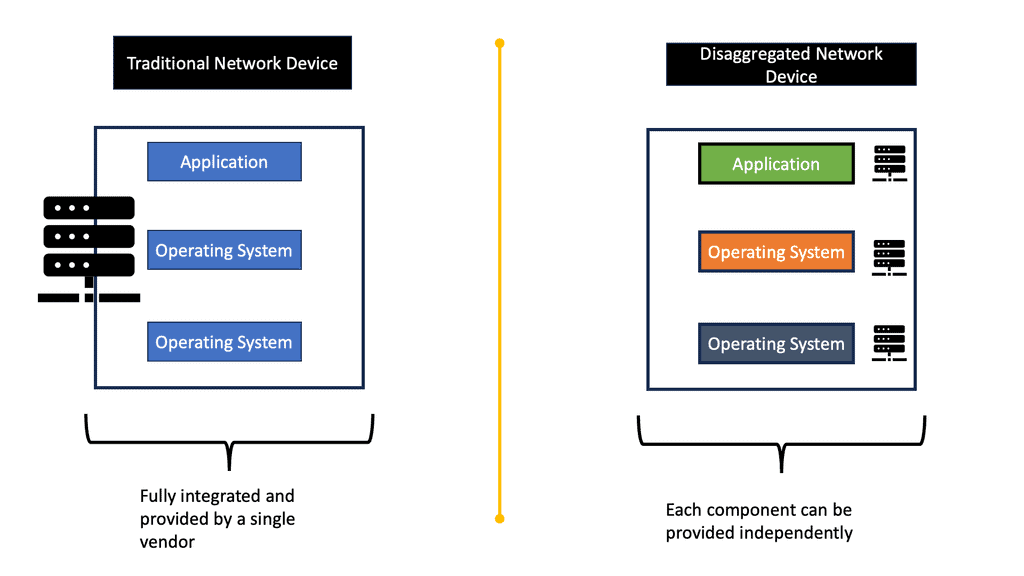

Bare-Metal Switching

Commodity switches are used in both white-box and bare-metal switching. In this way, users can purchase hardware from one vendor, purchase an operating system from another, and then load features and applications from other vendors or open-source communities.

Driven by the OpenFlow hype, white-box switching became a hot topic because it commoditized hardware and centralized network control in an OpenFlow controller (now known as an SDN controller). Google announced in 2013 that it built and controlled its switches with OpenFlow! It was much discussed at the time, but not every user is Google, so not every user will build their own hardware and software.

Meanwhile, a few companies emerged that focused solely on white-box switching solutions, including Big Switch Networks, Cumulus Networks (now owned by NVIDIA), and Pica8. They also needed hardware for their software to provide an end-to-end solution.

Originally, white-box hardware platforms were supplied by original design manufacturers (ODMs) such as Quanta Networks, Supermicro, Alpha Networks, and Accton Technology Corporation. You probably haven’t heard of those vendors, even if you’ve worked in the networking industry.

The industry shifted from calling this trend white-box to bare-metal only after Cumulus and Big Switch announced partnerships with HP and Dell Technologies. Name-brand vendors now support third-party operating systems from Big Switch and Cumulus on their hardware platforms.

You create bare-metal switches by combining switches from ODMs with NOSs from third parties, including the ones mentioned above. Many of the same switches from ODMs are now also available from traditional network vendors, as they use merchant silicon ASICs.

### What is Bare-Metal Switching?

Bare-metal switching refers to the use of network switches that are decoupled from proprietary software, allowing users to install their choice of network operating systems (NOS). This separation of hardware and software provides an unprecedented level of customization and control over network operations, enabling businesses to tailor their network to specific needs and optimize performance. By leveraging open standards and commoditized hardware, bare-metal switches can significantly reduce costs and increase the agility of network infrastructure.

### Benefits of Bare-Metal Switching

One of the primary advantages of bare-metal switching is its cost-effectiveness. By breaking free from vendor lock-in, organizations can select the best hardware and software combination for their needs, often at a fraction of the cost of traditional solutions. Additionally, bare-metal switches offer enhanced flexibility and scalability, allowing networks to adapt quickly to changing demands. This is particularly beneficial for cloud service providers and large data centers that require robust, scalable infrastructure.

### Challenges and Considerations

While bare-metal switching offers numerous benefits, it also presents some challenges that organizations must consider. Implementing a bare-metal switch requires a higher level of technical expertise, as IT teams must manage both hardware and software independently. Furthermore, ensuring compatibility between different NOS and hardware can be complex. Organizations need to carefully evaluate their technical capabilities and resources before transitioning to a bare-metal architecture.
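One way teams manage the compatibility problem is to check a candidate switch against each NOS vendor's published hardware compatibility list (HCL) before buying. The sketch below models that check; the NOS names, ODM names, models, and ASIC names are hypothetical placeholders, not real compatibility data. In this model, support is listed per platform rather than per ASIC, since two boards built around the same ASIC can still differ in other components and drivers.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class Platform:
    """A bare-metal switch identified by its ODM, model, and switching ASIC."""
    odm: str
    model: str
    asic: str


# Hypothetical HCLs: NOS name -> supported (ODM, model) pairs.
# Keyed by platform, not ASIC: boards sharing an ASIC may still be unsupported.
HCL: Dict[str, Set[Tuple[str, str]]] = {
    "nos-alpha": {("odm-x", "48x10G-6x40G"), ("odm-y", "32x100G")},
    "nos-beta": {("odm-y", "32x100G")},
}


def supported_nos(platform: Platform) -> List[str]:
    """Return every NOS whose HCL lists this exact ODM/model pair, sorted by name."""
    key = (platform.odm, platform.model)
    return sorted(nos for nos, platforms in HCL.items() if key in platforms)


box1 = Platform("odm-x", "48x10G-6x40G", asic="asic-1")
box2 = Platform("odm-z", "48x10G-6x40G", asic="asic-1")  # same ASIC, different board
box3 = Platform("odm-y", "32x100G", asic="asic-2")
print(supported_nos(box1))  # ['nos-alpha']
print(supported_nos(box2))  # []
print(supported_nos(box3))  # ['nos-alpha', 'nos-beta']
```

`box2` shares an ASIC with `box1` but appears on no list, which is exactly the kind of gap an IT team has to catch before committing to a hardware and software pairing.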

Landscape Changes

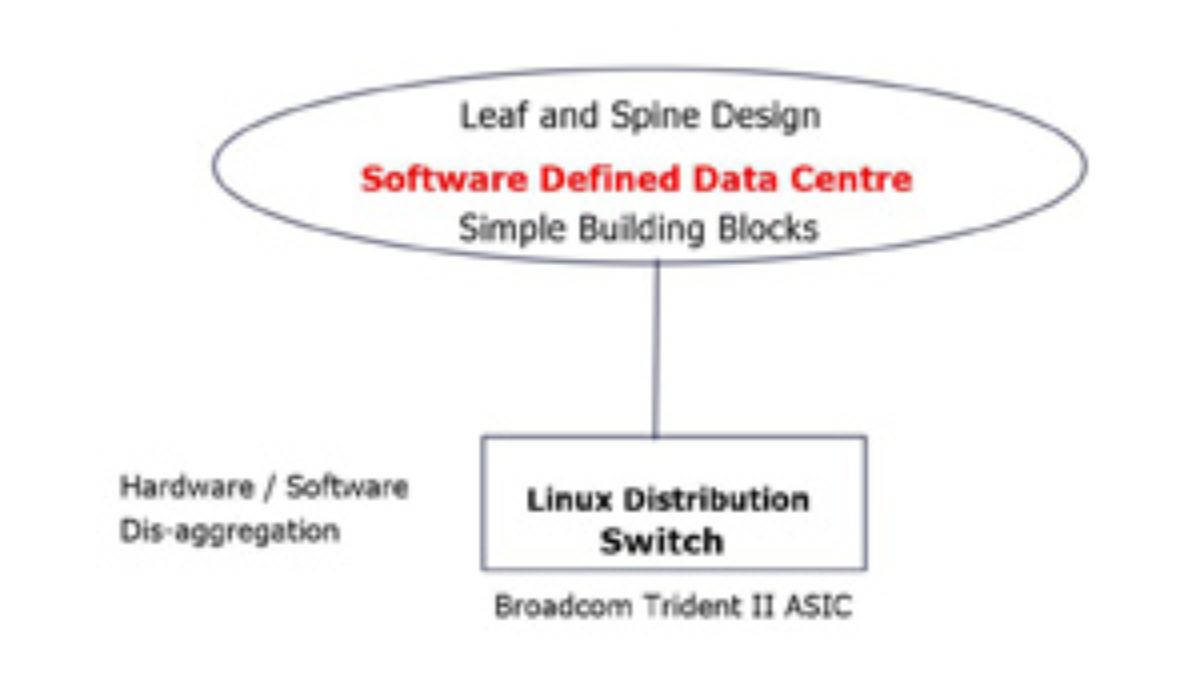

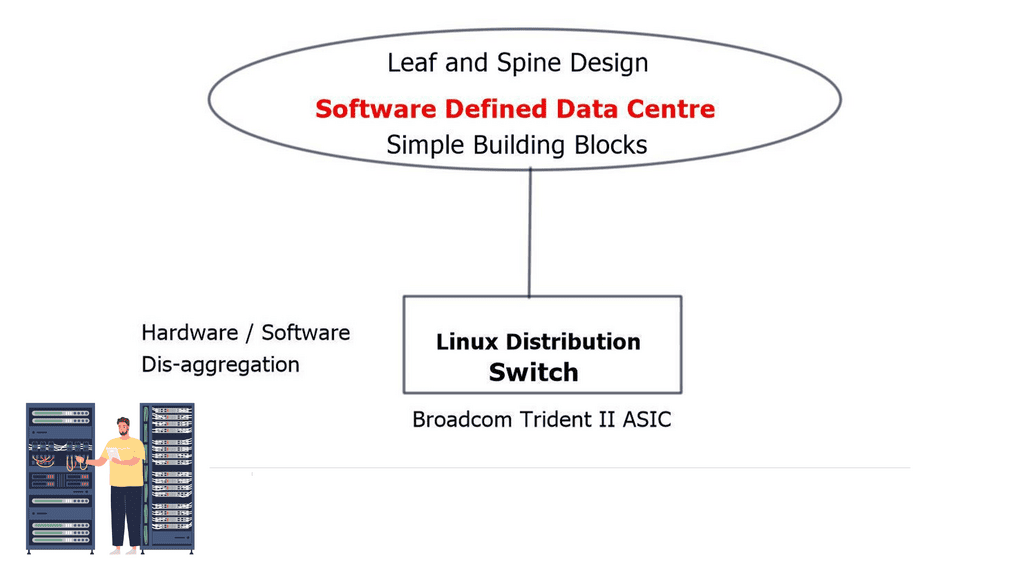

Some data center vendors offer a Debian-based operating system for network equipment. Their philosophy is that engineers should manage switches just as they manage servers, using existing server administration tools, so that networking works like a server application. For example, Cumulus created what it called the first full-featured Linux distribution for network hardware. It allows designers to break free from proprietary networking equipment and take advantage of the SDN data center.
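Because a Linux-based NOS exposes switch ports as ordinary network interfaces, standard server tooling works on them. As a minimal sketch, the Python below uses the standard library's `socket.if_nameindex()` to list interfaces and pick out front-panel ports. It assumes the Cumulus Linux convention of naming front-panel ports `swp1`, `swp2`, and so on; the `front_panel_ports` helper is hypothetical, and on an ordinary server the filtered list will simply be empty.

```python
import re
import socket
from typing import Iterable, List

# Assumption: front-panel ports follow the Cumulus Linux "swpN" naming;
# management and loopback interfaces such as "eth0" and "lo" are excluded.
FRONT_PANEL = re.compile(r"^swp\d+$")


def front_panel_ports(names: Iterable[str]) -> List[str]:
    """Filter interface names down to front-panel switch ports, in numeric order."""
    ports = [n for n in names if FRONT_PANEL.match(n)]
    # Sort numerically so swp10 comes after swp2.
    return sorted(ports, key=lambda n: int(n[3:]))


if __name__ == "__main__":
    # On the switch itself, read the live interface list exactly as on a server.
    live = [name for _, name in socket.if_nameindex()]
    print(front_panel_ports(live))
```

Nothing here is switch-specific: the same script runs unchanged on a server, which is the operational point of treating the switch as a Linux host.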

**Issues with Traditional Networking**

Cloud computing, distributed storage, and virtualization technologies are changing the operational landscape. Traditional networking concepts do not align with these new requirements and repeatedly block business initiatives. Decoupling hardware from software is required to keep pace with the speed and agility of cloud deployments and emerging technologies.

Merchant silicon is a term used to describe chips, usually ASICs (Application-Specific Integrated Circuits), that are developed by an entity other than the company selling the switches. Custom silicon is the opposite: chips, usually ASICs, that are custom-designed and traditionally built by the company selling the switches in which they are used.


Disaggregation Model

Disaggregation is the next logical evolution in data center topologies. Cumulus does not try to reinvent the wheel; it believes that routing and bridging work well and sees no reason to change them. Instead, it uses existing protocols to build on the original networking concepts, and the technologies it offers are based on well-designed, existing feature sets. Its OS brings the server model of hardware/software disaggregation to switch design.

Disaggregation decouples hardware from software on individual network elements. Much of today’s networking equipment is proprietary, which makes it expensive and complicated to manage. Disaggregation allows designers to break free from vertically integrated networking gear and to make hardware and software procurement decisions separately.

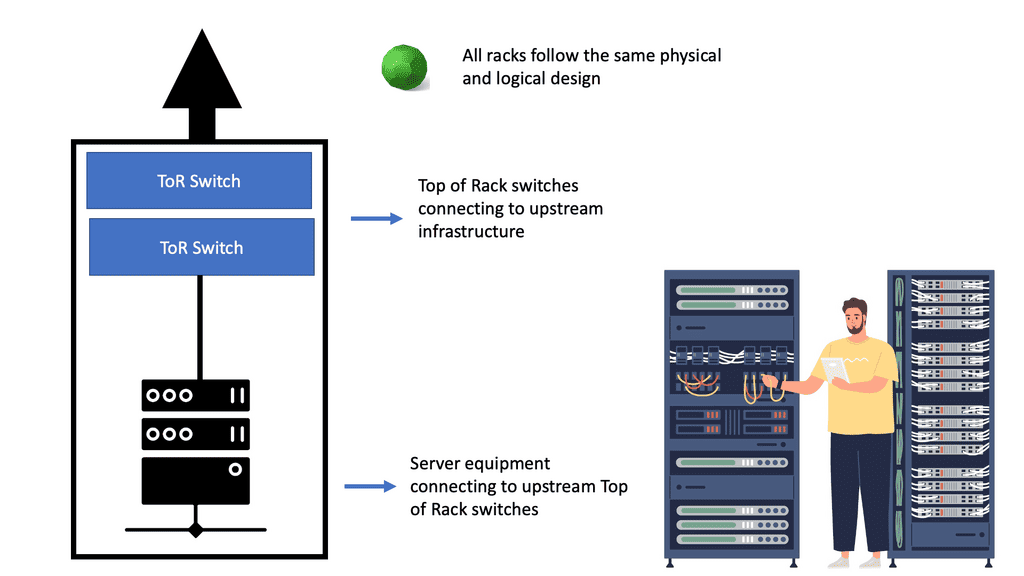

Data center topology types and merchant silicon

Previously, we needed proprietary hardware to provide networking functionality. Now, many of those functions are available in merchant silicon. In the last ten years, we have seen a massive increase in the production of merchant silicon, that is, “off-the-shelf” chip components used to build network products that enable open networking. At the time of writing, the three major players in 10GbE and 40GbE switch ASICs were Broadcom, Fulcrum (acquired by Intel in 2011), and Fujitsu.

In addition, Cumulus supports Broadcom’s Trident II switch ASIC, which is also used in the Cisco Nexus 9000 series. Merchant silicon typically offers a far better price/performance ratio than proprietary ASICs.

Routing isn’t broken – Simple building blocks.

To disaggregate networking, we must first simplify it. Networking is complicated, and sometimes less is more. It is possible to build robust ecosystems from simple building blocks using existing Layer 2 and Layer 3 protocols. Internet Protocol (IP) is the underlying base technology of every large data center. MPLS is an attractive alternative, but IP is the mature building block today. IP is an open standard, unlike Multichassis Link Aggregation (MLAG), which is vendor-specific.
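Part of what makes IP a good building block is equal-cost multipath (ECMP): a leaf with several equal-cost routes to a destination hashes each flow onto one of them, so every uplink carries traffic without any vendor-specific protocol. The sketch below is a simplified model that assumes a SHA-256 hash of the 5-tuple with modulo selection; real ASICs use their own hardware hash functions, and the spine names and flows are made up.

```python
import hashlib
from typing import Dict, List, Tuple

# (src_ip, dst_ip, src_port, dst_port, protocol)
FiveTuple = Tuple[str, str, int, int, str]


def ecmp_next_hop(flow: FiveTuple, next_hops: List[str]) -> str:
    """Map a flow onto one equal-cost next hop; the same flow always gets the same path."""
    key = "|".join(str(part) for part in flow).encode()
    digest = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    return next_hops[digest % len(next_hops)]


spines = ["spine1", "spine2", "spine3", "spine4"]
flows: List[FiveTuple] = [("10.0.1.10", "10.0.2.20", 40000 + i, 443, "tcp") for i in range(1000)]
counts: Dict[str, int] = {s: 0 for s in spines}
for f in flows:
    counts[ecmp_next_hop(f, spines)] += 1
print(counts)
```

Hashing per flow rather than per packet keeps each TCP connection on one path and avoids reordering. Plain modulo hashing remaps many flows when a next hop is added or removed; some ASICs offer resilient hashing to limit that churn.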

Multichassis Link Aggregation (MLAG) implementation

Each vendor has its own MLAG variant; some operate with a unified control plane and others with separate control planes. MLAG implementations with a unified control plane include Juniper Virtual Chassis, HP Intelligent Resilient Framework (IRF), Cisco Virtual Switching System (VSS), and cross-stack EtherChannel. MLAG implementations with separate control planes include Cisco Virtual Port-Channel (vPC) and Arista MLAG.

Despite the number of vendors, there is no standard for MLAG. Where specific VLANs can be isolated to particular ToRs, Layer 3 is the preferred alternative. Cumulus implements MLAG as a daemon written in Python.

How MLAG is translated to the hardware is ASIC-independent, so in theory, you could run MLAG between two boxes that use different chipsets. Like other vendors’ MLAG implementations, it is limited to two switches. If you need to scale beyond that, move to IP. The beauty of IP is that you can do a great deal without relying on proprietary technologies.
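The two-switch limit and the failover behavior can be illustrated with a toy model. In this sketch (all names hypothetical), a host's bond has one member link to each MLAG peer; flows are hashed across the live links, and when one peer fails every flow moves to the survivor. It deliberately ignores the peer link, MAC synchronization, and the control-plane messaging a real MLAG daemon handles, and CRC32 is only a stand-in for the hardware hash.

```python
import zlib
from typing import Dict, List


class MlagBond:
    """Host-side view of a bond whose two member links land on different switches."""

    def __init__(self, peers: List[str]) -> None:
        if len(peers) != 2:
            # Mirrors the limitation described above: MLAG pairs exactly two switches.
            raise ValueError("an MLAG pair has exactly two peer switches")
        self.up: Dict[str, bool] = {peer: True for peer in peers}

    def fail(self, peer: str) -> None:
        self.up[peer] = False

    def member_for(self, flow_id: str) -> str:
        live = [peer for peer, ok in self.up.items() if ok]
        if not live:
            raise RuntimeError("both MLAG peers are down")
        # Stable hash so a flow stays on one link while both peers are up.
        return live[zlib.crc32(flow_id.encode()) % len(live)]


bond = MlagBond(["leaf1a", "leaf1b"])
before = {bond.member_for(f"flow{i}") for i in range(100)}
bond.fail("leaf1a")
after = {bond.member_for(f"flow{i}") for i in range(100)}
print(sorted(before), sorted(after))
```

Before the failure, flows spread over both peers; afterwards, all of them land on `leaf1b`. Adding a third peer is rejected by design, which is where scaling out with routed IP takes over.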

Data center topology types: A design for simple failures

Everyone building networks at scale builds them as loosely coupled systems. People are not trying to over-engineer and build exact systems. High-performance clusters are specialized applications that must be built a certain way, but a general-purpose cloud is not built that way: operators build “generic” applications over “generic” infrastructure. Designing networks from simple building blocks leads to simpler designs with simple failures, whereas over-engineered networks experience complex failures that are time-consuming to troubleshoot. When things fail, they should fail cleanly.

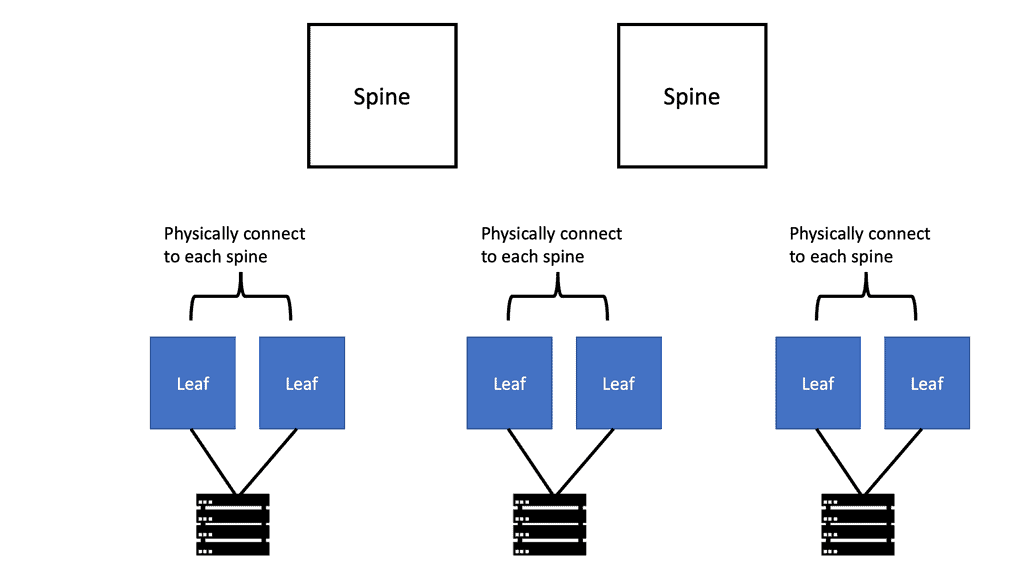

Building blocks should follow straightforward rules. Designers understand that you can build extensive networks from simple rules and building blocks. For example, a spine-leaf architecture can look complicated, but in terms of the networking fabric, the Cumulus ecosystem is made of one straightforward building block: fixed form-factor switches. That makes failures very simple.
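The arithmetic behind fixed form-factor building blocks is simple, which is part of the appeal. The sketch below assumes every leaf has exactly one uplink to every spine and that all uplinks run at the same speed; the port counts and speeds in the example are illustrative, not taken from a specific product. It computes fabric size, the leaf oversubscription ratio, and how much uplink capacity each leaf keeps when spines fail.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LeafSpine:
    spines: int
    spine_ports: int       # ports per spine; each leaf consumes one port on every spine
    leaf_host_ports: int   # host-facing downlinks per leaf
    host_gbps: float
    uplink_gbps: float

    def max_leaves(self) -> int:
        return self.spine_ports

    def max_hosts(self) -> int:
        return self.max_leaves() * self.leaf_host_ports

    def oversubscription(self) -> float:
        """Leaf downlink bandwidth divided by leaf uplink bandwidth."""
        return (self.leaf_host_ports * self.host_gbps) / (self.spines * self.uplink_gbps)

    def capacity_left_after_spine_failures(self, failed: int) -> float:
        """Fraction of each leaf's uplink bandwidth that survives `failed` spine failures."""
        return (self.spines - failed) / self.spines


fabric = LeafSpine(spines=4, spine_ports=32, leaf_host_ports=48, host_gbps=10, uplink_gbps=40)
print(fabric.max_leaves(), fabric.max_hosts())       # 32 1536
print(fabric.oversubscription())                     # 3.0
print(fabric.capacity_left_after_spine_failures(1))  # 0.75
```

Losing one of four spines costs each leaf a quarter of its uplink bandwidth and nothing else changes, which is the kind of simple, predictable failure the text describes.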

On the other hand, if a chassis-based switch fails, you need to troubleshoot many aspects. Did the line card lose its connection to the backplane? Is the backplane failing? All these troubleshooting steps add complexity. With the disaggregated model, networks fail in simple ways, and nobody wants to troubleshoot a complex failure while the network is down. Cumulus tries to keep the base infrastructure simple rather than implementing every tool and technology.

For example, if you use Layer 2, MLAG is your only topology. STP serves simply as a fail-stop mechanism, not as a fast-convergence mechanism. Rapid Spanning Tree Protocol (RSTP) and Bridge Protocol Data Units (BPDUs) are all you need to build straightforward networks.
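Using spanning tree as a fail-stop mechanism usually means protecting host-facing edge ports: if an edge port ever receives a BPDU, something unexpected (a rogue switch or a loop) is attached, so the port is shut down rather than allowed to reconverge. This behavior is commonly called BPDU guard. The Python below is a toy state machine of that idea with hypothetical port names, not any NOS's implementation.

```python
from enum import Enum
from typing import Dict, Iterable


class PortState(Enum):
    FORWARDING = "forwarding"
    ERR_DISABLED = "err-disabled"


class EdgePortGuard:
    """Fail-stop handling for host-facing ports: a received BPDU disables the port."""

    def __init__(self, ports: Iterable[str]) -> None:
        self.state: Dict[str, PortState] = {p: PortState.FORWARDING for p in ports}

    def on_bpdu(self, port: str) -> None:
        # Hosts should not send BPDUs, so one arriving here means a switch or loop
        # was plugged in. Stop forwarding instead of trying to reconverge.
        self.state[port] = PortState.ERR_DISABLED

    def can_forward(self, port: str) -> bool:
        return self.state[port] is PortState.FORWARDING


guard = EdgePortGuard(["swp1", "swp2"])
guard.on_bpdu("swp2")
print(guard.can_forward("swp1"), guard.can_forward("swp2"))  # True False
```

The failure stays contained to one port and is obvious to an operator, which is the point of a fail-stop design.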

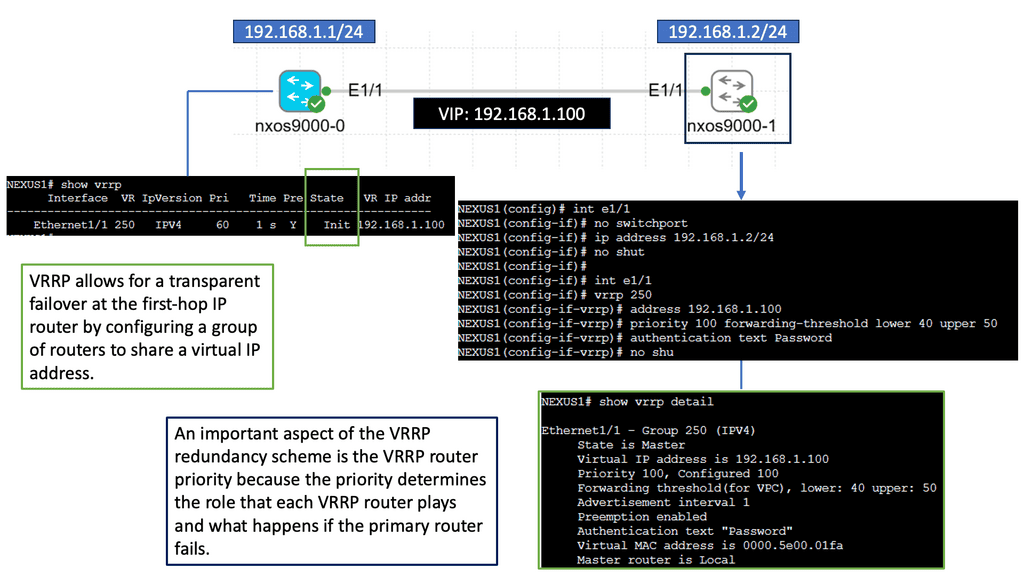

Virtual router redundancy

First Hop Redundancy Protocol (FHRP) now becomes trivial. Cumulus uses an anycast virtual IP/MAC, eliminating complex FHRP protocols; you do not need a protocol in your MLAG topology to keep your network running. Cumulus supports a variation of the Virtual Router Redundancy Protocol (VRRP) known as Virtual Router Redundancy (VRR). It is like VRRP without the protocol and supports an active-active setup, allowing hosts to communicate with redundant routers without dynamic routing or router-redundancy protocols.
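The active-active idea behind VRR can be modeled without any protocol at all: both MLAG peers are configured with the same virtual IP and virtual MAC, so a host's ARP entry for its gateway stays valid no matter which router answers or which one fails. The addresses and router names below are hypothetical; `00:00:5e:00:01:01` is simply a VRRP-style virtual MAC chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional

VIRTUAL_IP = "10.1.10.1"
VIRTUAL_MAC = "00:00:5e:00:01:01"


@dataclass
class Router:
    name: str
    up: bool = True

    def answer_arp(self, ip: str) -> Optional[str]:
        # Every peer owns the same virtual IP/MAC, so any live peer replies identically.
        return VIRTUAL_MAC if self.up and ip == VIRTUAL_IP else None

    def accepts(self, dst_mac: str) -> bool:
        # Any live peer routes frames sent to the shared virtual MAC (active-active).
        return self.up and dst_mac == VIRTUAL_MAC


def resolve_gateway(routers: List[Router]) -> Optional[str]:
    """Host-side ARP: take the first reply from any live router."""
    for r in routers:
        mac = r.answer_arp(VIRTUAL_IP)
        if mac:
            return mac
    return None


peers = [Router("leaf1a"), Router("leaf1b")]
gateway_mac = resolve_gateway(peers)  # the host caches this once
peers[0].up = False                   # one peer fails
still_routed = any(r.accepts(gateway_mac) for r in peers)
print(gateway_mac, still_routed)  # 00:00:5e:00:01:01 True
```

No hellos, timers, or elections are involved: the host's cached gateway MAC keeps working because the surviving peer already owns it.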

A Final Point: Merchant Silicon

For years, networking giants relied on proprietary chips to power their devices. However, with the advent of merchant silicon, the landscape has dramatically shifted. Companies like Broadcom, Intel, and Marvell have pioneered the development of these versatile chips, making them accessible to a broader range of manufacturers. This democratization of technology has led to increased competition and innovation in the networking sector, benefiting both businesses and consumers.

One of the primary advantages of merchant silicon is its cost efficiency. By leveraging standardized chips, manufacturers can reduce research and development expenses, leading to more affordable networking solutions. Furthermore, merchant silicon offers enhanced flexibility, enabling companies to adapt their products quickly to meet changing market demands. The interoperable nature of these chips also facilitates seamless integration across different networking devices, ensuring compatibility and ease of deployment.

The rise of software-defined networking (SDN) and network functions virtualization (NFV) has further amplified the role of merchant silicon. These technologies decouple network functions from hardware, allowing them to run on standardized servers powered by merchant silicon. This shift not only reduces costs but also accelerates service deployment, enhances network agility, and simplifies management. As a result, businesses can optimize their network infrastructure to support modern applications and services more efficiently.

While merchant silicon offers numerous benefits, it is not without its challenges. One concern is the potential for reduced differentiation, as multiple manufacturers use the same underlying technology. To mitigate this, companies must focus on developing unique software and features that set them apart from competitors. Additionally, as with any technology, ensuring robust security measures is crucial to protect networks from potential threats and vulnerabilities.

Summary: Merchant Silicon

Merchant Silicon has emerged as a game-changer in network infrastructure, transforming how data centers and networking systems operate and offering greater flexibility, scalability, and cost-efficiency. This summary recaps the concept of Merchant Silicon, its benefits, and its impact on modern networks.

Understanding Merchant Silicon

Merchant Silicon refers to using off-the-shelf, commercially available silicon chips in networking devices instead of proprietary, custom-built chips. These off-the-shelf chips are developed and manufactured by third-party vendors, providing network equipment manufacturers (NEMs) with a cost-effective and highly versatile alternative to in-house chip development. By leveraging Merchant Silicon, NEMs can focus on software innovation and system integration, streamlining product development cycles and reducing time-to-market.

Key Benefits of Merchant Silicon

Enhanced Flexibility: Merchant Silicon allows network equipment manufacturers to choose from a wide range of silicon chip options, providing the flexibility to select the most suitable chips for their specific requirements. This flexibility enables rapid customization and optimization of networking devices, catering to diverse customer needs and market demands.

Scalability and Performance: Merchant Silicon scales well. By incorporating the latest advances in chip technology from multiple vendors, networking devices can deliver high performance, higher bandwidth, and lower latency. This scalability helps networks adapt to evolving demands and handle increasing data traffic effectively.

Cost Efficiency: Using off-the-shelf chips, NEMs can significantly reduce manufacturing costs as the chip design and fabrication burden is shifted to specialized vendors. This cost advantage also extends to customers, making network infrastructure more affordable and accessible. The competitive market for Merchant Silicon also drives innovation and price competition among chip vendors, resulting in further cost savings.

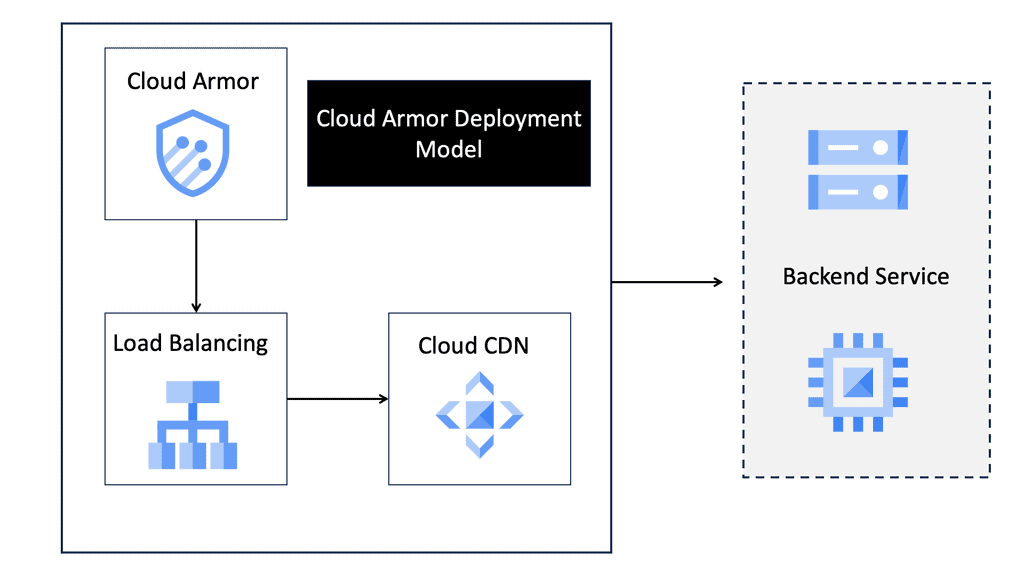

Applications and Industry Impact

Data Centers: Merchant Silicon has revolutionized data center networks by enabling the development of high-performance, software-defined networking (SDN) solutions. These solutions offer unparalleled agility, scalability, and programmability, allowing data centers to manage the increasing complexity of modern workloads and applications efficiently.

Telecommunications: The telecommunications industry has embraced Merchant Silicon to accelerate the deployment of next-generation networks such as 5G. By leveraging the power of off-the-shelf chips, telecommunication companies can rapidly upgrade their infrastructure, deliver faster and more reliable connectivity, and support emerging technologies like edge computing and the Internet of Things (IoT).

Challenges and Future Outlook

Integration and Compatibility: While Merchant Silicon offers numerous benefits, integrating third-party chips into existing network architectures can present compatibility challenges. Close collaboration between chip vendors, NEMs, and software developers is needed to ensure seamless integration and optimal performance.

Continuous Innovation: As technology advances, chip vendors must keep pace with the networking industry’s evolving needs. Merchant Silicon’s future lies in the continuous development of cutting-edge chip designs that push the boundaries of performance, power efficiency, and integration capabilities.

Conclusion

In conclusion, Merchant Silicon has ushered in a new era of network infrastructure, empowering NEMs to build highly flexible, scalable, and cost-effective solutions. By leveraging off-the-shelf chips, businesses can unleash their networks’ true potential, adapting to changing demands and embracing future technologies. As chip technology continues to evolve, Merchant Silicon is poised to play a pivotal role in shaping the future of networking.