What is VXLAN

In the rapidly evolving networking world, virtualization has become critical for businesses seeking to optimize their IT infrastructure. One key technology that has emerged is VXLAN (Virtual Extensible LAN), which enables the creation of virtual networks independent of physical network infrastructure. In this blog post, we will delve into the concept of VXLAN, its benefits, and its role in network virtualization.

VXLAN is an encapsulation protocol designed to extend Layer 2 (Ethernet) networks over Layer 3 (IP) networks. It provides a scalable and flexible solution for creating virtualized networks, enabling seamless communication between virtual machines (VMs) and physical servers across different data centers or geographic regions.

VXLAN is a technology that creates virtual networks within an existing physical network: a Layer 2 overlay network runs on top of the existing Layer 3 network. VXLAN uses UDP as its transport protocol, so encapsulated traffic can cross any IP network that forwards UDP.

VXLAN encapsulates the original Layer 2 Ethernet frames within UDP packets, using a 24-bit VXLAN Network Identifier (VNI) to distinguish between different virtual networks. The encapsulated packets are then transmitted over the underlying IP network, enabling the creation of virtualized Layer 2 networks across Layer 3 boundaries.
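To make the 24-bit VNI concrete, the following minimal Python sketch builds and parses the 8-byte VXLAN header using the layout in RFC 7348: an 8-bit flags field with the I (valid VNI) bit set, 24 reserved bits, the 24-bit VNI, and 8 more reserved bits. The function names are hypothetical, and the sketch covers only the VXLAN header, not the outer UDP, IP, and Ethernet headers.

```python
import struct

VXLAN_HEADER_LEN = 8
I_FLAG = 0x08  # "VNI is valid" bit in the 8-bit flags field (RFC 7348)


def build_vxlan_header(vni: int) -> bytes:
    """Return the 8-byte VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags in the top byte, followed by 24 reserved bits (zero).
    # Word 2: VNI in the top 24 bits, followed by 8 reserved bits (zero).
    return struct.pack("!II", I_FLAG << 24, vni << 8)


def parse_vxlan_header(header: bytes) -> int:
    """Return the VNI carried in an 8-byte VXLAN header."""
    if len(header) < VXLAN_HEADER_LEN:
        raise ValueError("header too short")
    flags_word, vni_word = struct.unpack("!II", header[:VXLAN_HEADER_LEN])
    if not (flags_word >> 24) & I_FLAG:
        raise ValueError("I flag not set; VNI is not valid")
    return vni_word >> 8


header = build_vxlan_header(6001)
print(header.hex())                # 0800000000177100
print(parse_vxlan_header(header))  # 6001
```

Packing the VNI into the upper 24 bits of the second 32-bit word keeps the code close to the on-the-wire layout, which makes it easy to compare against a packet capture.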

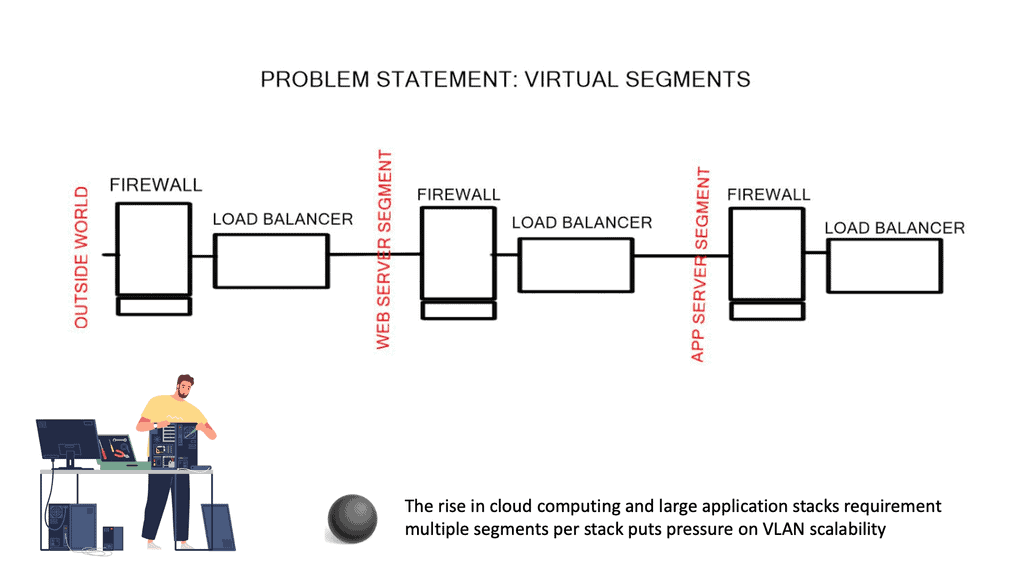

Scalability: VXLAN solves the limitations of traditional VLANs by providing a much larger network identifier space, accommodating up to 16 million virtual networks. This scalability allows for the efficient isolation and segmentation of network traffic in highly virtualized environments.

Flexibility: VXLAN enables the decoupling of virtual and physical networks, providing the flexibility to move virtual machines across different physical hosts or even data centers without the need for reconfiguration. This flexibility greatly simplifies workload mobility and enhances overall network agility.

Multitenancy: With VXLAN, multiple tenants can securely share the same physical infrastructure while maintaining isolation between their virtual networks. This is achieved by assigning unique VNIs to each tenant, ensuring their traffic remains separate and secure.

Underlay Network: VXLAN relies on an IP underlay network, which must provide sufficient bandwidth, low latency, and optimal routing. Careful planning and design of the underlay network are crucial to ensure optimal VXLAN performance.

Network Virtualization Gateway: To enable communication between VXLAN-based virtual networks and traditional VLAN-based networks, a network virtualization gateway, such as a VXLAN Gateway or an overlay-to-underlay gateway, is required. These gateways bridge the gap between virtual and physical networks, facilitating seamless connectivity.

Matt Conran

Highlights: What is VXLAN

Understanding VXLAN Basics

To understand VXLAN, start with its fundamental concepts. VXLAN enables the creation of virtualized Layer 2 networks over an existing Layer 3 infrastructure. It uses encapsulation techniques to extend Layer 2 segments over long distances, enabling flexible deployment of virtual machines across physical hosts and data centers.

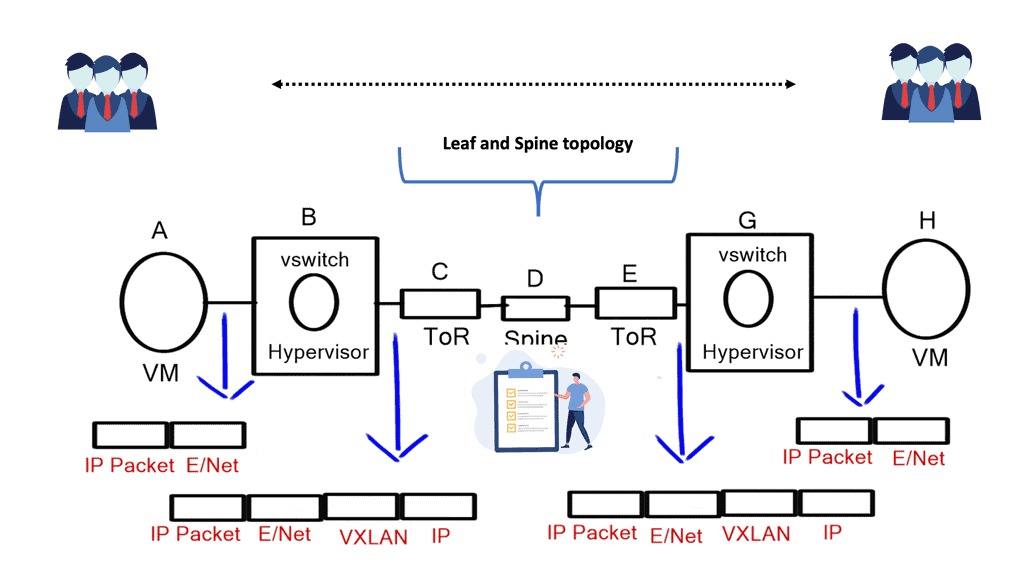

VXLAN Encapsulation: One of the key components of VXLAN is encapsulation. When a virtual machine sends data across the network, VXLAN encapsulates the original Ethernet frame within a new UDP/IP packet. This encapsulated packet is then transmitted over the underlying Layer 3 network, allowing for seamless communication between virtual machines regardless of their physical location.

VXLAN Tunneling: VXLAN employs tunneling to transport the encapsulated packets between VXLAN-enabled devices. These devices, known as VXLAN Tunnel Endpoints (VTEPs), establish tunnels to carry VXLAN traffic: the ingress VTEP adds the VXLAN and outer UDP/IP headers, and the egress VTEP removes them. VXLAN is itself the tunneling encapsulation and does not rely on Generic Routing Encapsulation (GRE); a related extension, VXLAN Generic Protocol Extension (VXLAN-GPE), allows the tunnel to carry payloads other than Ethernet.

**Benefits of VXLAN**

VXLAN brings numerous benefits to modern network architectures. It enables network virtualization and multi-tenancy, allowing for the efficient and secure isolation of network segments. VXLAN also provides scalability, as it can support a significantly higher number of virtual networks than traditional VLAN-based networks. Additionally, VXLAN facilitates workload mobility and disaster recovery, making it an ideal choice for cloud environments.

**Implementing VXLAN**

VXLAN Implementation Considerations: While VXLAN offers immense advantages, there are a few points to keep in mind when implementing it. VXLAN requires network devices that support the technology, including VTEPs and VXLAN-aware switches. It is also crucial to configure and manage the VXLAN overlay network properly to ensure optimal performance and security.

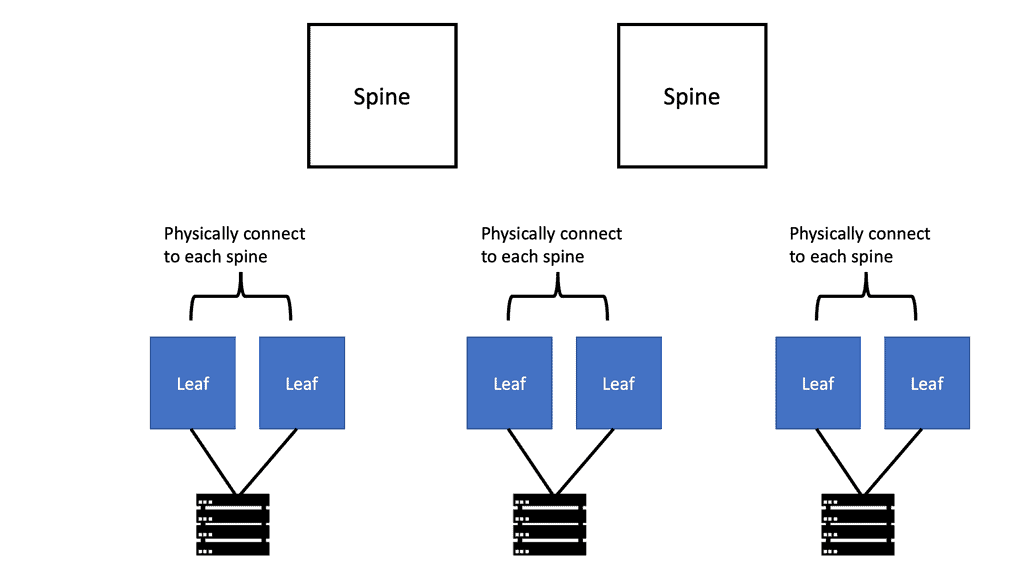

Data center evolution

In recent years, data centers have evolved significantly, driven by technologies such as virtualization, cloud computing (private, public, and hybrid), and software-defined networking (SDN). Mobile-first and cloud-native data centers must be scalable, agile, and secure, support consolidation, and integrate with compute and storage orchestrators. Today's data center solutions are also expected to offer visibility, automation, ease of management, operability, troubleshooting, and advanced analytics.

A more service-centric approach is replacing device-by-device management. Most requests for proposals (RFPs) specify open application programming interfaces (APIs) and standards-based protocols to prevent vendor lock-in. Cisco's Virtual Extensible LAN (VXLAN)-based fabric, built from Nexus switches and NX-OS controllers, is one such standards-based design.

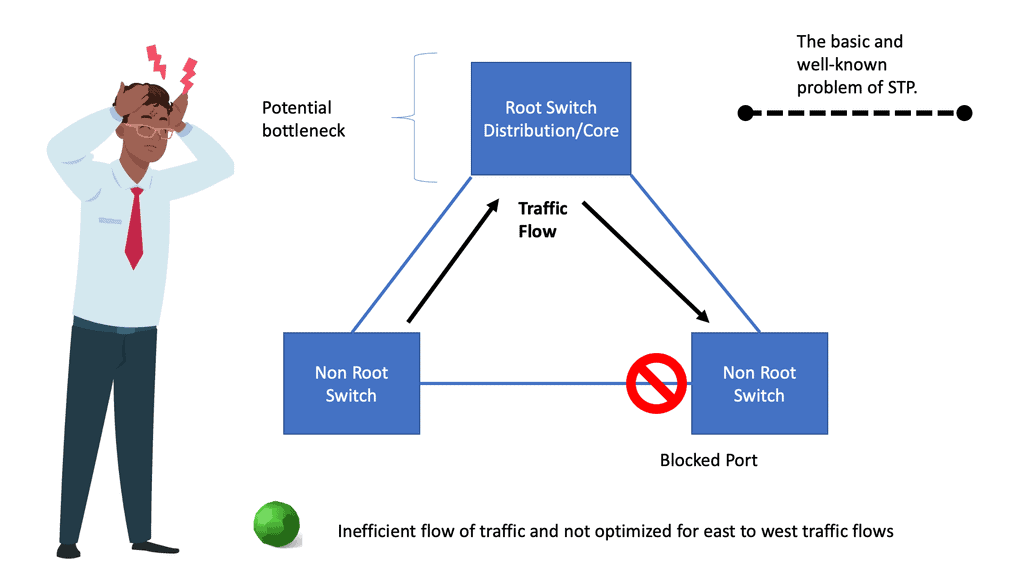

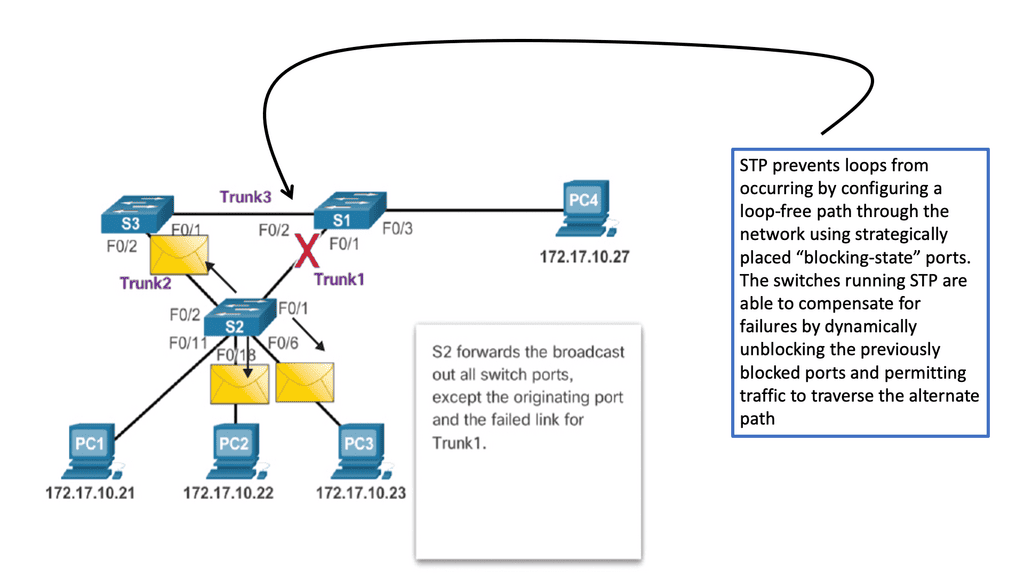

Issues with STP

When a network has redundant paths between switches, the Spanning Tree Protocol (STP) must block one of those paths to prevent loops. While this mechanism is necessary, it can lead to suboptimal network performance. Blocked ports limit bandwidth utilization, which can be particularly problematic in environments with heavy data traffic.

One significant concern with the spanning tree protocol is its slow convergence time. When a network topology changes, the protocol takes time to recompute the spanning tree and reestablish connectivity. During this convergence period, network downtime can occur, disrupting critical operations and causing frustration for users.

What is VXLAN?

VXLAN, or Virtual eXtensible Local-Area Network, is a network virtualization technology documented by the Internet Engineering Task Force (IETF) in RFC 7348. It is designed for multi-tenant networks, which allow multiple organizations to share a physical network without accessing each other's traffic.

A VXLAN can be compared to an individual apartment in an apartment building: each apartment is a separate, private dwelling within a shared physical structure, just as each VXLAN is a discrete, private network segment within a shared physical infrastructure.

With VXLANs, physical networks can be segmented into 16 million logical networks. VXLAN encapsulates Layer 2 Ethernet frames in User Datagram Protocol (UDP) packets that carry a VXLAN header. Combining VXLAN with Ethernet virtual private networks (EVPNs), which transport Ethernet traffic over WAN protocols, allows Layer 2 networks to be extended across Layer 3 IP or MPLS networks.

**Benefits of VXLAN:**

– Scalability: VXLAN allows creating up to 16 million logical networks, providing the scalability required for large-scale virtualized environments.

– Network Segmentation: By leveraging VXLAN, organizations can segment their networks into virtual segments, enhancing security and isolating traffic between applications or user groups.

– Flexibility and Mobility: VXLAN enables the movement of VMs across physical servers and data centers without the need to reconfigure network settings. This flexibility is crucial for workload mobility in dynamic environments.

– Interoperability: VXLAN is an industry-standard protocol supported by various networking vendors, ensuring compatibility across different network devices and platforms.

**Use Cases for VXLAN**

– Data Center Interconnect (DCI): VXLAN allows organizations to interconnect multiple data centers, enabling seamless workload migration, disaster recovery, and workload balancing across different locations.

– Multi-Tenant Environments: VXLAN enables service providers to offer virtualized network services to multiple tenants securely and in isolation. This is particularly useful in cloud computing environments.

– Network Virtualization: VXLAN plays a crucial role in network virtualization, allowing organizations to create virtual networks independent of the underlying physical infrastructure. This enables greater flexibility and agility in managing network resources.

**VXLAN vs. GRE**

VXLAN, an overlay network technology, is designed to address the limitations of traditional VLANs. It enables the creation of virtual networks over an existing Layer 3 infrastructure, allowing for more flexible and scalable network deployments. VXLAN operates by encapsulating Layer 2 Ethernet frames within UDP packets, extending Layer 2 domains across Layer 3 boundaries.

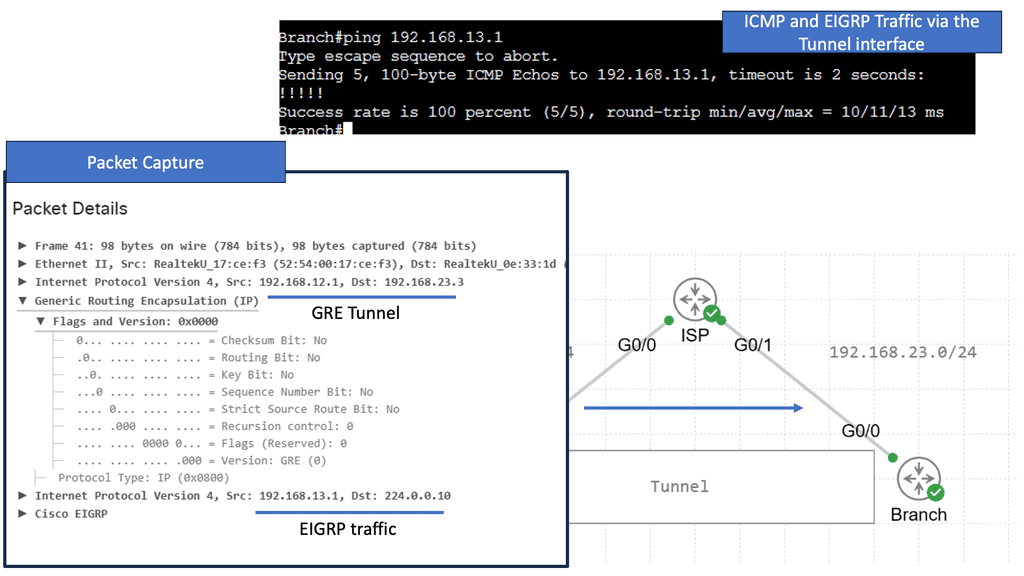

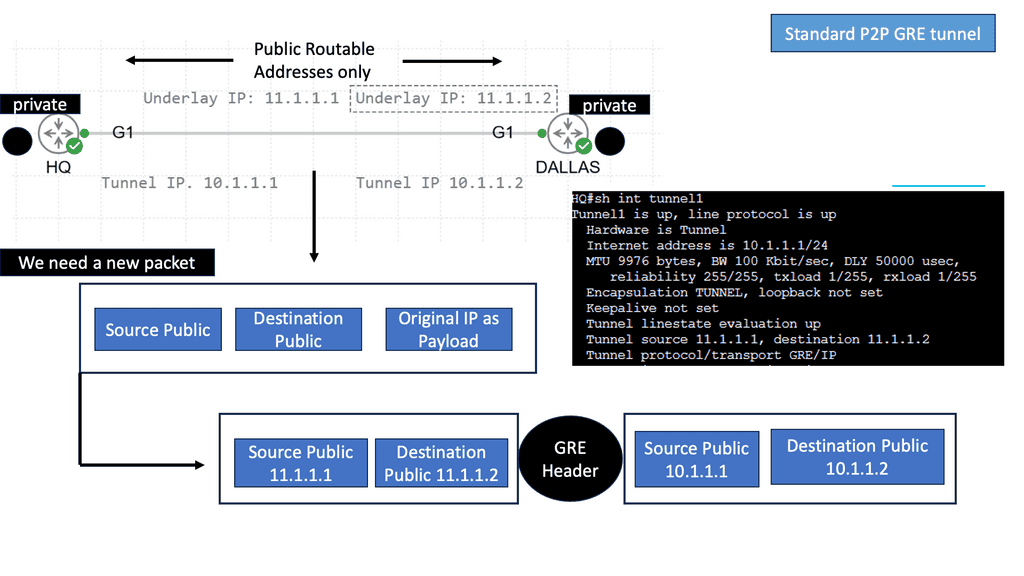

GRE, on the other hand, is a simple IP packet encapsulation protocol. It provides a mechanism for encapsulating arbitrary protocols over an IP network and is widely used for creating point-to-point tunnels. GRE encapsulates the payload packets within IP packets, making it a versatile option for connecting remote networks. GRE provides no encryption on its own, so it is often combined with IPsec when the tunnel must be secure.

Point-to-point GRE networks serve as a foundational element in modern networking. They allow for encapsulation and efficient transmission of various protocols over an IP network. Point-to-point GRE networks enable seamless communication and data transfer by establishing a direct virtual link between two endpoints.

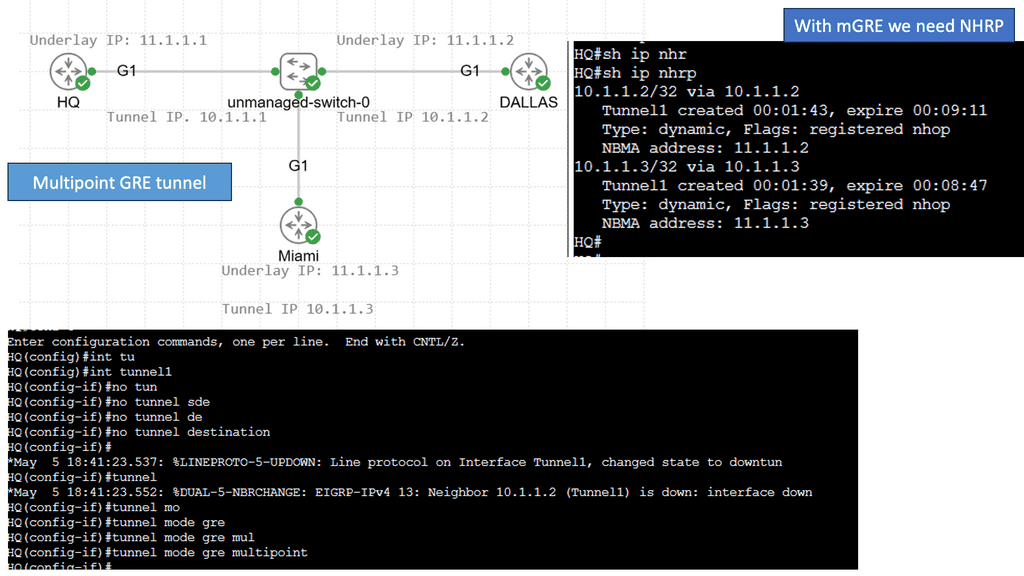

Understanding mGRE

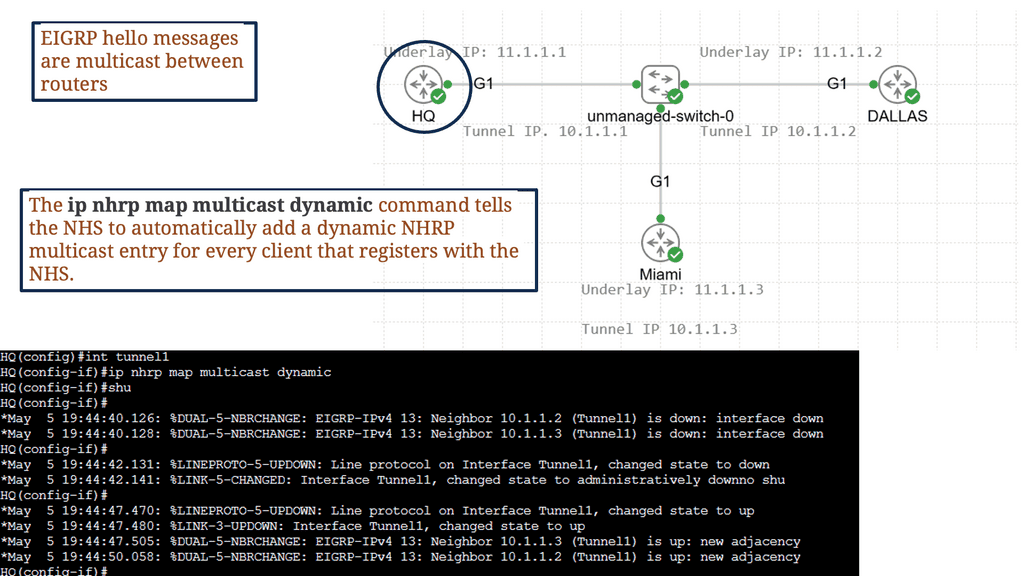

Multipoint GRE (mGRE) serves as the foundation for building Dynamic Multipoint VPN (DMVPN) networks. It allows multiple sites to communicate with each other over a shared public network infrastructure while maintaining security and scalability. By utilizing a single mGRE tunnel interface on a central hub router, multiple spoke routers can dynamically establish and tear down tunnels, enabling seamless communication across the network.

The utilization of mGRE within DMVPN offers several key advantages. First, it simplifies network configuration by eliminating the need for point-to-point tunnels between each spoke router. Second, mGRE provides scalability, allowing for the dynamic addition or removal of spoke routers without impacting the overall network infrastructure. Third, mGRE enhances network resiliency by supporting multiple paths and providing load-balancing mechanisms.

Key VXLAN advantages

Because VXLAN traffic is encapsulated inside UDP packets, it can run on any network that can carry UDP. The only requirement is that the underlay forward UDP datagrams between the encapsulating VTEP and the decapsulating VTEP, no matter how physically or geographically far apart they are.

VXLAN and EVPN enable operators to create virtual networks from physical ports on any Layer 3 network switch that supports the standard. For example, grouping a port on switch A, two ports on switch B, and a port on switch C into one segment creates a virtual network that appears to all connected devices as a single physical network. Devices in this virtual network cannot see the VXLAN encapsulation or the underlying network fabric.

**Problems that VXLAN solves**

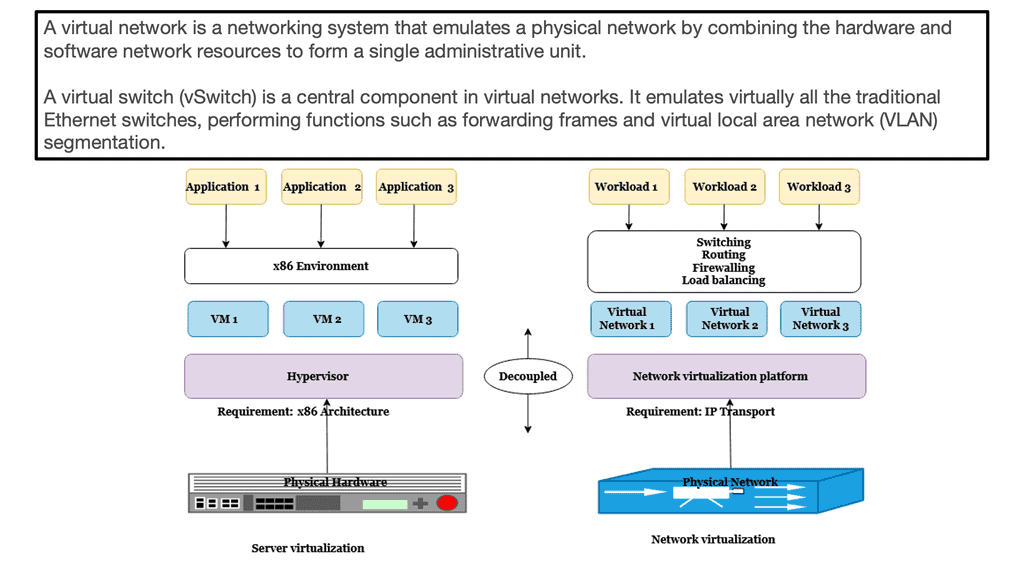

Just as server virtualization increased agility and flexibility for compute, decoupling virtual networks from physical infrastructure does the same for networking. Network operators can therefore scale their infrastructure rapidly and economically to meet growing demand while securely sharing a single physical network. For privacy and security reasons, networks are segmented to prevent one tenant from seeing or accessing another tenant's traffic.

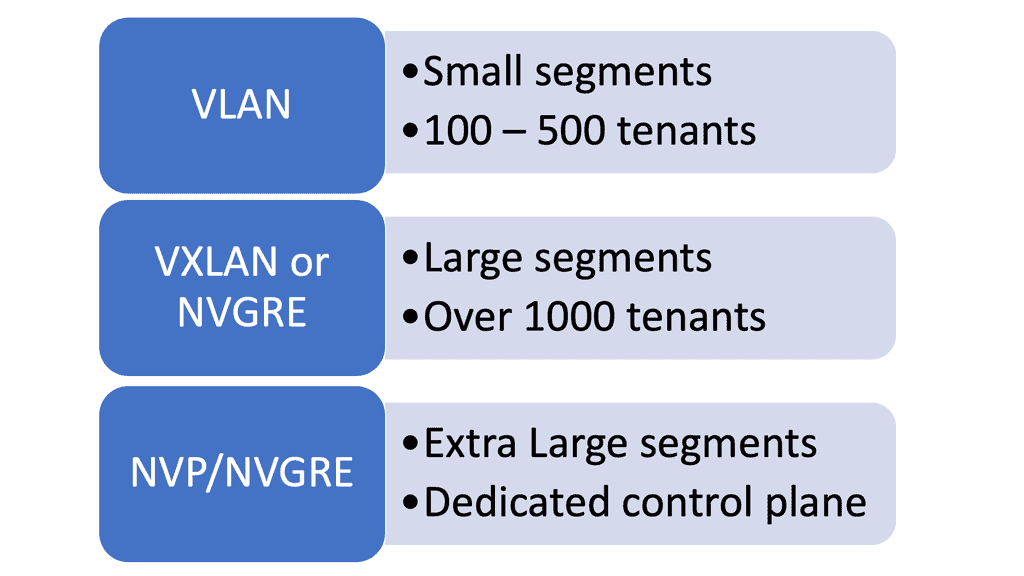

VXLANs segment traffic much like traditional virtual LANs (VLANs), but they overcome the scaling limitations associated with VLANs:

- Up to 16 million VXLANs can be created in an administrative domain, compared to 4094 traditional VLANs. Cloud and service providers can segment networks using VXLANs to support many tenants.

- A VXLAN can create network segments that span different data centers. In traditional VLAN networks, broadcast domains are created by tagging traffic with VLAN IDs, but once a packet reaches a router, the VLAN tag is removed, so a VLAN cannot extend beyond its Layer 2 network. Some use cases, such as virtual machine migration, require hosts to stay in the same Layer 2 segment without crossing a Layer 3 boundary. VXLAN meets this need by encapsulating frames in UDP/IP packets that can be routed. A virtual overlay network can therefore extend as far as the physical Layer 3 routed network reaches; only the VTEPs at the edges must support VXLAN, while the routers in between forward the packets as ordinary IP/UDP traffic. Servers connected to the overlay remain in the same Layer 2 segment, even though the UDP packets may have transited one or more routers.

- Providing Layer 2 segmentation on top of an underlying Layer 3 network supports many network segments and keeps each Layer 2 network small, even when its members are far apart. Smaller Layer 2 networks help prevent MAC table overflows on switches.

Primary VXLAN applications

Service providers and cloud providers deploy VXLAN for obvious reasons: they have many tenants or customers, and they must separate one customer's traffic from another's for legal, privacy, and ethical reasons.

In enterprise environments, tenants may be users, departments, or other groups of devices segmented for security reasons. For Internet of Things (IoT) devices such as data center environmental sensors, isolating IoT network traffic from production network applications is a good security practice.

VXLAN has been widely adopted and is now used in many large enterprise networks for virtualization and cloud computing. It provides:

- A secure and efficient way to create virtual networks.

- Multi-tenant segmentation.

- Efficient routing.

- Hardware-agnostic capabilities.

With its widespread adoption, VXLAN has become an essential technology for network virtualization.

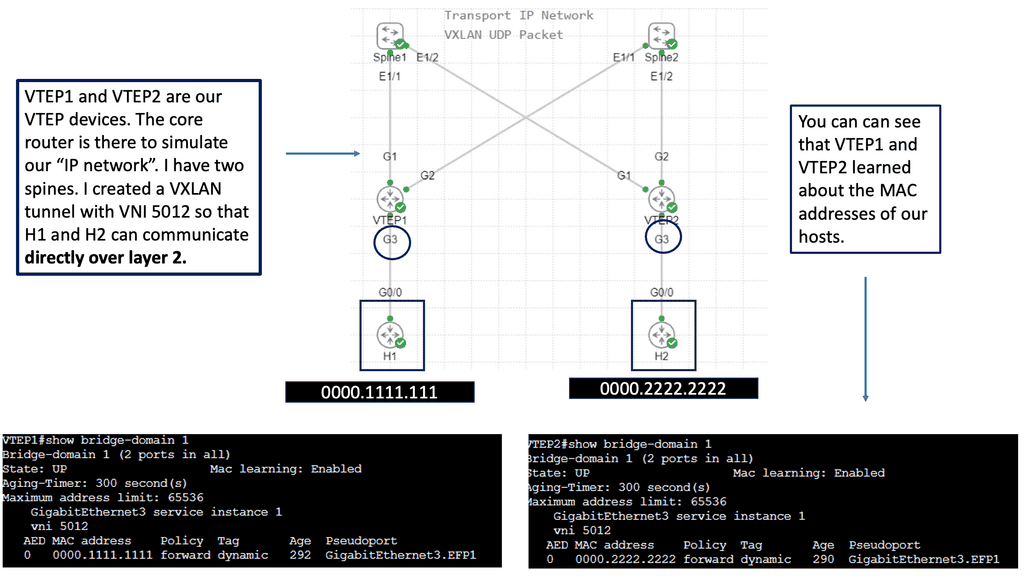

Example: VXLAN Flood and Learn

Understanding VXLAN Flood and Learn

VXLAN flood and learn is how VXLAN handles broadcast, unknown unicast, and multicast (BUM) traffic without a dedicated control plane. A VTEP sends BUM traffic for a VNI to the IP multicast group mapped to that VNI, and receiving VTEPs learn which remote VTEP each source MAC address sits behind. By leveraging multicast, VXLAN flood and learn delivers flooded traffic only to the VTEPs that joined the group, and once addresses have been learned, subsequent traffic is sent as unicast.

Proper multicast group management is essential when implementing VXLAN flood and learn with multicast. VXLAN uses multicast groups to distribute unknown traffic efficiently within the network.

VXLAN flood and learn with multicast offers several benefits for data center networks. First, it reduces the flooding of unknown traffic, which helps minimize network congestion and improves overall performance. Additionally, it allows for better scalability by avoiding the need to flood every unknown packet to all VTEPs (VXLAN Tunnel Endpoints). This results in more efficient network utilization and reduced processing overhead.
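The learning behavior described above can be sketched as a toy model in Python. It assumes one IP multicast group per VNI and keys the learned table by (VNI, MAC), which also keeps segments isolated from each other. The class name, the VTEP addresses (from the 192.0.2.0/24 documentation range), and the MAC addresses are hypothetical; the group 239.0.0.10 is the one used in the multicast lab later in this post.

```python
from dataclasses import dataclass, field

BROADCAST = "ff:ff:ff:ff:ff:ff"


@dataclass
class FloodAndLearnVtep:
    """Toy VTEP doing data-plane flood and learn (hypothetical model)."""

    ip: str
    vni_to_group: dict[int, str]  # VNI -> IP multicast group for BUM traffic
    # (VNI, inner MAC) -> IP of the remote VTEP that MAC sits behind
    mac_table: dict[tuple[int, str], str] = field(default_factory=dict)

    def learn(self, vni: int, inner_src_mac: str, outer_src_ip: str) -> None:
        """On decapsulation, record which VTEP sent this source MAC."""
        self.mac_table[(vni, inner_src_mac)] = outer_src_ip

    def outer_destination(self, vni: int, inner_dst_mac: str) -> str:
        """Pick the outer destination IP for a frame in this VNI."""
        if inner_dst_mac != BROADCAST:
            remote = self.mac_table.get((vni, inner_dst_mac))
            if remote is not None:
                return remote  # known unicast: tunnel straight to that VTEP
        # Broadcast, multicast, or not-yet-learned unicast: flood to the group.
        return self.vni_to_group[vni]


leaf_a = FloodAndLearnVtep(ip="192.0.2.1", vni_to_group={6001: "239.0.0.10"})
mac_b = "00:00:00:00:00:02"
print(leaf_a.outer_destination(6001, mac_b))  # 239.0.0.10 (unknown, flooded)
leaf_a.learn(6001, mac_b, outer_src_ip="192.0.2.2")
print(leaf_a.outer_destination(6001, mac_b))  # 192.0.2.2 (learned)
```

The first frame to an unknown MAC goes to the multicast group; once the reply has been decapsulated and learned, later frames are unicast directly to the remote VTEP.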


What is VXLAN

Traditional Layer 2 networks have issues for the following reasons:

- Spanning tree: Restricts links.

- Limited number of VLANs: Restricts scalability.

- Large MAC address tables: Restrict scalability and mobility.

Spanning-tree avoids loops by blocking redundant links. By blocking connections, we create a loop-free topology and pay for links we can’t use. Although we could switch to a layer three network, some technologies require layer two networking.
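To see what blocking costs, here is a simplified Python sketch that builds a loop-free tree over a set of switch links and reports which links end up blocked. It is not the real Spanning Tree Protocol: it assumes equal link costs, picks the alphabetically lowest switch as root instead of electing a root by bridge ID, and ignores port roles and timers. Even so, it shows the key point: in a triangle of three switches, one of the three links you paid for carries no traffic.

```python
from collections import deque


def simplified_spanning_tree(links: list[tuple[str, str]]) -> tuple[set, set]:
    """Split switch-to-switch links into forwarding and blocked sets.

    Simplifying assumptions: the topology is connected, there is at most one
    link per switch pair, all links cost the same, and the switch with the
    lowest name becomes root. Real STP elects the root by bridge ID and
    breaks ties with path cost, bridge ID, and port ID.
    """
    neighbors: dict[str, list[str]] = {}
    for a, b in links:
        neighbors.setdefault(a, []).append(b)
        neighbors.setdefault(b, []).append(a)

    root = min(neighbors)
    seen = {root}
    forwarding: set[frozenset[str]] = set()
    queue = deque([root])
    while queue:  # breadth-first search finds shortest paths to the root
        switch = queue.popleft()
        for peer in sorted(neighbors[switch]):
            if peer not in seen:
                seen.add(peer)
                forwarding.add(frozenset((switch, peer)))
                queue.append(peer)

    blocked = {frozenset(link) for link in links} - forwarding
    return forwarding, blocked


triangle = [("SW1", "SW2"), ("SW2", "SW3"), ("SW1", "SW3")]
forwarding, blocked = simplified_spanning_tree(triangle)
print(len(forwarding), [sorted(link) for link in blocked])  # 2 [['SW2', 'SW3']]
```

In general, a connected topology of N switches keeps N - 1 forwarding links, so every additional link is redundant capacity that sits idle until a failure.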

VLAN IDs are 12 bits long, so we can create 4094 VLANs (0 and 4095 are reserved). For a large data center, 4094 VLANs may not be enough. Let's say we have a service provider with 500 customers. With 4094 available VLANs, each customer can have only eight.
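The arithmetic behind these numbers, and the equivalent figure for VXLAN's 24-bit VNI, fits in a few lines of Python. The 500-customer figure comes from the example above; the VNI count is the raw 24-bit space before any vendor-specific reserved values.

```python
VLAN_ID_BITS = 12
VNI_BITS = 24

usable_vlans = 2**VLAN_ID_BITS - 2  # VLAN IDs 0 and 4095 are reserved
vni_space = 2**VNI_BITS             # before any vendor-specific reservations
customers = 500

print(usable_vlans, usable_vlans // customers)  # 4094 8
print(vni_space, vni_space // customers)        # 16777216 33554
```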

The Role of Server Virtualization

Server virtualization has dramatically increased the number of MAC addresses our switches must learn. Before server virtualization, there was only one MAC address per switch port. With server virtualization, we can run many virtual machines (VMs) or containers on a single physical server. Each virtual machine is assigned virtual NICs and virtual MAC addresses, so one switch port must learn many MAC addresses.

A data center could connect 24 or 48 physical servers to a top-of-rack (ToR) switch. Since there may be many racks in a data center, each switch must store the MAC addresses of all VMs that communicate. Networks with server virtualization therefore require much larger MAC address tables than networks without it.
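A rough, worst-case estimate shows how quickly this grows. The Python sketch below assumes every host's MAC address ends up in every switch's table; the rack count and the VMs-per-server figure are hypothetical, and only the 48 servers per ToR switch come from the text.

```python
def mac_table_entries(racks: int, servers_per_rack: int, macs_per_server: int) -> int:
    """Worst-case MACs a switch learns if every host talks across all racks."""
    return racks * servers_per_rack * macs_per_server


RACKS = 40             # hypothetical
SERVERS_PER_RACK = 48  # upper end of the 24-48 servers per ToR in the text
VMS_PER_SERVER = 20    # hypothetical, one vNIC (one MAC) per VM

bare_metal = mac_table_entries(RACKS, SERVERS_PER_RACK, macs_per_server=1)
virtualized = mac_table_entries(RACKS, SERVERS_PER_RACK, VMS_PER_SERVER)
print(bare_metal, virtualized)  # 1920 38400
```

The table size scales linearly with VM density, so the same physical topology needs a table twenty times larger under these assumptions.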

Guide: VXLAN

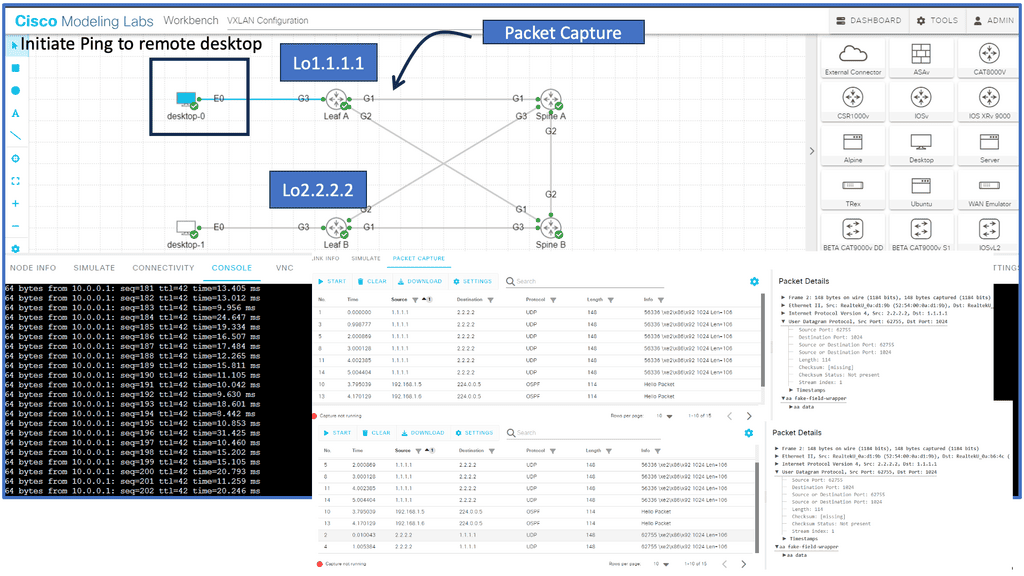

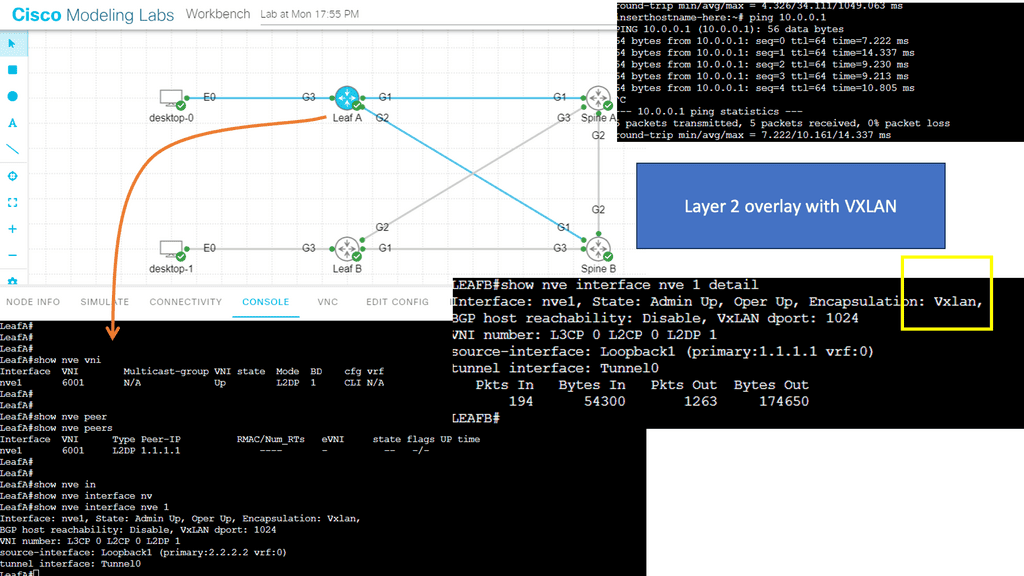

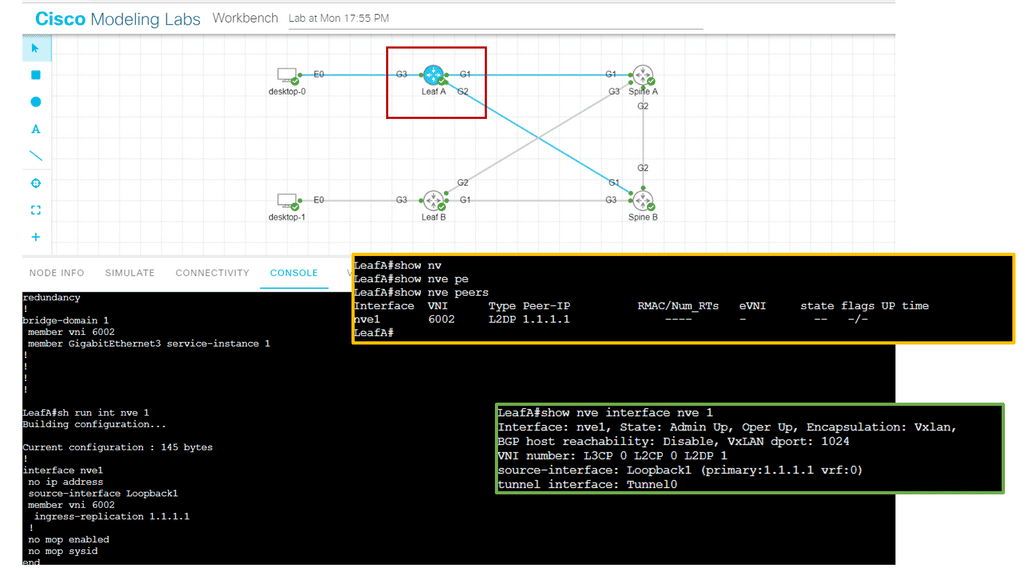

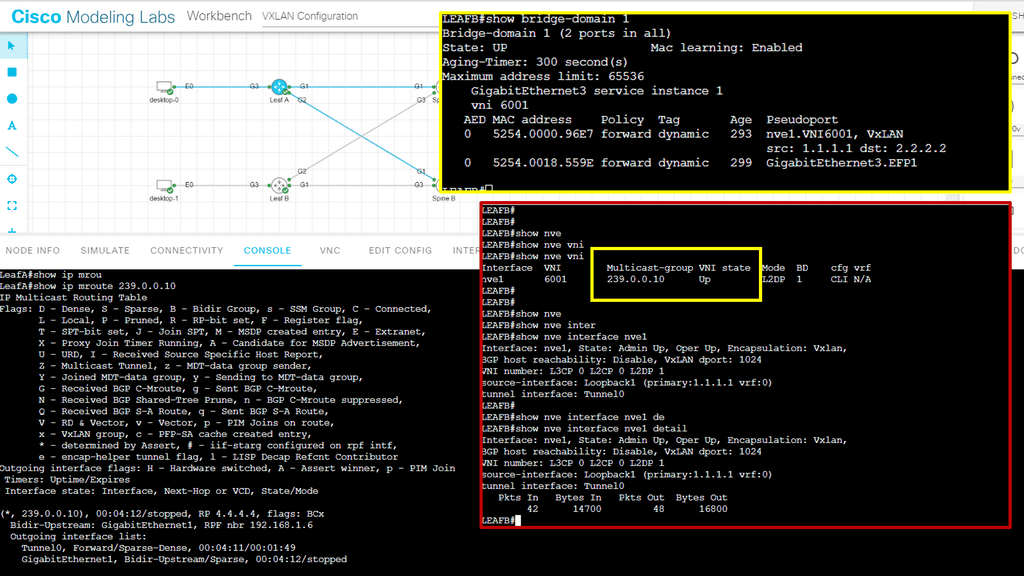

In the following lab, I created a Layer 2 overlay with VXLAN over a Layer 3 core. The bridge domain VNI, 6001, must match on both sides of the overlay tunnel. What is a VNI? The VLAN ID field in an Ethernet frame has only 12 bits, so VLANs cannot meet the isolation requirements of data center networks. The VNI was introduced specifically to solve this problem.

Note: The VNI

A VNI is a user identifier similar to a VLAN ID. A VNI identifies a tenant. VMs with different VNIs cannot communicate at Layer 2. During VXLAN packet encapsulation, a 24-bit VNI is added to a VXLAN packet, enabling VXLAN to isolate many tenants.

In the screenshot below, you will notice that I can ping from Desktop 0 to Desktop 1 even though their IP addresses are not in the routing tables of the core devices, which demonstrates the Layer 2 overlay. Consider VXLAN to be the overlay and the routed Layer 3 core to be the underlay.

In the following screenshot, notice that the VNI has been changed. The VNI must be changed in two places in the configuration, as illustrated below. Once it is changed, the peers are down even though the NVE interface remains up, and the VXLAN Layer 2 overlay is no longer operational.

How does VXLAN work?

VXLAN uses tunneling to encapsulate Layer 2 Ethernet frames within IP packets. Each VXLAN network is identified by a unique 24-bit segment ID, the VXLAN Network Identifier (VNI). The source VTEP encapsulates the original Ethernet frame with a VXLAN header, including the VNI, and outer UDP/IP headers. The encapsulated packet is then sent over the physical IP network to the destination VTEP, which decapsulates it to retrieve the original Ethernet frame and delivers it to the destination VM.

Analysis:

Notice below that a ping is running from Desktop 0 to Desktop 1. The IP addresses assigned to these hosts are 10.0.0.1 and 10.0.0.2. First, notice that the ping is succeeding. When I do a packet capture on link Gi1 connected to Leaf A, we see the encapsulated ICMP echo request and reply.

Everything is encapsulated in UDP port 1024. In my configurations of Leaf A and Leaf B, I explicitly set the VXLAN port to 1024; the IANA-assigned default VXLAN port is 4789.
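The encapsulation step the capture shows can be sketched in Python as below. This is a simplified user-space model, not how Leaf A and Leaf B implement VXLAN: it prepends the VXLAN header to an Ethernet frame and lets the operating system add the outer UDP, IP, and Ethernet headers when the payload is sent on a UDP socket. The function names, the constant names, and the placeholder frame are assumptions; 4789 is the IANA-assigned port, and 1024 is the port configured in this lab.

```python
import socket
import struct

IANA_VXLAN_PORT = 4789  # IANA-assigned VXLAN destination port
LAB_VXLAN_PORT = 1024   # port set explicitly on Leaf A and Leaf B in this lab


def encapsulate(inner_frame: bytes, vni: int) -> bytes:
    """Prepend an 8-byte VXLAN header (I flag set) to an Ethernet frame."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    return struct.pack("!II", 0x08 << 24, vni << 8) + inner_frame


def decapsulate(udp_payload: bytes) -> tuple[int, bytes]:
    """Strip the VXLAN header; return (VNI, original Ethernet frame)."""
    _flags_word, vni_word = struct.unpack("!II", udp_payload[:8])
    return vni_word >> 8, udp_payload[8:]


def send_to_vtep(sock: socket.socket, remote_vtep_ip: str, inner_frame: bytes,
                 vni: int, port: int = IANA_VXLAN_PORT) -> None:
    """Send one encapsulated frame; the OS adds outer UDP, IP, and Ethernet."""
    # Both VTEPs must agree on the destination port. The OS chooses the UDP
    # source port here, whereas VTEPs typically derive it from a hash of
    # inner-frame fields.
    sock.sendto(encapsulate(inner_frame, vni), (remote_vtep_ip, port))


frame = bytes(14) + b"ICMP echo request"  # placeholder Ethernet frame
vni, recovered = decapsulate(encapsulate(frame, 6001))
print(vni, recovered == frame)  # 6001 True
```

To mirror the lab, a caller would create a socket with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) and pass port=LAB_VXLAN_PORT to send_to_vtep; the receiving side must listen on the same port.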


VXLAN and network virtualization

VXLAN is a form of network virtualization. Network virtualization cuts a single physical network into many virtual networks, often called network overlays. Virtualizing a resource allows it to be shared by multiple users. Virtualization provides the illusion that each user has the resource to themselves.

In the case of virtual networks, each user operates as if there were no other users of the network. To preserve this illusion, virtual networks are separated from one another, and packets cannot leak from one virtual network to another.

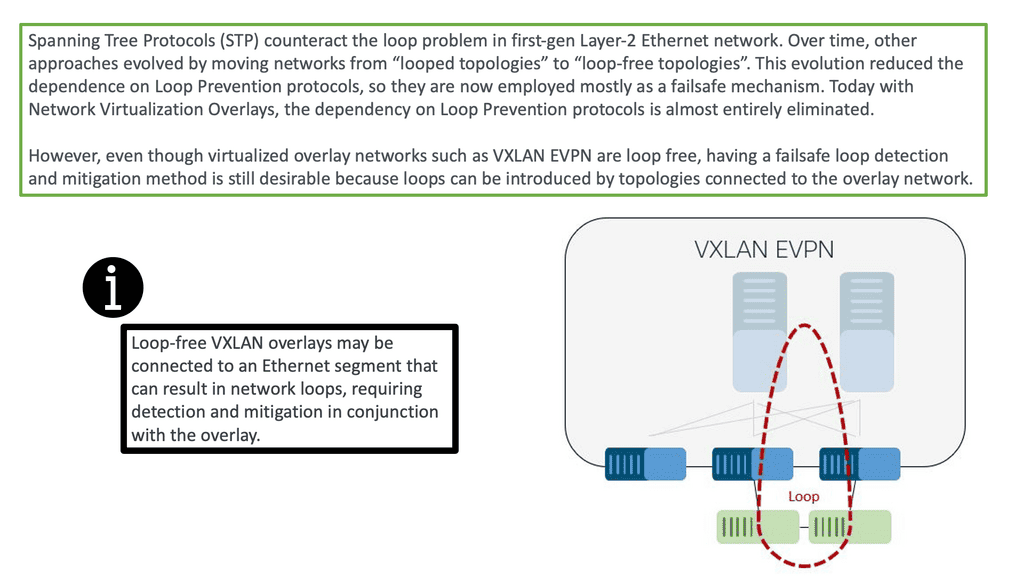

VXLAN Loop Detection and Prevention

Before we dive into the benefits of VXLAN, let us address the basics of loop detection and prevention, a significant driver for using network overlays such as VXLAN. The challenge is that when loops occur, data frames can circulate indefinitely, disrupting network stability and degrading performance.

In addition, loops introduce broadcast radiation, increasing CPU and network bandwidth utilization, which degrades user application access experience. Finally, in multi-site networks, a loop can span multiple data centers, causing disruptions that are difficult to pinpoint. Overlay networking can solve much of this.

VXLAN vs VLAN

First-generation Layer 2 Ethernet networks could not natively detect or mitigate looped topologies, while modern Layer 2 overlays implicitly build loop-free topologies. Therefore, overlays do not need loop detection and mitigation as long as no first-generation Layer 2 network is attached. Essentially, there is no need for a VXLAN spanning tree.

So, one of the differences between VXLAN and VLAN is that a VLAN has a 12-bit VLAN ID (VID), while VXLAN has a 24-bit VXLAN Network Identifier (VNI), allowing you to create up to 16 million segments. VXLAN offers tremendous scale and stable, loop-free networking, and it is a foundation technology in Cisco ACI.

VXLAN and Data Center Interconnect

VXLAN has revolutionized data center interconnect by providing a scalable, flexible, and efficient solution for extending Layer 2 networks. Its ability to enable network segmentation, multi-tenancy support, and seamless mobility makes it a valuable technology for modern businesses.

However, careful planning, consideration of network infrastructure, and security measures are essential for successful implementation. By harnessing the power of VXLAN, organizations can achieve a more agile, scalable, and interconnected data center environment.

VXLAN vs VLAN: The VXLAN Benefits Drive Adoption

Introduced by Cisco and VMware and now heavily used in open networking, VXLAN stands for Virtual eXtensible Local Area Network. It is perhaps the most popular overlay technology for IP-based SDN data centers and is used extensively with ACI networks.

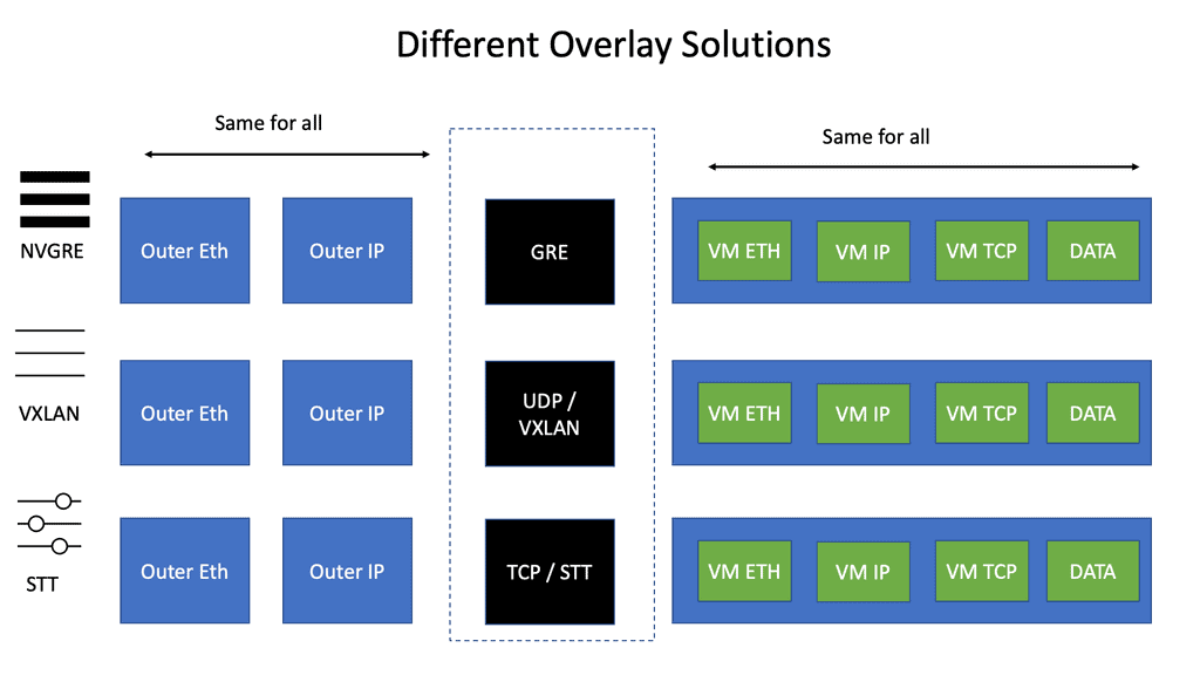

VXLAN was explicitly designed for Layer 2 over Layer 3 tunneling. Its early competitors, NVGRE and STT, are fading away, and VXLAN is becoming the industry standard. VXLAN brings many advantages, especially in loop prevention, as there is no need for a VXLAN spanning tree.

Today, overlays such as VXLAN almost eliminate the dependency on loop prevention protocols. However, even though virtualized overlay networks such as VXLAN are loop-free, having a failsafe loop detection and mitigation method is still desirable because loops can be introduced by topologies connected to the overlay network.

Loop prevention traditionally started with the Spanning Tree Protocol (STP) to counteract the loop problem in first-generation Layer 2 Ethernet networks. Over time, other approaches evolved, moving networks from “looped topologies” to “loop-free topologies.”

While LAG and MLAG were used, other approaches to building loop-free topologies arose using ECMP at the MAC or IP layer. For example, FabricPath and TRILL are MAC-layer ECMP approaches that emerged in the last decade. More recently, network virtualization overlays that build loop-free topologies on top of IP-layer ECMP have become state-of-the-art.

VXLAN vs VLAN: Why Introduce VXLAN?

- STP issues and scalability constraints: STP is undesirable on a large scale and lacks a proper load-balancing mechanism. A solution was needed to leverage the ECMP capabilities of an IP network while offering extended VLANs across an IP core, i.e., virtual segments across the network core. There is no VXLAN spanning tree.

- Multi-tenancy: Layer 2 networks are capped at 4094 VLANs, which restricts multi-tenancy designs. This is a big difference in the VXLAN vs VLAN debate.

- ToR table scalability: Every ToR switch may need to support several virtual servers, and each virtual server requires several NICs and MAC addresses. This pushes the limits on the ToR switch’s table sizes. In addition, after the ToR tables become full, Layer 2 traffic will be treated as unknown unicast traffic, which will be flooded across the network, causing instability to a previously stable core.

VXLAN use cases

| Use Case | VXLAN Details |
| --- | --- |
| Use Case 1 | Multi-tenant IaaS clouds where you need a large number of segments |
| Use Case 2 | Link virtual to physical servers. This is done via a software or hardware VXLAN-to-VLAN gateway |
| Use Case 3 | HA clusters across failure domains/availability zones |
| Use Case 4 | VXLAN works well over fabrics that have equidistant endpoints |
| Use Case 5 | VXLAN-encapsulated VLAN traffic across availability zones must be rate-limited to prevent broadcast storm propagation across multiple availability zones |

What is VXLAN? The operations

In the VXLAN vs VLAN comparison, VXLAN employs a MAC-over-IP/UDP overlay scheme and extends beyond the traditional boundary of 4000 VLANs. The 12-bit VLAN identifier in traditional VLANs capped scalability within the SDN data center and proved cumbersome if you wanted a VLAN-per-application-segment model. VXLAN extends the 12-bit identifier to 24 bits and allows for 16 million logical endpoints, with each endpoint potentially offering another 4,000 VLANs.

While tunneling does provide Layer 2 adjacency between these logical endpoints and allows VMs to move across boundaries, the main driver for its introduction was to overcome the limit of only 4000 VLANs.

Typically, an application has multiple segments; between segments, you will have firewalling and load-balancing services, and each segment requires a different VLAN. Layer 2 VLAN segments also carry non-routable heartbeats or state information that can't cross an L3 boundary. If you are a cloud provider, you will soon reach the 4000 VLAN limit.

The control plane

The original VXLAN control plane behaves much like classic spanning-tree-based Ethernet bridging: it floods and learns. If a switch receives a packet destined for an unknown address, it forwards the packet to an IP address that delivers the packet to all the other switches.

This IP address is, in turn, mapped to a multicast group across the network. VXLAN doesn't have an explicit control plane and requires IP multicast running in the core for forwarding traffic and host discovery.

**Best practices for enabling IP Multicast in the core**


The requirement for IP multicast in the core made VXLAN undesirable from an operational point of view. Creating the tunnel endpoints is simple, but introducing a protocol like IP multicast to the core just for the tunnel control plane was considered undesirable. As a result, some more recent VXLAN implementations support IP unicast.

VXLAN uses a MAC over IP/UDP solution to eliminate the need for a spanning tree. There is no VXLAN spanning tree. This enables the core to be IP and not run a spanning tree. Many people ask why VXLAN uses UDP. The reason is that the UDP port numbers cause VXLAN to inherit Layer 3 ECMP features. The entropy that enables load balancing across multiple paths is embedded into the UDP source port of the overlay header.
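The entropy idea can be sketched in Python. Following the recommendation in RFC 7348, the VTEP hashes fields of the inner frame into a UDP source port in the 49152-65535 range, so each inner flow keeps a stable port (and therefore a stable path), while different flows between the same pair of VTEPs can hash onto different ECMP paths. CRC32, the choice of inner fields, and the ecmp_next_hop helper standing in for an underlay router are illustrative assumptions; real switches use their own hash functions.

```python
import zlib

SRC_PORT_LOW, SRC_PORT_HIGH = 49152, 65535  # range recommended by RFC 7348


def vxlan_source_port(inner_src_mac: bytes, inner_dst_mac: bytes,
                      inner_ethertype: int) -> int:
    """Map inner-frame fields to an outer UDP source port.

    CRC32 is an illustrative hash; real VTEPs use their own hash and often
    include inner IP addresses and ports as well.
    """
    key = inner_src_mac + inner_dst_mac + inner_ethertype.to_bytes(2, "big")
    span = SRC_PORT_HIGH - SRC_PORT_LOW + 1
    return SRC_PORT_LOW + zlib.crc32(key) % span


def ecmp_next_hop(src_ip: str, dst_ip: str, src_port: int, dst_port: int,
                  n_paths: int) -> int:
    """Hypothetical underlay router: hash the outer 5-tuple to pick a path."""
    key = f"{src_ip}|{dst_ip}|17|{src_port}|{dst_port}".encode()  # 17 = UDP
    return zlib.crc32(key) % n_paths


mac_a, mac_b, mac_c = (bytes.fromhex(h) for h in
                       ("000000000001", "000000000002", "000000000003"))
for dst in (mac_b, mac_c):
    port = vxlan_source_port(mac_a, dst, 0x0800)  # 0x0800 = IPv4 EtherType
    path = ecmp_next_hop("192.0.2.1", "192.0.2.2", port, 4789, n_paths=4)
    print(dst.hex(), port, path)
```

Because the outer IP addresses and destination port are the same for every flow between two VTEPs, the source port is the only field left to vary, which is why underlay ECMP hashing depends on it.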

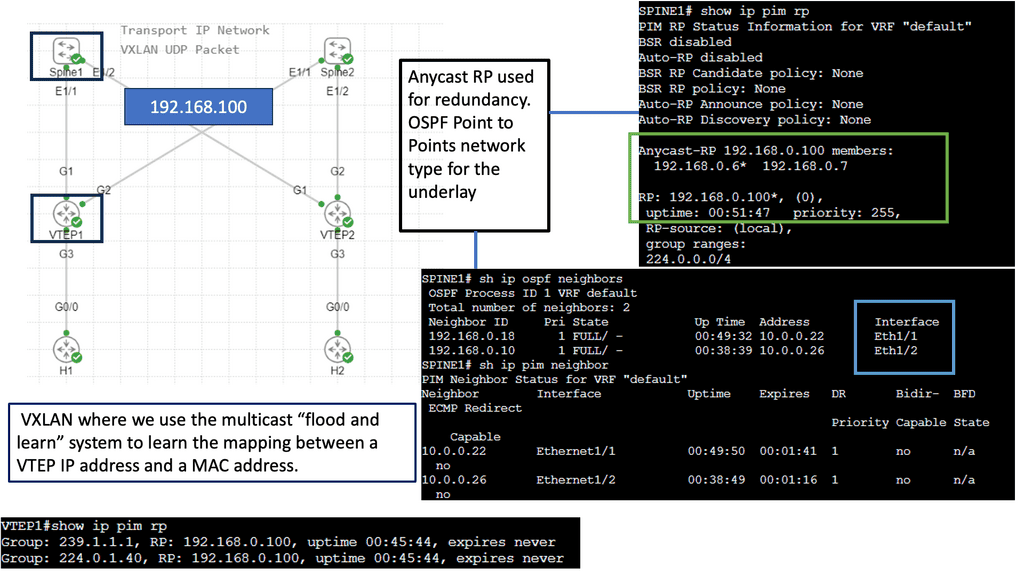

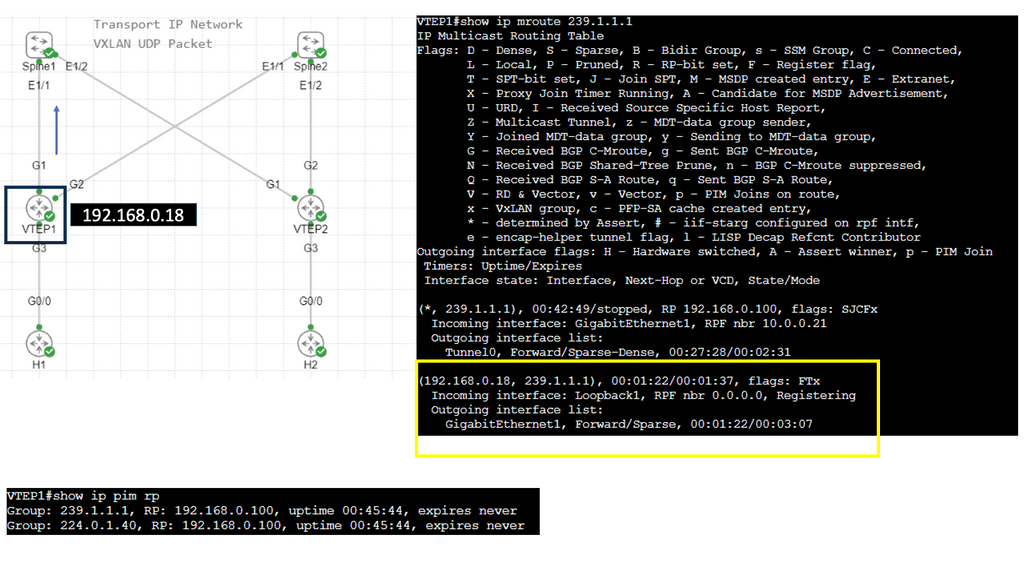

2nd Lab Guide: Multicast VXLAN

In this lab guide, we will look at VXLAN multicast mode. Multicast mode requires both unicast and multicast connectivity between sites. As in the previous lab, this configuration uses OSPF to provide unicast connectivity, and bidirectional Protocol Independent Multicast (PIM) now additionally provides multicast connectivity.

This means you still need a multicast-enabled core: multicast must be enabled on the core devices.

In other words, we are not tunneling multicast over an IPv4 core that lacks multicast support. I have multicast enabled on all Layer 3 interfaces, and the mroute table is populated on all Layer 3 routers. The show ip mroute command displays this multicast routing state, and the show nve vni command shows multicast group 239.0.0.10 with a state of UP.

VXLAN benefits and stability

The underlying network's control plane impacts the stability of VXLAN and the applications running within it. For example, if the underlying IP network cannot converge quickly enough, VXLAN packets may be dropped, and an application cache timeout may be triggered.

The rate of change in the underlying network significantly impacts the stability of the tunnels, yet the rate of change of the tunnels does not affect the underlying control plane. This is similar to how the stability of an MPLS/VPN overlay depends on the core's IGP.

| VXLAN Points | VXLAN benefits | VXLAN drawbacks |
| --- | --- | --- |
| Point 1 | Runs over IP transport | No control plane |
| Point 2 | Offers a large number of logical endpoints | Needs IP multicast*** |
| Point 3 | Reduced flooding scope | No IGMP snooping (yet) |
| Point 4 | Eliminates STP | No PVLAN support |
| Point 5 | Easily integrated over existing core | Requires jumbo frames in the core (50 bytes) |
| Point 6 | Minimal host-to-network integration | No built-in security features** |
| Point 7 | | Not a DCI solution (no ARP reduction, no first-hop gateway localization, no inbound traffic steering, i.e., LISP) |

** VXLAN has no built-in security features. Anyone who gains access to the core network can insert traffic into segments. The VXLAN transport network must therefore be secured, because existing firewall and intrusion prevention system (IPS) equipment cannot see inside VXLAN traffic.

*** Recent versions support unicast VXLAN, for example, Nexus 1000V release 4.2(1)SV2(2.1).
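The 50 bytes in the jumbo-frame row is the sum of the headers VXLAN adds: outer Ethernet (14), outer IPv4 (20), UDP (8), and VXLAN (8). The Python sketch below reproduces that figure and derives the smallest underlay IP MTU that carries a standard 1500-byte inner payload without fragmentation. It assumes an IPv4 underlay with no IP options and an untagged outer frame; an IPv6 underlay adds another 20 bytes.

```python
OUTER_ETHERNET = 14  # outer MAC header, no 802.1Q tag
OUTER_IPV4 = 20      # outer IPv4 header without options (IPv6 would be 40)
OUTER_UDP = 8
VXLAN_HEADER = 8
INNER_ETHERNET = 14  # the original frame's MAC header, now carried as payload


def vxlan_overhead() -> int:
    """Bytes VXLAN adds on the wire to each original Ethernet frame."""
    return OUTER_ETHERNET + OUTER_IPV4 + OUTER_UDP + VXLAN_HEADER


def required_underlay_ip_mtu(inner_ip_mtu: int = 1500, inner_dot1q: bool = False) -> int:
    """Smallest underlay IP MTU that carries a full-size inner frame unfragmented."""
    inner_frame = INNER_ETHERNET + (4 if inner_dot1q else 0) + inner_ip_mtu
    return OUTER_IPV4 + OUTER_UDP + VXLAN_HEADER + inner_frame


print(vxlan_overhead())                            # 50
print(required_underlay_ip_mtu())                  # 1550
print(required_underlay_ip_mtu(inner_dot1q=True))  # 1554
```

In practice, operators often configure an underlay MTU well above this minimum so that tagged inner frames and future header changes still fit.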

Updated: VXLAN enhancements

MAC distribution mode is an enhancement to VXLAN that prevents unknown unicast flooding and eliminates data plane MAC address learning. Traditionally, this was done by flooding to locate an unknown end host, but it has now been replaced with a control plane solution.

During VM startup, the Virtual Supervisor Module (VSM, the control plane) collects the list of MAC addresses and distributes the MAC-to-VTEP mappings to all Virtual Ethernet Modules (VEMs) participating in a VXLAN segment. This technique makes VXLAN more efficient by using unicast more intelligently, similar to Nicira and VMware NVP.

ARP termination works by giving the VSM controller all the ARP and MAC information. This enables the VSM to proxy and respond locally to ARP requests without sending a broadcast. Because around 90% of broadcast traffic is ARP requests (ARP replies are unicast), this significantly reduces broadcast traffic on the network.
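The two enhancements can be modeled together in a short Python sketch: a controller learns IP, MAC, and VTEP bindings when VMs start, distributes the MAC-to-VTEP mappings, and answers ARP requests from its table, falling back to flooding only for unknown targets. This is a hypothetical model of the behavior described above, not the Nexus 1000V VSM implementation; the class and method names are assumptions, and the 10.0.0.x host addresses reuse the ones from the first lab.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SegmentController:
    """Toy stand-in for a VSM-style controller for one VXLAN segment.

    Hypothetical model: bindings are registered at VM startup, pushed to edge
    switches as MAC-to-VTEP mappings, and used to answer ARP locally.
    """

    mac_to_vtep: dict[str, str] = field(default_factory=dict)
    ip_to_mac: dict[str, str] = field(default_factory=dict)

    def register_vm(self, ip: str, mac: str, vtep_ip: str) -> None:
        self.mac_to_vtep[mac] = vtep_ip
        self.ip_to_mac[ip] = mac

    def mac_distribution(self) -> dict[str, str]:
        """Copy of the MAC-to-VTEP mappings sent to every edge switch."""
        return dict(self.mac_to_vtep)

    def arp_reply(self, target_ip: str) -> Optional[str]:
        """Proxy answer for an ARP request, or None if it must be flooded."""
        return self.ip_to_mac.get(target_ip)


ctrl = SegmentController()
ctrl.register_vm("10.0.0.2", "00:00:00:00:00:02", vtep_ip="192.0.2.2")
print(ctrl.mac_distribution())     # {'00:00:00:00:00:02': '192.0.2.2'}
print(ctrl.arp_reply("10.0.0.2"))  # 00:00:00:00:00:02
print(ctrl.arp_reply("10.0.0.9"))  # None
```

Only the unknown target (10.0.0.9) would still trigger a broadcast, which is the reduction in flooding the enhancement aims for.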

Closing Points on VXLAN

VXLAN is a network virtualization technology that encapsulates Ethernet frames within UDP packets, allowing the creation of a virtual network overlay. This overlay extends beyond traditional VLANs, providing more address space and enabling communication across different physical networks. With a 24-bit segment ID, VXLAN supports up to 16 million unique network segments, compared to the 4096 IDs (4094 usable) available with VLANs.

At its core, VXLAN uses tunneling to encapsulate Layer 2 frames within Layer 3 packets. This process involves two key components: the VXLAN Tunnel Endpoint (VTEP) and the VXLAN header. VTEPs are responsible for encapsulating and decapsulating the Ethernet frames. They also handle the communication between different network segments, ensuring seamless data transmission across the network.

The adoption of VXLAN brings several advantages to network management:

1. **Scalability**: VXLAN’s ability to support millions of network segments allows for unprecedented scalability, making it ideal for large-scale data centers.

2. **Flexibility**: By decoupling the network overlay from the physical infrastructure, VXLAN enables flexible network designs that can adapt to changing business needs.

3. **Improved Resource Utilization**: Because the underlay is a routed IP network, VXLAN traffic can be spread across multiple equal-cost paths (ECMP) instead of relying on Spanning Tree, which blocks redundant links. This improves link utilization and reduces congestion.
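Multipath spreading works because RFC 7348 recommends deriving the outer UDP source port from a hash of the inner frame's headers, using the dynamic/private range 49152 to 65535. Underlay routers that hash the outer 5-tuple then send different inner flows down different paths while keeping each flow on one path. The sketch assumes CRC-32 over the 14-byte inner Ethernet header; real VTEPs choose their own hash and often include inner IP and L4 fields as well.

```python
import zlib

# RFC 7348 recommends a source port from the dynamic/private range.
EPHEMERAL_LOW, EPHEMERAL_HIGH = 49152, 65535


def vxlan_source_port(inner_frame: bytes) -> int:
    """Derive the outer UDP source port from the inner Ethernet header.

    The same inner header always yields the same port (no reordering within
    a flow), while different flows tend to get different ports (ECMP entropy).
    """
    inner_l2_header = inner_frame[:14]   # dst MAC, src MAC, EtherType
    h = zlib.crc32(inner_l2_header)      # hash choice is an assumption
    span = EPHEMERAL_HIGH - EPHEMERAL_LOW + 1
    return EPHEMERAL_LOW + h % span
```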

VXLAN is particularly beneficial in scenarios where network scalability and flexibility are paramount. Common use cases include:

– **Data Center Interconnect**: Connecting multiple data centers over a single, unified network fabric.

– **Multi-Tenant Environments**: Providing isolated network segments for different tenants in cloud environments.

– **Disaster Recovery**: Facilitating seamless failover and recovery by extending network segments across geographically dispersed locations.

Summary: What is VXLAN

VXLAN, short for Virtual Extensible LAN, is a network virtualization technology that has gained significant popularity in data centers. This summary recaps VXLAN’s definition, operation, and benefits.

Understanding VXLAN Basics

VXLAN is an encapsulation protocol that enables the creation of virtual networks over existing Layer 3 infrastructures. It extends Layer 2 segments over Layer 3 networks, allowing for greater flexibility and scalability. By encapsulating Layer 2 frames within Layer 3 packets, VXLAN enables efficient communication between virtual machines (VMs) across physical hosts or data centers.

VXLAN Operation and Encapsulation

To understand how VXLAN works, we must look at its operation and encapsulation process. When a VM sends a Layer 2 frame, the source VTEP (for example, the hypervisor’s virtual switch) encapsulates it by adding a VXLAN header followed by outer UDP, IP, and Ethernet headers. The VXLAN header carries the VXLAN Network Identifier (VNI), which identifies the virtual network to which the frame belongs. The packet is then routed across the underlying Layer 3 network to the destination VTEP, which decapsulates it and delivers the original frame to the appropriate VM.
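Each layer added during encapsulation costs bytes on the wire. The arithmetic below assumes an IPv4 underlay with no outer 802.1Q tag and an untagged inner frame. It totals the encapsulation overhead and the smallest underlay IP MTU that carries a full-size guest frame without fragmentation. The function name is illustrative.

```python
# Header sizes in bytes (IPv4 underlay, no VLAN tags assumed).
OUTER_ETHERNET = 14
OUTER_IPV4 = 20
OUTER_UDP = 8
VXLAN_HEADER = 8
INNER_ETHERNET = 14  # the original frame's header travels inside the tunnel


def required_underlay_ip_mtu(inner_ip_mtu: int = 1500) -> int:
    """Smallest underlay IP MTU that carries a full inner frame unfragmented."""
    inner_frame = INNER_ETHERNET + inner_ip_mtu
    return OUTER_IPV4 + OUTER_UDP + VXLAN_HEADER + inner_frame


overhead = OUTER_ETHERNET + OUTER_IPV4 + OUTER_UDP + VXLAN_HEADER
print(f"Encapsulation overhead on the wire: {overhead} bytes")                # 50
print(f"Underlay IP MTU for 1500-byte guests: {required_underlay_ip_mtu()}")  # 1550
```

With an IPv6 underlay the outer IP header grows to 40 bytes, so the same calculation gives 20 bytes more.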

Benefits and Use Cases of VXLAN

VXLAN offers several benefits that make it an attractive choice for network virtualization. Firstly, it enables the creation of large-scale virtual networks, allowing for seamless VM mobility and workload placement flexibility. VXLAN also overcomes the limitations of traditional VLANs by providing a much larger segment ID space (about 16 million VNIs versus 4,096 VLAN IDs), accommodating the ever-growing number of virtual machines in modern data centers. Additionally, VXLAN facilitates network virtualization across geographically dispersed data centers, making it well suited to multi-site deployments and disaster recovery scenarios.

VXLAN vs. Other Network Virtualization Technologies

While VXLAN is widely used, it is essential to understand how it differs from other network virtualization technologies. For instance, VXLAN offers better scalability and flexibility than traditional VLANs. It also provides stronger isolation and segmentation of virtual networks, making it a good fit for multi-tenant environments. Additionally, VXLAN is largely agnostic to the physical network: it needs only IP connectivity between VTEPs, so it can be deployed over existing networks without significant changes, provided the underlay MTU can absorb the encapsulation overhead.

Conclusion:

In conclusion, VXLAN is a powerful network virtualization technology that has changed how virtual networks are created and managed. Its ability to extend Layer 2 networks over Layer 3 infrastructure, together with its scalability, flexibility, and ease of deployment, makes VXLAN a go-to solution for modern data centers. Whether for workload mobility, multi-site implementations, or overcoming VLAN limitations, VXLAN offers a robust and efficient solution. Adopting VXLAN enables organizations to build agile, scalable, and resilient virtual networks.
