Kubernetes PetSets

In the rapidly evolving landscape of container orchestration, Kubernetes has emerged as a powerful tool for managing and scaling applications. While it excels at handling stateless workloads, managing stateful applications has traditionally been more complex. Kubernetes PetSets were introduced to simplify the management of stateful applications.

Stateful applications, unlike their stateless counterparts, require persistent storage and unique network identities. They maintain data and state across restarts, making them crucial for databases, queues, and other similar workloads. However, managing stateful applications in a distributed environment can be challenging, leading to potential data loss and inconsistencies.

Introducing Kubernetes PetSets: Kubernetes PetSets provide a solution for managing stateful applications within a Kubernetes cluster. PetSets ensure that each pod in the set has a unique identity, a stable network hostname, and an ordered deployment. This allows for predictable scaling, rolling updates, and recovery in case of failures. With PetSets, you can manage stateful applications declaratively, in the same way as other Kubernetes resources.

PetSets offer several key features that simplify the management of stateful applications. Firstly, they ensure ordered pod creation and scaling, guaranteeing that pods are created and deleted in a predictable sequence. This is crucial for maintaining data consistency and avoiding race conditions. Secondly, PetSets provide stable network identities, allowing other applications within the cluster to discover and communicate with the pods. Lastly, PetSets support rolling updates and automated recovery, minimizing downtime.

While PetSets simplify the management of stateful applications, it's important to follow best practices. One key practice is to carefully plan and configure storage for your PetSet pods, ensuring that you use appropriate volume types and storage classes. Additionally, monitoring and observability play a crucial role in identifying issues with your PetSets before they affect the application.

Kubernetes PetSets changed how stateful applications are managed within a Kubernetes cluster. PetSets enable developers to focus on building robust and scalable stateful applications without the complexity of manual management. As Kubernetes continues to evolve, the PetSet model, now known as StatefulSets, remains a valuable tool for simplifying the deployment and scaling of stateful workloads.

Matt Conran

Highlights: Kubernetes PetSets

Stateful Applications with Kubernetes PetSets

a) PetSets, introduced in Kubernetes version 1.3, provide a higher-level abstraction for managing stateful applications. Unlike traditional Kubernetes Deployments, which focus on stateless workloads, PetSets offer features like stable network identities, ordered deployment, and ordered scaling that take the application’s stateful nature into account.

b) One key challenge in scaling stateful applications is ensuring stable network identities. PetSets address this by providing stable hostnames and domain names for each replica of the stateful application. This allows clients to consistently connect to the same replica, even when scaling or restarting instances.

c) PetSets enable ordered deployment and scaling of stateful applications. This is crucial when dealing with applications that rely on specific ordering or coordination between instances. With PetSets, you can define a startup order for your replicas, ensuring that each replica is fully operational before the next one starts.
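The ordered startup described in c) can be sketched as a small simulation. This is only an illustration of the gating rule, not the controller's actual code; the function name `ordered_startup` and the `is_ready` callback are hypothetical.

```python
def ordered_startup(set_name, replicas, is_ready):
    """Simulate ordered creation: pod N+1 is only created once pod N is ready.

    `is_ready` is a hypothetical callback standing in for a readiness check.
    Returns the list of pods that were created.
    """
    created = []
    for ordinal in range(replicas):
        pod = f"{set_name}-{ordinal}"
        created.append(pod)
        if not is_ready(pod):
            # Successors are held back until this pod reports ready.
            break
    return created


if __name__ == "__main__":
    # All pods become ready, so all three are created in order.
    print(ordered_startup("db", 3, lambda pod: True))
    # db-1 never becomes ready, so db-2 is never created.
    print(ordered_startup("db", 3, lambda pod: pod != "db-1"))
```

The second call shows why ordering matters: a replica that fails to come up blocks the replicas that depend on it, rather than letting them start against an incomplete cluster.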

Benefits of PetSets

PetSets offer several advantages over traditional Kubernetes deployments:

1. Stable Network Identity: PetSets assign each pod a stable hostname and DNS identity, enabling seamless communication between pods and external services. This stability is essential for applications relying on peer-to-peer communication or distributed databases.

2. Ordered Deployment: PetSets ensure the ordered deployment and scaling of pods. This is particularly useful for applications where the order of creation or scaling matters, such as databases or distributed systems.

3. Persistent Volumes: PetSets facilitate the association of persistent volumes with pods, ensuring data durability and allowing applications to retain their state even if the pods are rescheduled.

4. Rolling Updates: PetSets support rolling updates, enabling you to update your stateful applications without downtime. This process ensures that each pod is gracefully terminated and replaced by a new one with the updated configuration, minimizing service interruptions.
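As a rough sketch of item 4, the snippet below lists the order in which pods would be replaced during a rolling update. It assumes the behaviour documented for StatefulSets, the successor to PetSets, where pods are updated from the highest ordinal down and an optional `partition` value leaves lower ordinals untouched. The function name is hypothetical.

```python
def rolling_update_order(set_name, replicas, partition=0):
    """Return pod names in the order a rolling update would replace them.

    Assumes StatefulSet-style semantics: highest ordinal first, and only
    pods whose ordinal is >= partition are updated.
    """
    return [f"{set_name}-{i}" for i in range(replicas - 1, partition - 1, -1)]


if __name__ == "__main__":
    print(rolling_update_order("web", 3))
    print(rolling_update_order("web", 3, partition=2))
```

Updating one pod at a time in a fixed order is what lets the remaining replicas keep serving while each pod is replaced.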

Understanding Kubernetes PetSet

A) Kubernetes PetSet, renamed StatefulSet in Kubernetes 1.5, is designed to manage stateful applications that require stable network identities and persistent storage. Unlike traditional Kubernetes Deployments, PetSet ensures that each pod in a set has a unique and stable hostname, allowing applications to maintain their identity and communicate effectively with other components in the cluster.

B) PetSet offers several key features that make it a valuable tool for managing stateful applications. One of its primary benefits is the ability to automatically provision and manage persistent volumes for each pod in the set. This ensures data durability and allows applications to recover from pod failures without losing critical data. Additionally, PetSet provides ordered pod creation and termination, allowing for smooth scaling and rolling updates while preserving the application’s state.

C) Kubernetes PetSet finds application in various scenarios where stateful workloads are involved. One common use case is running databases, such as MySQL or PostgreSQL, in a distributed fashion. PetSet ensures that each replica of the database has a stable and unique identity, enabling replication and failover. Other use cases include running distributed file systems, message queues, and other stateful applications that require stable network identities and persistent storage.

D) To effectively utilize Kubernetes PetSet, it’s essential to follow some best practices. Firstly, carefully design your application to ensure it can handle pod failures and rescheduling. Leveraging persistent volumes and configuring appropriate storage classes is crucial for data durability and availability. Additionally, monitoring the health and performance of your PetSet pods and implementing proper scaling strategies will help optimize the overall performance of your stateful application.
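To make the per-pod storage in B) concrete, here is a minimal sketch of how claim names line up with pods. It assumes the naming convention used by StatefulSets, where each claim is named `<claim template>-<pod name>`; the set name `mysql` and template name `data` are illustrative only.

```python
def claim_names(set_name, replicas, claim_templates):
    """Map each pod name to the persistent volume claims it would own.

    Assumes the StatefulSet convention "<template>-<set>-<ordinal>".
    """
    return {
        f"{set_name}-{i}": [f"{template}-{set_name}-{i}" for template in claim_templates]
        for i in range(replicas)
    }


if __name__ == "__main__":
    for pod, claims in claim_names("mysql", 3, ["data"]).items():
        print(pod, claims)
```

Because the claim name is derived from the pod's stable name, a rescheduled `mysql-1` reattaches to the same claim instead of starting with an empty volume.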

PetSets Use Cases

PetSets are ideal for various stateful applications, including databases, distributed systems, and legacy applications that require stable network identities and persistent storage. Some everyday use cases for PetSets include:

1. Running a replicated database cluster, such as MySQL or PostgreSQL, where each pod corresponds to a separate database node.

2. Managing distributed messaging systems, like Apache Kafka or RabbitMQ, where each pod represents a separate broker or message queue.

3. Deploying legacy applications that rely on stable network identities and persistent storage, such as content management systems or enterprise resource planning software.

**Example: Datera and stateful applications**

In Kubernetes, persistent volumes are critical as customers migrate from stateless workloads to stateful applications. PetSets have significantly improved Kubernetes’ support for stateful applications like MySQL, Kafka, Cassandra, and Couchbase. They made it possible to automate the scaling of the “Pets” (applications that need persistent placement and consistent handling) using sequenced provisioning and startup procedures.

The Role of FlexVolume:

Datera, an elastic block storage system for cloud deployments, integrates with Kubernetes using FlexVolume. Based on the first principles of containers, Datera decouples the provisioning of application resources from the underlying physical infrastructure. Clean contracts (i.e., not dependent on physical infrastructure) and declarative formats can eventually make stateful applications portable.

YAML Configurations:

With Datera, Kubernetes allows the underlying application infrastructure to be defined through YAML configurations, which are passed to the storage infrastructure. Datera AppTemplates can automate the scaling of stateful applications in a Kubernetes environment.

**The Role of Kubernetes**

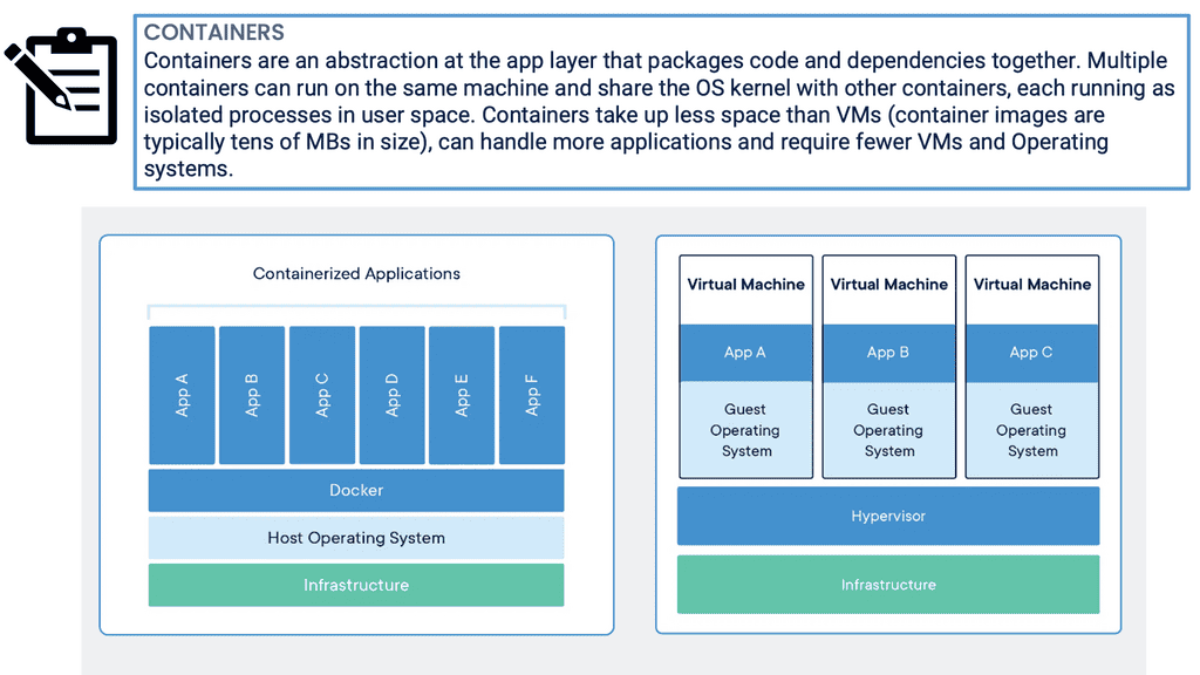

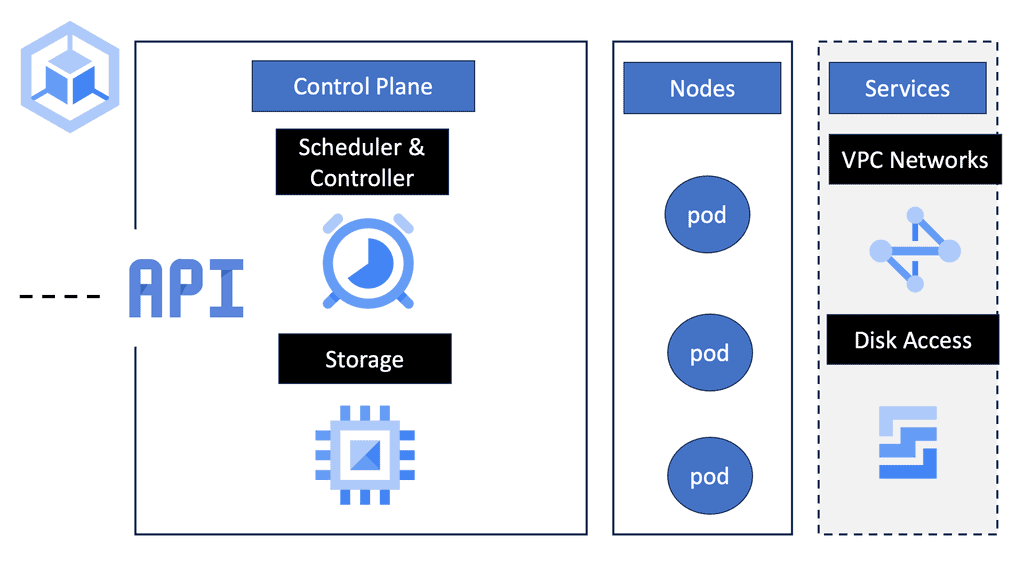

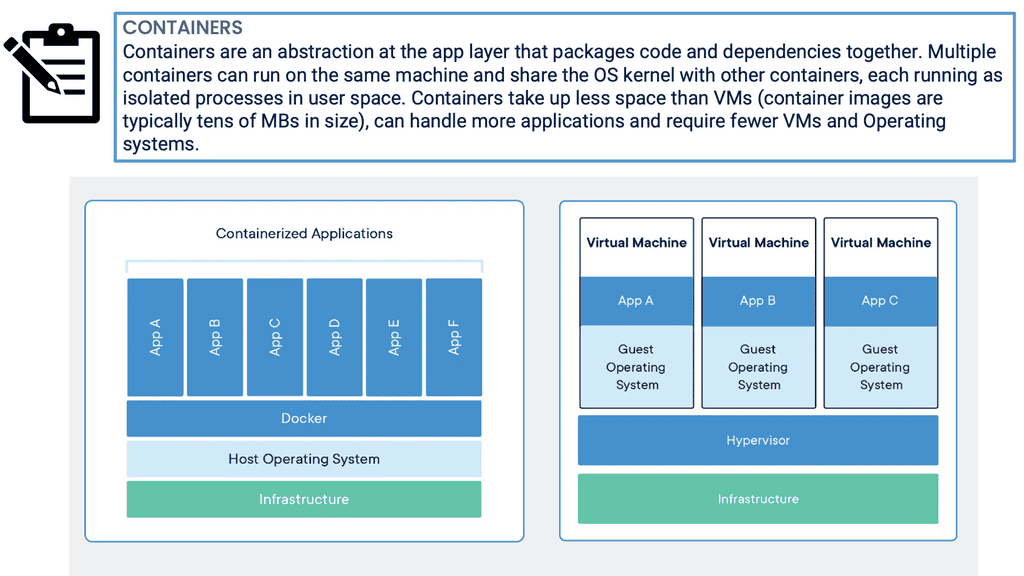

Kubernetes Pets and PetSets are core components of Kubernetes operations. Firstly, Kubernetes is a container orchestration platform that runs and manages containers. It changes the focus of container deployment to an application level, not the machine. The shift of focus point enables an abstraction level and the removal of dependencies between the application and its physical deployment.

**The Role of Decoupling**

This act of decoupling services from the details of low-level physical deployment enables better service management. For anything to scale, you need to provide some abstraction. For container networking, we have seen this in the overlay world with underlay and abstraction enabling networks to support millions of tenants.

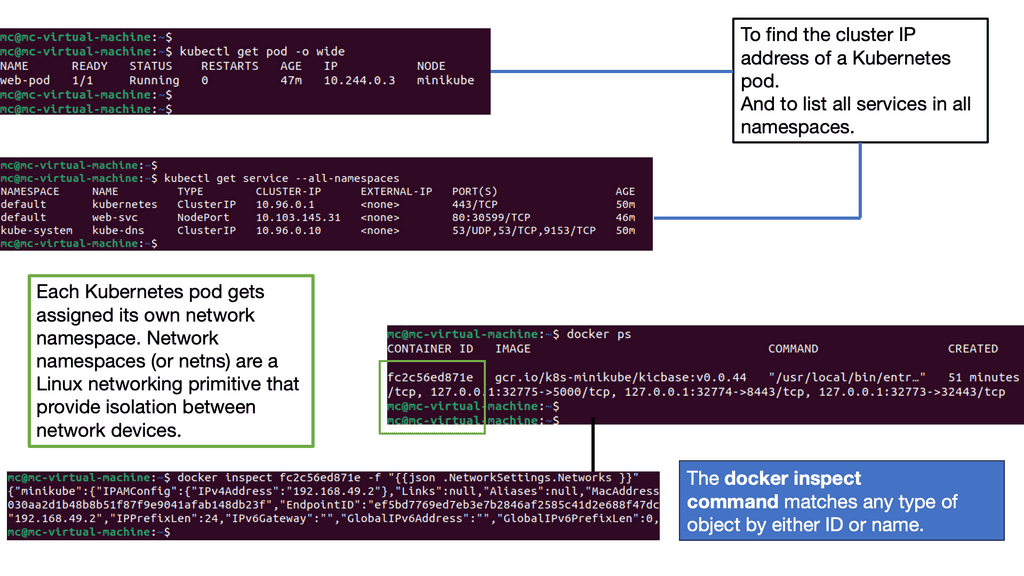

**Kubernetes Networking 101**

Kubernetes networking allows applications to be deployed to a “sea of abstracted computes,” enabling a self-healing orchestrated infrastructure. While this scaling and deployment model has served stateless services well, the base Kubernetes distribution fell short in the stateful world. Much of this has since been addressed, for example by Red Hat products such as OpenShift networking, which adds several network and security constructs that aid stateful application support.

For background information, you may find the following posts useful:

- SASE Model

- Chaos Engineering Kubernetes

- Kubernetes Security Best Practices

- OpenShift SDN

- Hands On Kubernetes

- Kubernetes Namespace

- Container Scheduler

Kubernetes PetSets

Discussing StatefulSets

Kubernetes started providing a resource to manage stateful workloads with the alpha release of PetSets in the 1.3 release. This capability has matured and is now known as StatefulSets. A StatefulSet has some similarities to a ReplicaSet in that it is responsible for managing the lifecycle of a set of Pods, but how it goes about this management has some noteworthy differences. PetSet might seem like an odd name for a Kubernetes resource, and it has since been replaced.

Still, it provides fascinating insights into the Kubernetes community’s thought process for supporting stateful workloads. The fundamental idea is that there are two ways of handling servers: to treat them as pets that require care, feeding, and nurturing or to treat them as cattle to which you don’t develop an attachment or provide much individual attention. If you’re logging into a server regularly to perform maintenance activities, you treat it as a pet.

**Contrasting Application Models**

As applications serve a growing user base around the globe, single cluster and data center solutions are no longer satisfying. Clustered applications and federations enable workloads to spread across multiple locations and container clusters for improved efficiency and scale. You will find contrasting deployment models when you examine the application types and scaling modes (for example, scale-up and scale-out clustering ).

There are substantial differences between deploying and running single applications to applications that operate within a cluster. Different application architectures require different deployment solutions and network identities. For example, a database node requires persistent volumes, or a node within a cluster uses specific elections where identity is essential.

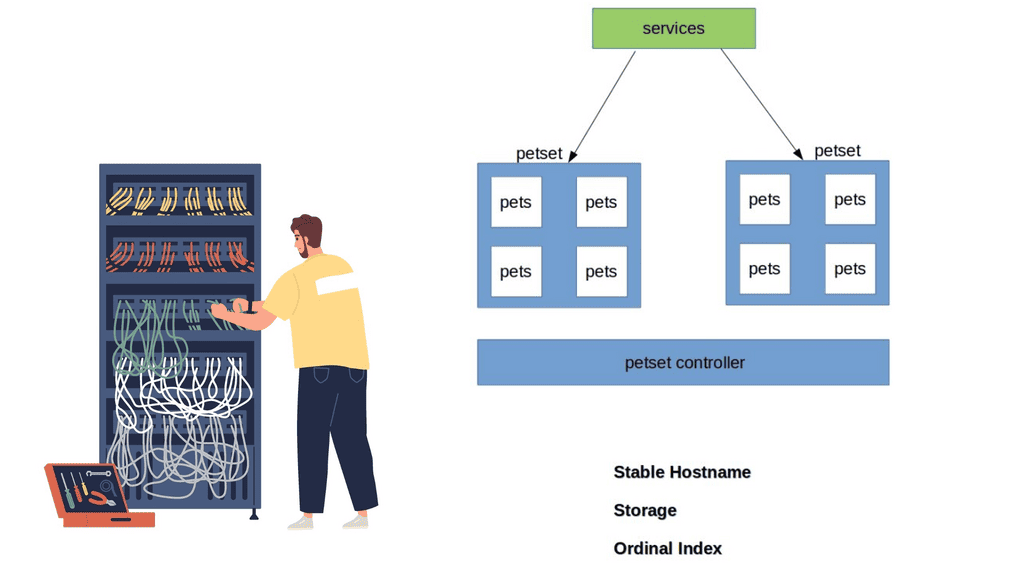

**Kubernetes Pets**

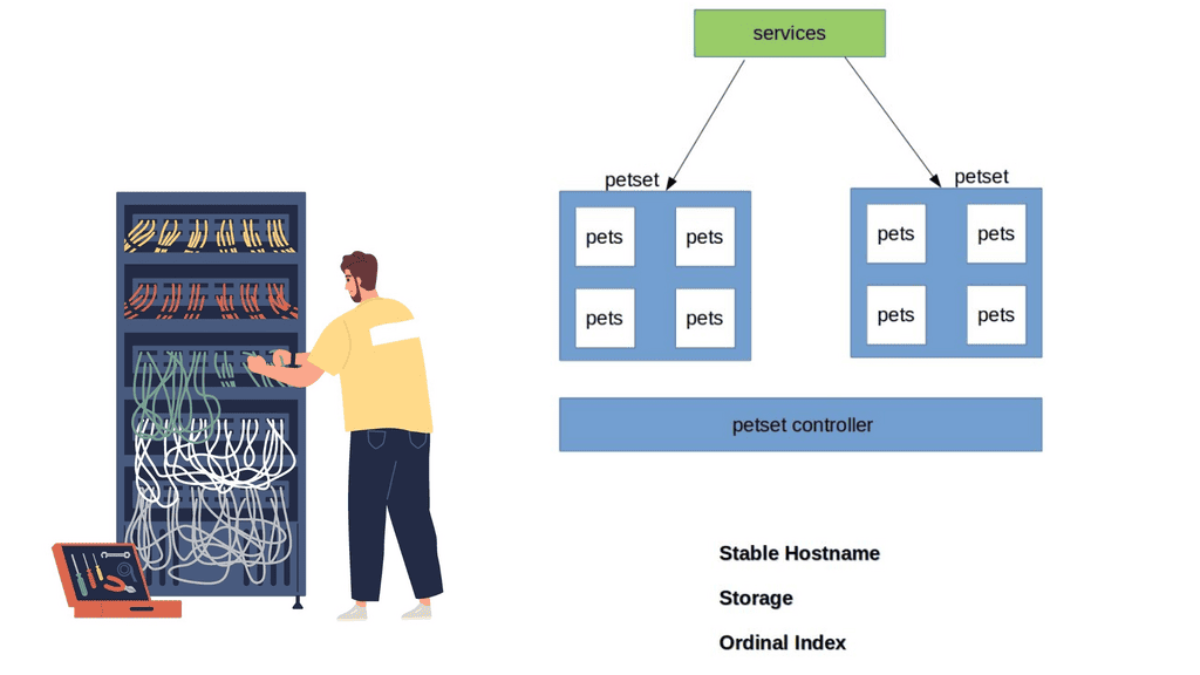

Kubernetes ramped up its stateful support by introducing a new Kubernetes object called PetSets. PetSets are geared towards improving stateful support and were introduced as an alpha resource in Kubernetes release 1.3. A PetSet holds a group of Kubernetes Pets, aka stateful applications that require stable hostnames, persistent disks, a structured lifecycle process, and group identity.

PetSets are used for non-homogeneous instances where each POD has a stable, distinguishable identity. That identity is defined in terms of stable network and storage:

A ) Stable network identities such as DNS and hostname.

B ) Stable storage identity.
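The stable network identity in A) follows a predictable pattern. The sketch below builds the hostname and DNS name for each Pet using the pattern documented for StatefulSets, `<set>-<ordinal>.<service>.<namespace>.svc.<cluster domain>`. The set name `web`, the governing service `nginx`, the `default` namespace, and the `cluster.local` domain are assumptions chosen for illustration.

```python
def pet_dns_names(set_name, service, replicas,
                  namespace="default", cluster_domain="cluster.local"):
    """Return (hostname, fully qualified DNS name) for every replica."""
    names = []
    for ordinal in range(replicas):
        hostname = f"{set_name}-{ordinal}"
        fqdn = f"{hostname}.{service}.{namespace}.svc.{cluster_domain}"
        names.append((hostname, fqdn))
    return names


if __name__ == "__main__":
    for hostname, fqdn in pet_dns_names("web", "nginx", 3):
        print(hostname, fqdn)
```

Because the name depends only on the set name and the ordinal, a replacement POD for `web-1` comes back under the same hostname and DNS record.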

Before PetSets, stateful applications were supported but exceedingly challenging to deploy and manage, especially regarding distributed stateful clusters. In addition, PODs had random names that could not be relied upon. With the introduction of PetSets controllers and Kubernetes Pets, Kubernetes has sharpened its support for stateful and distributed stateful applications.

Challenges: PODs and their shortcomings

Kubernetes enables the specification of applications as a POD file, expressed in YAML or JSON format. The file specifies what containers are to be in a POD. PODs are the smallest deployment unit in Kubernetes and present several challenges for some stateful services. They do not offer a singleton pattern and are temporary by design.

Their constructs are mortal as they are born and die but never resurrected. When a POD dies, it’s permanently gone and replaced with a new instance and fresh identity. This operation model may suit some applications but falls short for others who want to retain identity and storage across restart / reschedule.

Challenges: Replication Controllers and their shortcomings

If you want a POD to resurrect, you need a Replication Controller ( RC ). The RC maintains a specified number of POD replicas; setting that number to one gives you a singleton pattern.

The introduction of the RC is undoubtedly a step in the right direction, as it ensures the correct number of replicas is running at any given time. The RC works alongside Services, which sit in front of the PODs and use labels to map inbound requests to certain PODs.

Services provide a level of abstraction so that the application endpoint never changes. RC is suitable for application deployments requiring weak uncoupled identities, and when naming individual PODs doesn’t matter to the application architecture.
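The label-based mapping that Services use can be sketched in a few lines. This is a simplified model of equality-based selection, not Kubernetes code; the POD names and labels are made up for the example.

```python
def select_pods(pods, selector):
    """Return the names of pods whose labels contain every selector pair."""
    return [
        pod["name"]
        for pod in pods
        if all(pod["labels"].get(key) == value for key, value in selector.items())
    ]


if __name__ == "__main__":
    # Hypothetical PODs with the random-suffix names an RC would generate.
    pods = [
        {"name": "frontend-x7k2p", "labels": {"app": "frontend", "tier": "web"}},
        {"name": "frontend-q9d4m", "labels": {"app": "frontend", "tier": "web"}},
        {"name": "cache-b2c8z", "labels": {"app": "cache"}},
    ]
    print(select_pods(pods, {"app": "frontend"}))
```

The selector never refers to an individual POD name, which is why this model suits interchangeable replicas and falls short when each instance needs its own identity.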

However, they lack certain functionalities that the new PetSet controller provides. So you could say that a PetSet controller is an enhanced RC controller in shiny new clothes.

A key point: “Pets and Cattle.”

The best way to understand Kubernetes Pets and PetSets is to view the cloud infrastructure through the “Pets and Cattle” metaphor. A “Pet” is a special snowflake that you have emotional ties to and that requires special handling, for example, when it’s sick. “Cattle,” by contrast, are viewed as an easily replaceable commodity.

Cattle are similar enough to each other that you can treat them all as equals. Therefore, the application does not suffer much if a cattle dies or needs replacement.

- Cattle refer to stateless applications, and Pets refer to stateful, “build once, run anywhere” applications.

Discussing Stateless applications

A stateless application takes in a request and responds, but nothing is left behind to fulfill subsequent connections. The stateless pattern relies on another independent system, such as a backing database, to hold whatever is needed to satisfy subsequent requests/responses. Stateful applications, by contrast, store data for further use. These types of applications can be inspected with a stateful inspection firewall.

Note: PetSet Objects

Stateful applications are grouped into what’s known as a PetSet object. The PetSet controller has a family-orientated approach, as opposed to the traditional RC, which is mainly concerned with the number of replicas. Under an RC, PODs are stateless disposable units that can be removed and interchanged without affecting the application. The Pets, conversely, are groups of stateful PODs requiring stronger, distinct identities.

Note: PetSet Identities

Within a PetSet, Pets ( stateful applications ) have a unique, distinguishable identity. The identities stick and do not change when restarting/rescheduling. Each Pet has an explicit purpose/role in life that is known throughout the family, and a definitive startup carried out in a structured order that fits its responsibility in the application’s framework.

Initially, the cattle approach forced us to view cloud components as anonymous resources. However, this approach does not fit all application requirements. Stateful applications require us to rethink the new pet-style approach.

Workloads and Application Types Suitable for Pets:

Stateful applications within a PetSet object require unique identities such as :

- Storage

- Ordinal index

- Stable Hostname
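Since the ordinal index is embedded in the stable hostname, an application can recover it at startup, for example to derive a unique server ID. The helper below is a minimal sketch under the assumption that hostnames follow the `<set>-<ordinal>` form; the function name is hypothetical.

```python
import re

# Greedy match so set names that themselves contain dashes still work.
_PET_NAME = re.compile(r"(.+)-([0-9]+)")


def split_pet_hostname(hostname):
    """Split "<set>-<ordinal>" into (set name, ordinal as int)."""
    match = _PET_NAME.fullmatch(hostname)
    if match is None:
        raise ValueError(f"not a <set>-<ordinal> hostname: {hostname!r}")
    return match.group(1), int(match.group(2))


if __name__ == "__main__":
    print(split_pet_hostname("mysql-2"))
    print(split_pet_hostname("my-db-12"))
```

In a running Pet the hostname would typically come from `socket.gethostname()`; it is passed in explicitly here so the sketch runs anywhere.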

The PetSet object supports clustered applications that require stricter membership and identity requirements, such as :

- Discovery of peers for quorum

- Startup and Teardown

Workloads that benefit from PetSets include, for example :

- NoSQL databases and coordination services – clustered software like Cassandra, Zookeeper, and etcd, requiring stable membership.

- Relational databases – MySQL or PostgreSQL, requiring persistent volumes.

Application Roles & Responsibilities

Applications have different roles and responsibilities, requiring different deployment models. For example, a Cassandra cluster has strict membership and identity requirements; specific nodes are designated seed nodes used during startup to discover the cluster.

They come first and act as the contact points for all other nodes to get information about the cluster. Every node requires at least one seed node, and all nodes within a cluster must share the same seed list. No node can join the cluster without a seed node, so the seed role is vital to the application framework.
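Stable names make a shared seed list straightforward to build. The sketch below treats the first few Pets as seeds and joins their DNS names into the comma-separated form that Cassandra's seed provider accepts. Choosing the lowest ordinals as seeds, along with the names `cassandra`, `default`, and `cluster.local`, is an assumption made for illustration.

```python
def seed_list(set_name, service, seed_count,
              namespace="default", cluster_domain="cluster.local"):
    """Return a comma-separated seed list built from the first Pets' DNS names."""
    return ",".join(
        f"{set_name}-{i}.{service}.{namespace}.svc.{cluster_domain}"
        for i in range(seed_count)
    )


if __name__ == "__main__":
    # Every node in the cluster would be configured with this same string.
    print(seed_list("cassandra", "cassandra", 2))
```

Because the seed names never change across restarts, the same list can be baked into every node's configuration.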

Note: Zookeeper – Identification of Peers

Zookeeper and etcd require the identification of peers and of the instances clients should contact. Other databases have a primary/replica (master/slave) model where the primary has unidirectional control over the replica. The primary server has different role and identity requirements than the replica. Properly running these types of services requires more complex features in Kubernetes.
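Peer identification for Zookeeper can be sketched by generating the `server.N=host:port:port` lines of its configuration from the Pets' stable names. Mapping the server ID to ordinal + 1, the service name `zk-hs`, and the ports 2888 and 3888 are conventional choices assumed here, not something the text above specifies.

```python
def zookeeper_server_lines(set_name, service, replicas, namespace="default",
                           peer_port=2888, election_port=3888):
    """Build zoo.cfg "server.N=" lines, one per Pet."""
    lines = []
    for ordinal in range(replicas):
        server_id = ordinal + 1  # assumed mapping: IDs start at 1, ordinals at 0
        host = f"{set_name}-{ordinal}.{service}.{namespace}.svc.cluster.local"
        lines.append(f"server.{server_id}={host}:{peer_port}:{election_port}")
    return lines


if __name__ == "__main__":
    print("\n".join(zookeeper_server_lines("zk", "zk-hs", 3)))
```

Every member can compute the full peer list locally, which is only possible because each Pet's name is known before it starts.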

Closing Points on Kubernetes PetSets

PetSets, now more commonly known as StatefulSets, are a Kubernetes resource designed to manage stateful applications. Unlike the standard ReplicaSets that manage stateless applications by treating all replicas as identical, PetSets offer a more sophisticated way to manage pods. Each pod in a PetSet has a guaranteed unique identity, stable networking, and persistent storage, making them ideal for databases, caches, and other applications that require stable identities.

One of the standout features of PetSets is their ability to maintain a stable identity for each pod, which is crucial for stateful applications. This includes:

- **Persistent Storage:** Each pod in a PetSet can have its own persistent volume, ensuring that data is retained across restarts.

- **Ordered Deployment and Scaling:** PetSets ensure that pods are deployed or scaled up in a specific order, which is essential for applications with interdependencies.

- **Stable Network Identity:** Each pod retains its network identity, which is important for applications that rely on consistent access to resources.

Deploying a stateful application using PetSets involves defining a StatefulSet resource in your Kubernetes cluster. This resource specifies the desired number of replicas, the template for the pods, and any associated persistent volumes. By following best practices in your configurations, you can ensure that your stateful applications benefit from the stability and reliability that PetSets offer.
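As a rough illustration of what such a resource contains, the sketch below assembles a StatefulSet manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The field names follow the `apps/v1` StatefulSet schema; the application name, image, mount path, and storage size are placeholders rather than recommendations.

```python
import json


def statefulset_manifest(name, service, image, replicas, storage="1Gi"):
    """Build a minimal apps/v1 StatefulSet manifest as a plain dict."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "StatefulSet",
        "metadata": {"name": name},
        "spec": {
            "serviceName": service,  # headless Service that governs the DNS names
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,
                        # Placeholder mount path for the per-pod volume.
                        "volumeMounts": [{"name": "data", "mountPath": "/var/lib/data"}],
                    }],
                },
            },
            # One claim per pod is created from this template.
            "volumeClaimTemplates": [{
                "metadata": {"name": "data"},
                "spec": {
                    "accessModes": ["ReadWriteOnce"],
                    "resources": {"requests": {"storage": storage}},
                },
            }],
        },
    }


if __name__ == "__main__":
    print(json.dumps(statefulset_manifest("web", "nginx", "nginx:stable", 3), indent=2))
```

The three parts named above map directly onto the manifest: `replicas`, `template`, and `volumeClaimTemplates`.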

PetSets are particularly beneficial for applications like databases (e.g., MySQL, Cassandra), distributed file systems (e.g., HDFS), and other systems that require stable identities and persistent storage. By utilizing PetSets, organizations can ensure high availability and resilience for their critical stateful applications, even in dynamic environments.

Summary: Kubernetes PetSets

Kubernetes has revolutionized container orchestration, enabling developers to manage and scale their applications efficiently. Among its many features, Kubernetes PetSets offer a powerful way to manage stateful applications. In this blog post, we delved into the intricacies of Kubernetes PetSets, exploring their functionality, use cases, and best practices.

Understanding PetSets

PetSets, introduced as an alpha resource in Kubernetes 1.3, are the predecessor of StatefulSets, which replaced them in Kubernetes 1.5. They provide a way to deploy and manage stateful applications in a Kubernetes cluster. PetSets allow you to assign stable hostnames and persistent storage to each pod replica, making them ideal for applications requiring unique identities and data persistence.

Use Cases for PetSets

PetSets are particularly beneficial for applications like databases, message queues, and distributed file systems, which require stable network identities and persistent storage. Using PetSets, you can ensure that each replica of your stateful application has its own unique hostname and storage, enabling predictable scaling and failover.

How to Create a PetSet

Creating a PetSet involves defining a template for the pods that make up the set. This template specifies attributes such as the container image, resource requirements, and volume claims. Additionally, you can define the ordering and readiness requirements for the pods within the PetSet. We’ll walk you through the step-by-step process of creating a PetSet and highlight important considerations.

Scaling and Updating PetSets

One of PetSets’ key advantages is its ability to scale and update stateful applications seamlessly. We’ll explore the different scaling strategies, including vertical and horizontal scaling, and discuss how to handle rolling updates without compromising your PetSet’s availability and data integrity.

Monitoring and Troubleshooting PetSets

Monitoring and troubleshooting are crucial for managing any application in a production environment. We’ll cover the best practices for monitoring the health and performance of your PetSets and standard troubleshooting techniques to help you identify and resolve issues quickly.

Conclusion:

Kubernetes PetSets provide a powerful solution for managing stateful applications in a Kubernetes cluster. By combining stable hostnames and persistent storage with the benefits of container orchestration, PetSets offer a reliable and scalable approach to deploying and scaling your stateful workloads. Understanding the nuances of PetSets and following best practices will empower you to harness the full potential of Kubernetes for your applications.