SDP Network

The world of networking has undergone a significant transformation with the advent of Software-Defined Perimeter (SDP) networks. These innovative networks have revolutionized connectivity by providing enhanced security, flexibility, and scalability. In this blog post, we will explore the key features and benefits of SDP networks, their impact on traditional networking models, and the future potential they hold.

SDP networks, also known as "Black Clouds," are a paradigm shift in how we approach network security. Unlike traditional networks that rely on perimeter-based security, SDP networks adopt a "Zero Trust" model. This means that every user and device is treated as untrusted until verified, reducing the attack surface and enhancing security.

One of the standout features of SDP networks is their ability to provide granular access control. By authenticating users and devices before granting access, organizations can ensure that only authorized entities connect to the network. This eliminates the risk of unauthorized access and minimizes the potential for data breaches.

Another benefit of SDP networks is their flexibility. These networks are not tied to physical locations, allowing users to securely connect from anywhere in the world. This is especially beneficial for remote workers, as it enables them to access critical resources without compromising security.

SDP networks challenge the traditional hub-and-spoke networking model by introducing a decentralized approach. Instead of relying on a central point of entry, SDP networks establish direct connections between users and resources. This reduces latency, improves performance, and enhances the overall user experience.

As technology continues to evolve, the future of SDP networks looks promising. The rise of Internet of Things (IoT) devices and the increasing reliance on cloud-based services necessitate a more secure and scalable networking solution. SDP networks offer precisely that, with their ability to adapt to changing network demands and provide robust security measures.

SDP networks have emerged as a game-changer in the world of connectivity. By focusing on security, flexibility, and scalability, they address the limitations of traditional networking models. As organizations strive to protect their valuable data and adapt to evolving technological landscapes, SDP networks offer a reliable and future-proof solution.

Matt Conran

Highlights: SDP Network

**The Core Principles of SDP Networks**

At the heart of an SDP network are three core principles: identity-based access, dynamic provisioning, and the principle of least privilege. Identity-based access ensures that only authenticated users can access the network, a significant shift from traditional models that rely on IP addresses. Dynamic provisioning allows the network to adapt in real-time, creating secure connections only when necessary, thus reducing the attack surface. Lastly, the principle of least privilege ensures that users receive only the access necessary to perform their tasks, minimizing potential security risks.
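As a rough illustration of identity-based access combined with least privilege, the sketch below keys every decision on a user's roles rather than on an IP address and denies anything that is not explicitly granted. The role names, resources, and the `POLICY` table are hypothetical and exist only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Identity:
    """An authenticated identity; roles are assumed to come from an identity provider."""
    user: str
    roles: frozenset


# Hypothetical policy table: role -> set of (resource, action) pairs it may use.
POLICY = {
    "billing-analyst": {("billing-db", "read")},
    "billing-admin": {("billing-db", "read"), ("billing-db", "write")},
}


def is_allowed(identity, resource, action):
    """Default deny: permit only what one of the identity's roles explicitly grants."""
    return any(
        (resource, action) in POLICY.get(role, set())
        for role in identity.roles
    )


analyst = Identity("alice", frozenset({"billing-analyst"}))
print(is_allowed(analyst, "billing-db", "read"))   # granted by the analyst role
print(is_allowed(analyst, "billing-db", "write"))  # not granted, so denied
```

Note that the source address of the request never appears in the decision; only the identity and its roles do.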

**How SDP Networks Work**

SDP networks function by utilizing a multi-stage process to verify user identity and device health before granting access. The process begins with an initial trust assessment where users are authenticated through a secure channel. Once authenticated, the user’s device undergoes a health check to ensure it meets security requirements. Following this, access is granted on a need-to-know basis, with micro-segmentation techniques used to isolate resources and prevent lateral movement within the network. This layered approach significantly enhances network security by ensuring that only verified users gain access to the resources they need.
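The multi-stage check described above can be sketched as a small pipeline. This is a simplified model under stated assumptions, not any vendor's implementation: the user store, the device health attributes, and the entitlement table are all hypothetical.

```python
import hmac
from dataclasses import dataclass


@dataclass
class Device:
    device_id: str
    disk_encrypted: bool
    os_patched: bool


# Hypothetical stores; a real deployment would query an IdP and a device inventory.
KNOWN_USERS = {"alice": "s3cret-token"}
ENTITLEMENTS = {"alice": {"wiki", "git"}}


def authenticate(user, token):
    """Stage 1: verify the user's credential (constant-time comparison)."""
    return hmac.compare_digest(KNOWN_USERS.get(user, ""), token)


def device_healthy(device):
    """Stage 2: the device must meet the (assumed) security baseline."""
    return device.disk_encrypted and device.os_patched


def request_access(user, token, device, resource):
    """Stage 3: grant access only to resources the user is entitled to."""
    if not authenticate(user, token):
        return "deny: authentication failed"
    if not device_healthy(device):
        return "deny: device failed health check"
    if resource not in ENTITLEMENTS.get(user, set()):
        return "deny: not entitled to resource"
    return "allow"


laptop = Device("laptop-01", disk_encrypted=True, os_patched=True)
print(request_access("alice", "s3cret-token", laptop, "git"))
```

Each stage can fail independently, and a failure at any stage stops the request before the resource is ever contacted.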

Black Clouds – SDP

SDP networks, also known as “Black Clouds,” represent a paradigm shift in network security. Unlike traditional perimeter-based security models, SDP networks focus on dynamically creating individualized perimeters around each user, device, or application. By adopting a Zero-Trust approach, SDP networks ensure that only authorized entities can access resources, reducing the attack surface and enhancing overall security.

SDP networks are a paradigm shift in network security. Unlike traditional perimeter-based approaches, SDP networks adopt a zero-trust model, where every user and device must be authenticated and authorized before accessing resources. This eliminates the vulnerabilities of a static perimeter and ensures secure access from anywhere.

Benefits of Software-Defined Perimeter:

1. Enhanced Security: SDP provides an additional layer of security by ensuring that only authenticated and authorized users can access the network. By implementing granular access controls, SDP reduces the attack surface and minimizes the risk of unauthorized access, making it significantly harder for cybercriminals to breach the system.

2. Improved Flexibility: Traditional network architectures often struggle to accommodate the increasing number of devices and the demand for remote access. SDP enables businesses to scale their network infrastructure more easily, allowing seamless connectivity for employees, partners, and customers, regardless of location. This flexibility is especially valuable in today’s remote work environment.

3. Simplified Network Management: SDP simplifies network management by centralizing access control policies. This centralized approach reduces complexity and streamlines granting and revoking access privileges. Additionally, SDP eliminates the need for VPNs and complex firewall rules, making network management more efficient and cost-effective.

4. Mitigated DDoS Attacks: Distributed Denial of Service (DDoS) attacks can cripple an organization’s network infrastructure, leading to significant downtime and financial losses. SDP helps mitigate the impact of DDoS attacks by keeping protected services hidden: traffic from unauthenticated sources is dropped before it reaches the application, so attack traffic is less likely to overwhelm the resources behind the perimeter. This proactive defense mechanism helps keep network resources available and accessible to legitimate users.

5. Compliance and Regulatory Requirements: Many industries are bound by strict regulatory requirements, such as healthcare (HIPAA) or finance (PCI-DSS). SDP helps organizations meet these requirements by providing a secure framework that ensures data privacy and protection. Implementing SDP can significantly simplify the compliance process and reduce the risk of non-compliance penalties.

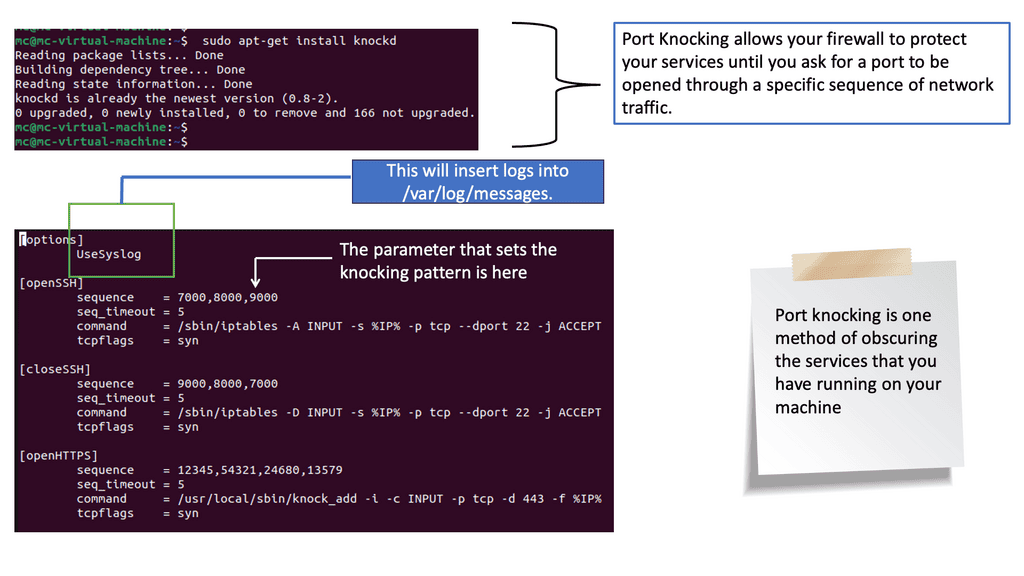

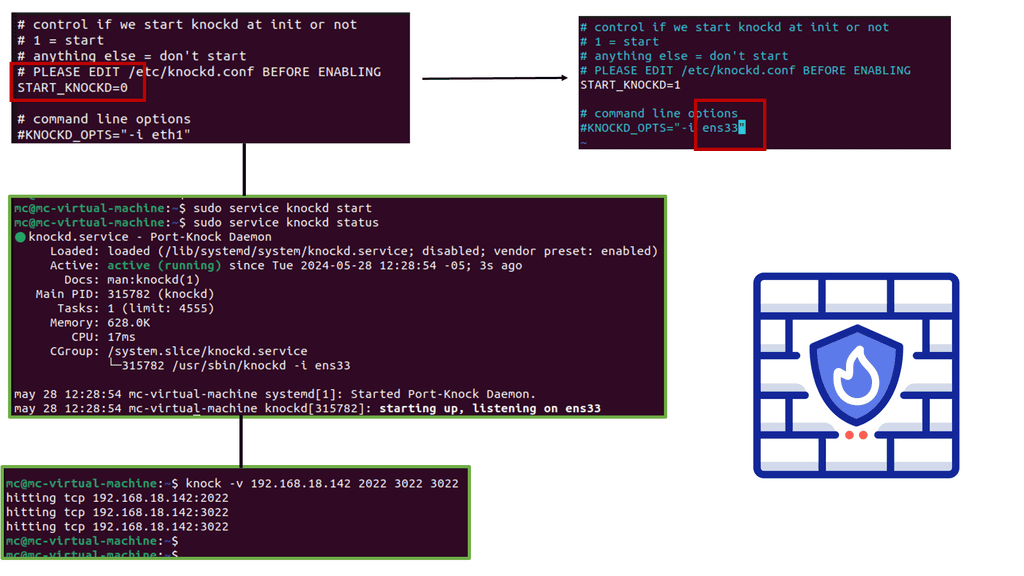

Example: Understanding Port Knocking

Port knocking is a technique in which a sequence of connection attempts is made to specific ports on a remote system. Made in a predetermined order, these attempts serve as a secret “knock” that triggers the opening of a closed port. Port knocking acts as a virtual doorbell, allowing authorized users to access a system that would otherwise remain invisible and protected from potential threats.

The Process: Port Knocking

To delve deeper, let’s explore how port knocking works. When a connection attempt is made to a closed port, the firewall silently drops it, leaving no trace of the effort. However, when the correct sequence of connection attempts is made, the firewall recognizes the pattern and dynamically opens the desired port, granting access to the authorized user. This sequence can consist of connections to multiple ports, further enhancing the system’s security.
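The sequence-matching logic can be sketched as a small state machine that tracks each source address separately. This is a minimal model of the idea only: the port numbers and the time window are hypothetical, and a real deployment would hook into the firewall rather than call a method.

```python
import time


class KnockTracker:
    """Tracks per-source progress through a secret knock sequence."""

    def __init__(self, sequence, window_seconds=10.0):
        self.sequence = list(sequence)
        self.window = window_seconds
        self.progress = {}  # source IP -> (next index expected, time of first knock)

    def knock(self, src_ip, port, now=None):
        """Record one connection attempt; return True when the sequence completes."""
        now = time.monotonic() if now is None else now
        index, started = self.progress.get(src_ip, (0, now))
        if now - started > self.window:
            # Too slow: forget earlier knocks and start over.
            index, started = 0, now
        if port == self.sequence[index]:
            index += 1
            if index == len(self.sequence):
                self.progress.pop(src_ip, None)
                return True  # caller would now open the protected port for src_ip
            self.progress[src_ip] = (index, started)
        else:
            # Any wrong knock resets this source (a deliberate simplification).
            self.progress.pop(src_ip, None)
        return False


tracker = KnockTracker([7000, 8000, 9000])
for port in (7000, 8000, 9000):
    opened = tracker.knock("10.0.0.5", port)
print(opened)
```

Because every attempt is otherwise dropped silently, an observer who does not know the sequence sees only closed ports.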

**Understand your flows**

Network flows are time-bound communications between two systems. A single flow can be directly mapped to an entire conversation using a bidirectional transport protocol, such as TCP. However, a single flow for unidirectional transport protocols (e.g., UDP) might capture only half of a network conversation. Without a deep understanding of the application data, an observer on the network may not associate two UDP flows logically.
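To make the difference concrete, the sketch below builds a flow key from a packet's 5-tuple. For TCP the two endpoints are sorted so that both directions collapse into one flow; for UDP each direction keeps its own key, which is why a request and its reply look unrelated. The `Packet` type and addresses are hypothetical.

```python
from collections import namedtuple

Packet = namedtuple("Packet", "proto src_ip src_port dst_ip dst_port")


def flow_key(pkt):
    """Return the key a flow collector might use to group packets."""
    src = (pkt.src_ip, pkt.src_port)
    dst = (pkt.dst_ip, pkt.dst_port)
    if pkt.proto == "tcp":
        # Bidirectional: order the endpoints so A->B and B->A share one key.
        return (pkt.proto, *sorted([src, dst]))
    # Unidirectional (e.g., UDP): each direction is its own flow.
    return (pkt.proto, src, dst)


tcp_req = Packet("tcp", "10.0.0.1", 50000, "10.0.0.2", 443)
tcp_rep = Packet("tcp", "10.0.0.2", 443, "10.0.0.1", 50000)
udp_req = Packet("udp", "10.0.0.1", 50001, "10.0.0.2", 53)
udp_rep = Packet("udp", "10.0.0.2", 53, "10.0.0.1", 50001)

print(flow_key(tcp_req) == flow_key(tcp_rep))  # same conversation, same flow
print(flow_key(udp_req) == flow_key(udp_rep))  # two separate flows
```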

To move an existing production network to a zero-trust model, a system must first capture all of its flow activity. The new security model should consider logging flows in the network over a long period to discover which network connections exist. Moving to a zero-trust model without this up-front information gathering will lead to frequent network communication issues, making the project appear invasive and disruptive.
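One way to turn logged flows into a starting point for policy is to count how often each source, destination, protocol, and port combination appears and treat the recurring ones as candidate allow rules for review. The record fields and the `min_seen` threshold below are assumptions for illustration, not a standard log format.

```python
from collections import Counter


def summarize_flows(records):
    """Count observations of each (src, dst, proto, dst_port) combination."""
    counts = Counter()
    for r in records:
        counts[(r["src"], r["dst"], r["proto"], r["dst_port"])] += 1
    return counts


def candidate_allow_rules(counts, min_seen=2):
    """Connections seen at least min_seen times become candidates for human review."""
    return sorted(key for key, seen in counts.items() if seen >= min_seen)


# Hypothetical flow records gathered over the observation period.
records = [
    {"src": "web-01", "dst": "db-01", "proto": "tcp", "dst_port": 5432},
    {"src": "web-01", "dst": "db-01", "proto": "tcp", "dst_port": 5432},
    {"src": "web-01", "dst": "dns-01", "proto": "udp", "dst_port": 53},
]

for rule in candidate_allow_rules(summarize_flows(records)):
    print(rule)
```

A connection seen only once is not necessarily illegitimate; it may simply be rare, which is one reason a long observation window matters.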

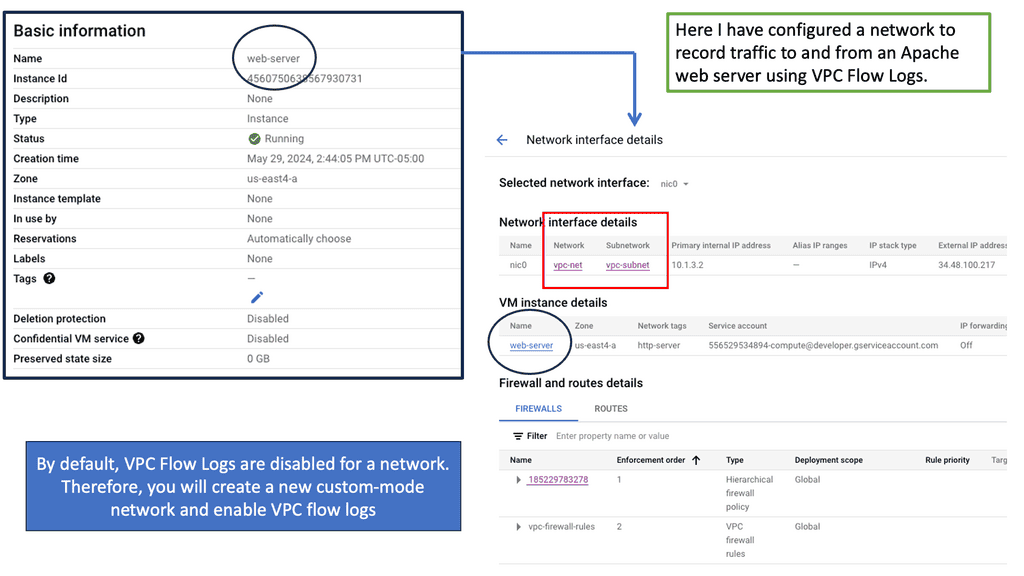

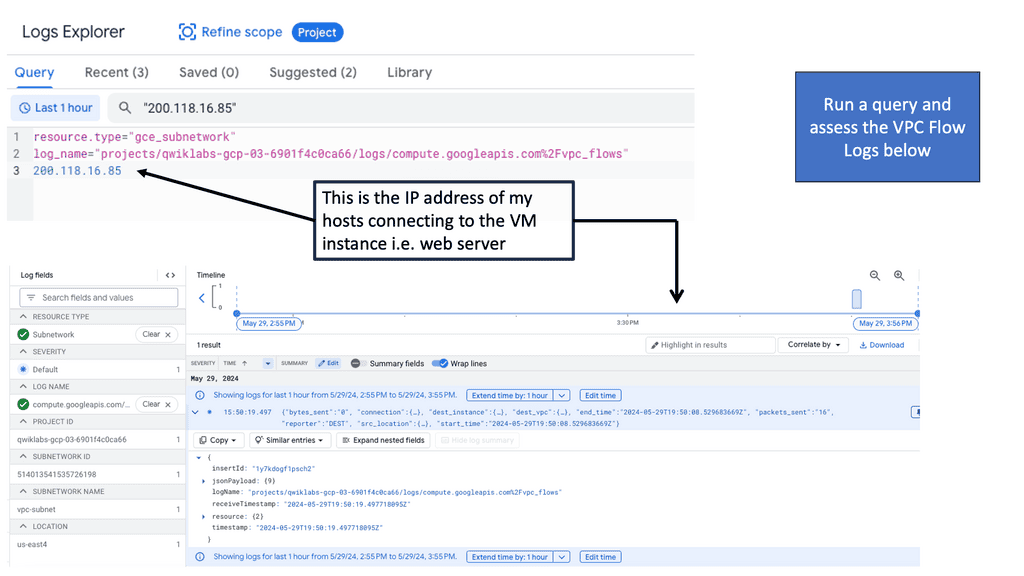

Example: VPC Flow Logs

### What are VPC Flow Logs?

VPC Flow Logs are a feature in Google Cloud that captures information about the IP traffic going to and from network interfaces in your VPC. These logs offer detailed insights into network activity, helping you to identify potential security risks, troubleshoot network issues, and analyze the impact of network traffic on your applications.

### How VPC Flow Logs Work

When you enable VPC Flow Logs, Google Cloud begins collecting sampled data about network flows, including source and destination IP addresses, protocols, ports, and byte counts. This data is written to Cloud Logging, from where it can be exported to Cloud Storage, BigQuery, or Pub/Sub, depending on your configuration. You can use this data for near-real-time monitoring or historical analysis, providing a comprehensive view of your network’s behavior.

### Benefits of Using VPC Flow Logs

1. **Enhanced Security**: By monitoring network traffic, VPC Flow Logs help you detect suspicious activity and potential security threats, enabling you to take proactive measures to protect your infrastructure.

2. **Troubleshooting and Performance Optimization**: With detailed traffic data, you can easily identify bottlenecks or misconfigurations in your network, allowing you to optimize performance and ensure seamless operations.

3. **Cost Management**: Understanding your network traffic patterns can help you manage and predict costs associated with data transfer, ensuring you stay within budget.

4. **Compliance and Auditing**: VPC Flow Logs provide a valuable record of network activity, assisting in compliance with industry regulations and internal auditing requirements.

### Getting Started with VPC Flow Logs on Google Cloud

To start using VPC Flow Logs, you’ll need to enable them in your Google Cloud project, typically on the subnets whose traffic you want to record. This process involves configuring the logging settings, choosing where the logs are exported, and setting any filters to narrow down the data collected. Google Cloud provides detailed documentation to guide you through each step.

**Creating a software-defined perimeter**

With a software-defined perimeter (SDP) architecture, networks are logically air-gapped, dynamically provisioned, on-demand, and isolated from unprotected networks. An SDP system enhances security by requiring authentication and authorization before users or devices can access assets concealed by the SDP system. Additionally, by mandating connection pre-vetting, SDP restricts all connections into the trusted zone based on who may connect, from which devices, to what services and infrastructure, and other factors.

Zero Trust – Google Cloud Data Centers

**The Essence of Zero Trust Network Design**

Before delving into VPC Service Controls, it’s essential to grasp the concept of zero trust network design. Unlike traditional security models that rely heavily on perimeter defenses, zero trust operates on the principle that threats can exist both outside and inside your network. This model requires strict verification for every device, user, and application attempting to access resources. By adopting a zero trust approach, organizations can minimize the risk of security breaches and ensure that sensitive data remains protected.

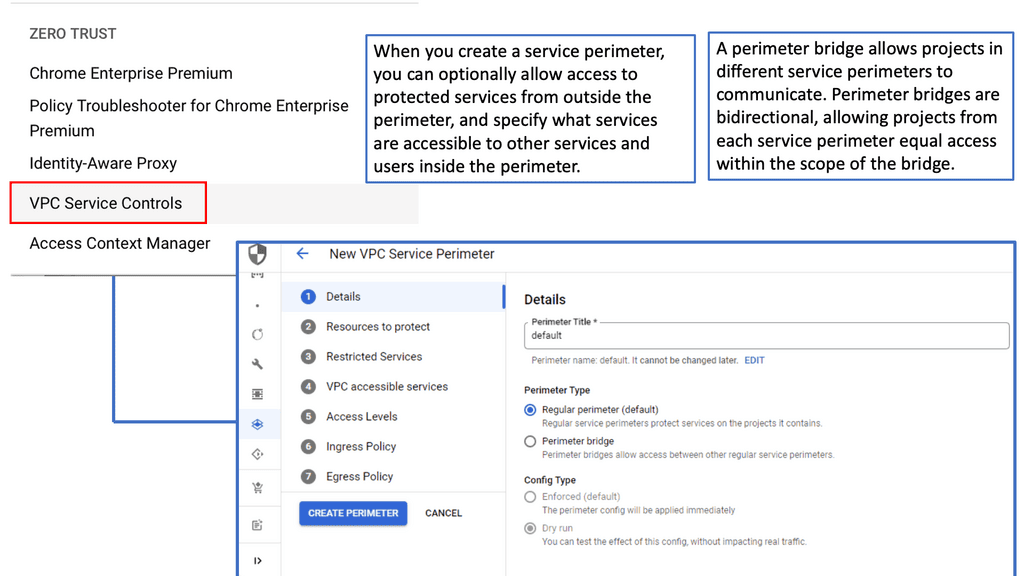

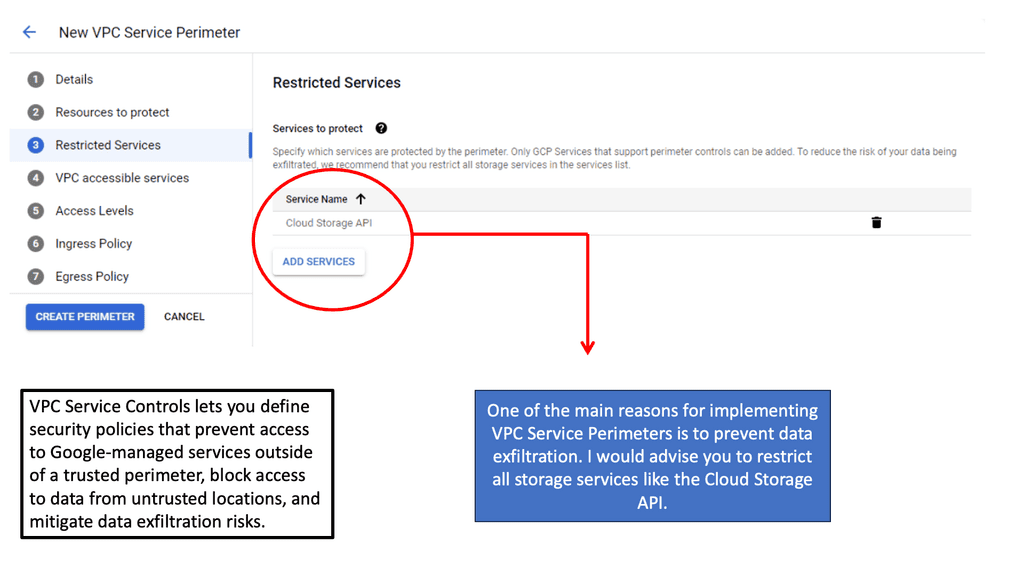

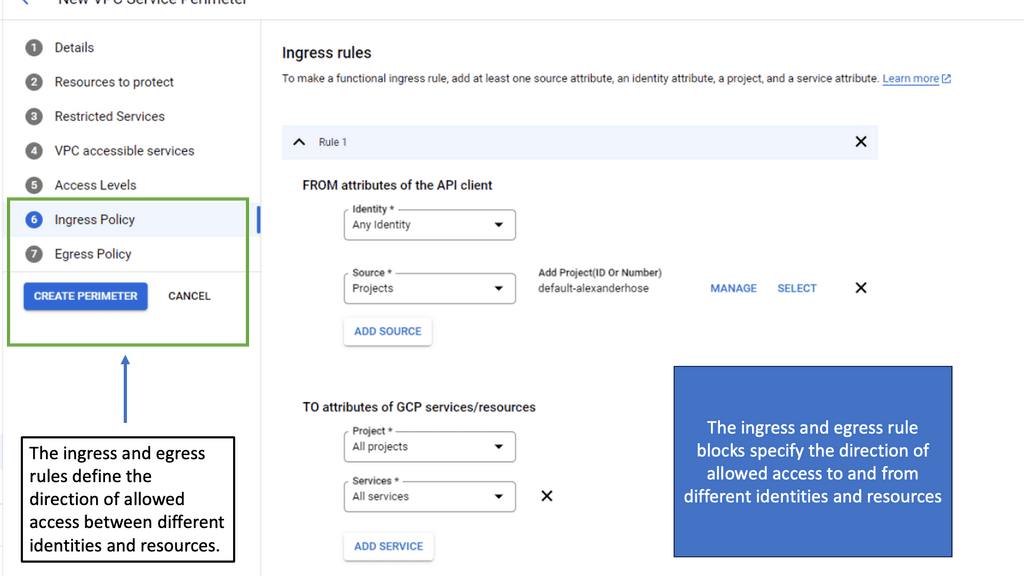

**How VPC Service Controls Enhance Security**

VPC Service Controls are a critical component of Google Cloud’s security offerings, designed to bolster the protection of your cloud resources. They enable enterprises to define a security perimeter around their services, preventing data exfiltration and unauthorized access. With VPC Service Controls, you can:

– Create service perimeters to restrict access to specific Google Cloud services.

– Define access levels based on IP addresses and device attributes.

– Implement policies that prevent data from being transferred to unauthorized networks.

These controls provide an additional layer of security, ensuring that your cloud infrastructure adheres to the zero trust principles.

Creating a Zero Trust Environment

Software-defined perimeter is a security framework that shifts the focus from traditional perimeter-based network security to a more dynamic and user-centric approach. Instead of relying on a fixed network boundary, SDP creates a “Zero Trust” environment, where users and devices are authenticated and authorized individually before accessing network resources. This approach ensures that only trusted entities gain access to sensitive data, regardless of their location or network connection.

Implementing SDP Networks:

Implementing SDP networks requires careful planning and execution. The first step is to assess the existing network infrastructure and identify critical assets and access requirements. Next, organizations must select a suitable SDP solution and integrate it into their network architecture. This involves deploying SDP controllers, gateways, and agents and configuring policies to enforce access control. It is crucial to involve all stakeholders and conduct thorough testing to ensure a seamless deployment.

Zero trust framework:

The zero-trust framework for networking and security is here for a good reason. There are various bad actors, ranging from opportunistic and targeted to state-level, and all are well prepared to find ways to penetrate a hybrid network. As a result, there is now a compelling reason to implement the zero-trust model for networking and security.

An SDP network brings SDP security. The software-defined perimeter is heavily promoted as a replacement for the virtual private network (VPN) and, in some cases, firewalls, on the grounds of ease of use and end-user experience.

Dynamic tunnel of 1:

It also provides a solid SDP security framework that uses a dynamic tunnel of 1 per app per user. This offers segmentation at a micro level, providing a secure enclave for entities requesting network resources. These are micro-perimeters and zero-trust networks that can be hardened with technology such as SSL security and single packet authorization.
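The idea behind single packet authorization can be sketched with an HMAC-protected request that a gateway checks before it opens anything. This is a simplified model, not the wire format of any real SPA implementation; the field names, the shared key, and the 30-second freshness window are all assumptions.

```python
import hashlib
import hmac
import json
import os
import time


def build_spa_packet(key, user, service, now=None):
    """Client side: a single authenticated message naming the requested service."""
    body = {
        "user": user,
        "service": service,
        "ts": time.time() if now is None else now,
        "nonce": os.urandom(16).hex(),
    }
    payload = json.dumps(body, sort_keys=True).encode()
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return payload, tag


class Gateway:
    """Gateway side: drop everything unless the packet is authentic, fresh, and new."""

    def __init__(self, key, max_age=30.0):
        self.key = key
        self.max_age = max_age
        self.seen_nonces = set()

    def accept(self, payload, tag, now=None):
        expected = hmac.new(self.key, payload, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, tag):
            return False  # wrong key or tampered payload: drop silently
        body = json.loads(payload)
        now = time.time() if now is None else now
        if abs(now - body["ts"]) > self.max_age:
            return False  # stale packet
        if body["nonce"] in self.seen_nonces:
            return False  # replayed packet
        self.seen_nonces.add(body["nonce"])
        return True  # caller would now open a per-user, per-service tunnel


key = os.urandom(32)
gateway = Gateway(key)
payload, tag = build_spa_packet(key, "alice", "ssh")
print(gateway.accept(payload, tag))  # first use is accepted
print(gateway.accept(payload, tag))  # replay is rejected
```

Because the gateway never replies to packets that fail these checks, an unauthenticated scanner cannot tell that a service exists behind it.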

SDP Network

A software-defined perimeter is a security approach that controls resource access and forms a virtual boundary around networked resources. Think of an SDP network as a 1-to-1 mapping, unlike a VLAN, which can have many hosts within, all of which could be of different security levels.

Also, with an SDP network, we create a security perimeter via software rather than hardware; an SDP can hide an organization’s infrastructure from outsiders, regardless of location. Now, we have a security architecture that is location-agnostic. As a result, employing SDP architectures will decrease the attack surface and mitigate threats from internal and external bad actors. The SDP framework is based on the U.S. Department of Defense’s Defense Information Systems Agency’s (DISA) need-to-know model from 2007.

Feature 1: Dynamic Access Control

One of the primary features of SDP is its ability to dynamically control access to network resources. Unlike traditional perimeter-based security models, which grant access based on static rules or IP addresses, SDP employs a more granular approach. It leverages context-awareness and user identity to dynamically allocate access rights, ensuring only authorized users can access specific resources. This feature eliminates the risk of unauthorized access, making SDP an ideal solution for securing sensitive data and critical infrastructure.

Feature 2: Zero Trust Architecture

SDP embraces zero-trust, a security paradigm that assumes no user or device can be trusted by default, regardless of their location within the network. With SDP, every request to access network resources is subject to authentication and authorization, regardless of whether the user is inside or outside the corporate network. By adopting a zero-trust architecture, SDP eliminates the concept of a network perimeter and provides a more robust defense against internal and external threats.

Feature 3: Application Layer Protection

Traditional security solutions often focus on securing the network perimeter, leaving application layers vulnerable to targeted attacks. SDP addresses this limitation by incorporating application layer protection as a core feature. By creating micro-segmented access controls at the application level, SDP ensures that only authenticated and authorized users can interact with specific applications or services. This approach significantly reduces the attack surface and enhances the overall security posture.

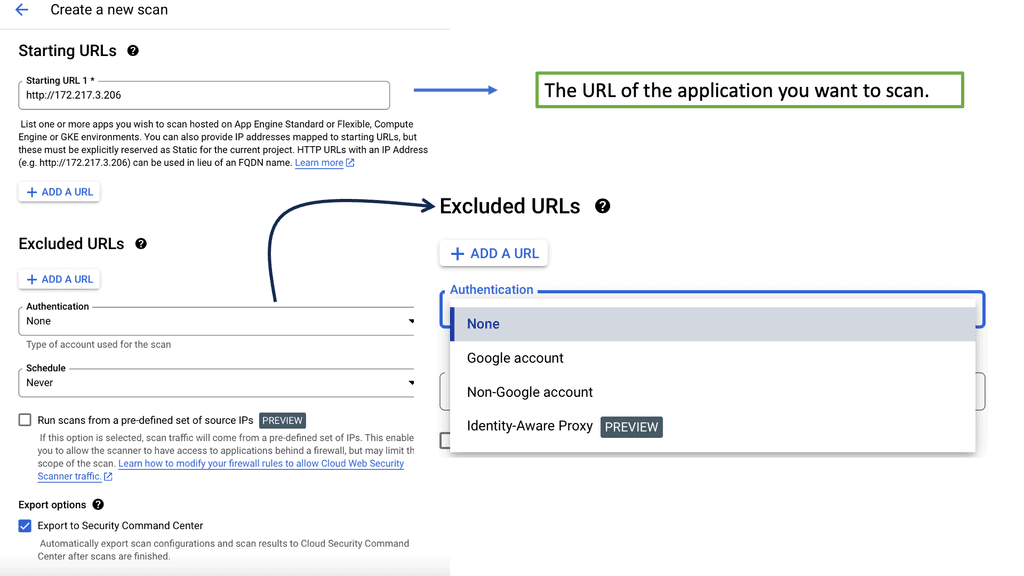

Example Technology: Web Security Scanner

**How Web Security Scanners Work**

Web security scanners function by crawling through web applications and testing for known vulnerabilities. They analyze various components, such as forms, cookies, and headers, to identify potential security flaws. By simulating attacks, these scanners provide insights into how a malicious actor might exploit your web application. This information is crucial for developers to patch vulnerabilities before they can be exploited, thus fortifying your web defenses.

Feature 4: Scalability and Flexibility

SDP offers scalability and flexibility to accommodate the dynamic nature of modern business environments. Whether an organization needs to provide secure access to a handful of users or thousands of employees, SDP can scale accordingly. Additionally, SDP seamlessly integrates with existing infrastructure, allowing businesses to leverage their current investments without needing a complete overhaul. This adaptability makes SDP a cost-effective solution with a low barrier to entry.

**SDP Security**

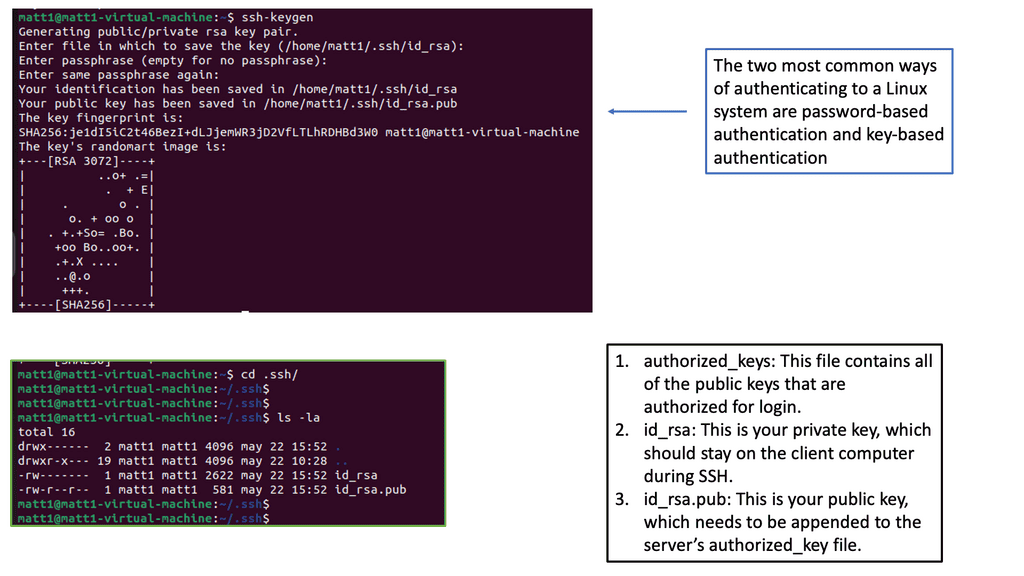

Authentication and Authorization

So, how can one authenticate and authorize themselves when creating an SDP network and SDP security?

First, trust is the main element within an SDP network. Zero-trust environments therefore need authentication and authorization mechanisms that can establish trust at the device, user, or application level.

When something presents itself to a zero-trust network, it must go through several SDP security stages before access is granted. The entire network is dark, meaning that resources drop all incoming traffic by default, providing an extremely secure posture. Based on this simple premise, a more secure, robust, and dynamic network of geographically dispersed services and clients can be created.

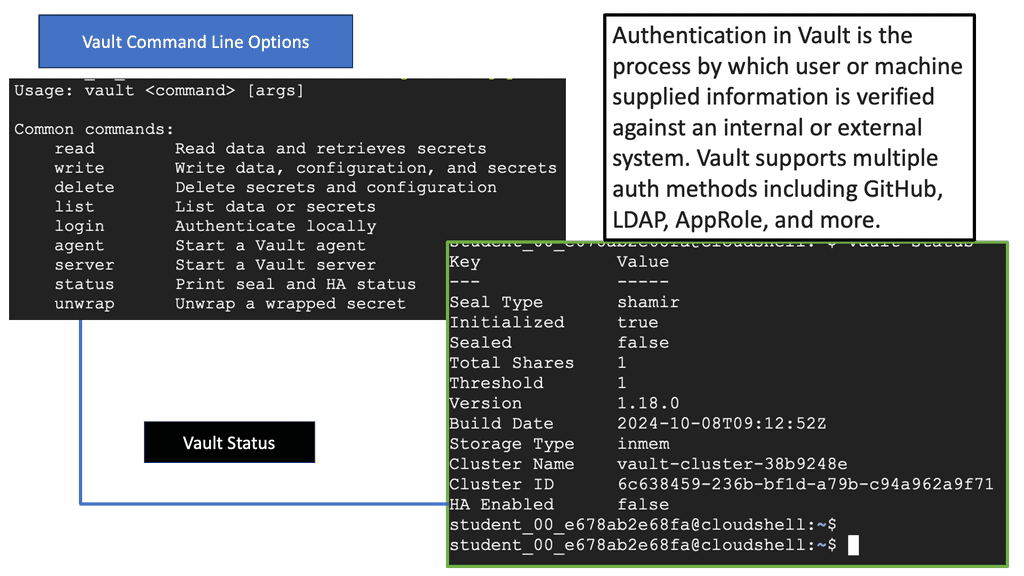

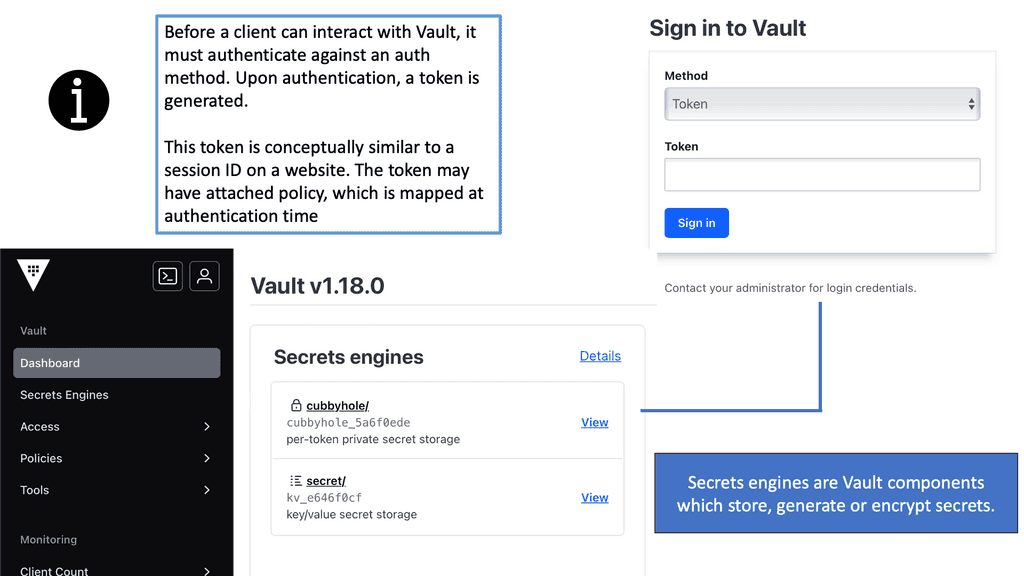

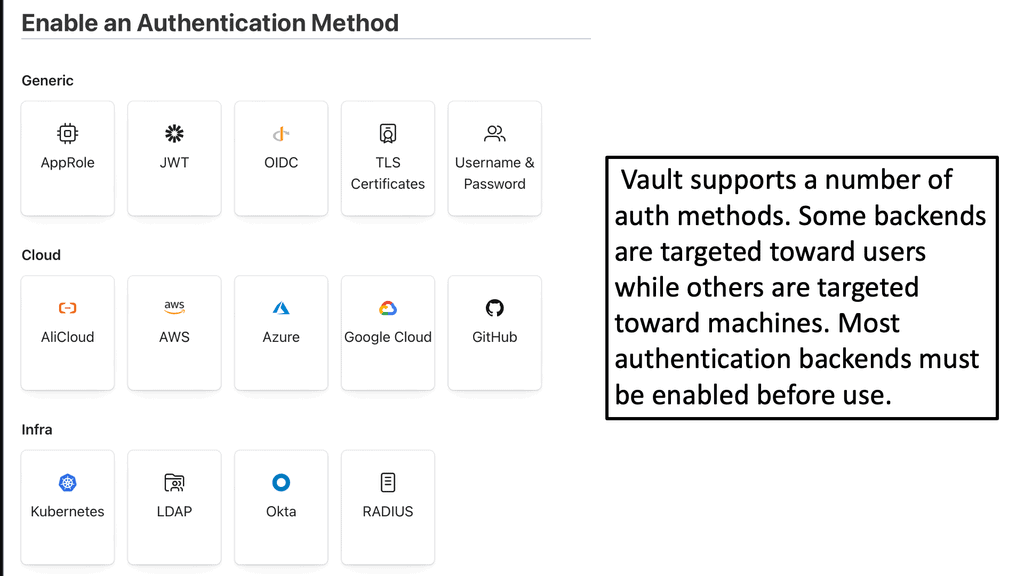

Example: Authentication with Vault

### Understanding Authentication Methods

Vault offers a variety of authentication methods, allowing it to integrate seamlessly into diverse environments. These methods determine how users and applications prove their identity to Vault before gaining access to secrets. Some of the most common methods include:

– **Token Authentication**: The simplest form of authentication, where tokens are used as a bearer of identity. Tokens can be created with specific policies that define what actions can be performed.

– **AppRole Authentication**: This method is designed for applications and automated processes. A client authenticates with a role ID and a secret ID and receives a token scoped to the policies attached to that role.

– **LDAP Authentication**: Ideal for organizations already using LDAP directories, this method allows users to authenticate using their existing LDAP credentials, streamlining the authentication process.

– **OIDC and OAuth2**: These methods support single sign-on (SSO) capabilities, integrating with identity providers to authenticate users based on their existing identities.

Understanding these methods is crucial for configuring Vault in a way that best suits your organization’s security needs.

### Implementing Secure Access Control

Once you’ve chosen the appropriate authentication method, the next step is to implement secure access control. Vault uses policies to define what authenticated users and applications can do. These policies are written in HCL (HashiCorp Configuration Language) or JSON and can be as fine-grained as required. For instance, you might create a policy that allows a specific application to read certain secrets but not modify them.

By leveraging Vault’s policy framework, organizations can ensure that only authorized entities have access to sensitive data, significantly reducing the risk of unauthorized access.
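The read-but-not-modify example can be modeled in a few lines. This is a simplified stand-in for how path-based policies behave, not Vault's own evaluation logic or API: the path, the capability names in the table, and the longest-match rule used here are assumptions chosen for illustration.

```python
# Hypothetical policy: path pattern -> capabilities granted on matching paths.
POLICY = {
    "secret/data/billing/*": {"read", "list"},
}


def capabilities_for(policy, path):
    """Return the capabilities of the longest matching pattern (default: none)."""
    best_length, granted = -1, set()
    for pattern, caps in policy.items():
        if pattern.endswith("*"):
            matches = path.startswith(pattern[:-1])
        else:
            matches = path == pattern
        if matches and len(pattern) > best_length:
            best_length, granted = len(pattern), caps
    return granted


def can(policy, path, capability):
    """Default deny: a capability must be explicitly granted on the path."""
    return capability in capabilities_for(policy, path)


print(can(POLICY, "secret/data/billing/api-key", "read"))    # allowed
print(can(POLICY, "secret/data/billing/api-key", "update"))  # denied
print(can(POLICY, "secret/data/hr/salaries", "read"))        # denied, no match
```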

### Automating Secrets Management

One of Vault’s standout features is its ability to automate secrets management. Traditional secrets management involves manually rotating keys and credentials, a process that’s not only labor-intensive but also prone to human error. Vault automates this process, dynamically generating and rotating secrets as needed. This automation not only enhances security but also frees up valuable time for IT teams to focus on other critical tasks.

For example, Vault can dynamically generate database credentials for applications, ensuring that they always have access to valid and secure credentials without manual intervention.
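The lifecycle of a dynamically generated credential can be sketched as follows. This models only the concept of short-lived, per-request credentials with a lease; it does not call Vault or a database, and the class name, role name, and TTL are hypothetical.

```python
import secrets
import time


class DynamicCredentialIssuer:
    """Issues unique, short-lived credentials and tracks when each lease expires."""

    def __init__(self, ttl_seconds=3600):
        self.ttl = ttl_seconds
        self.leases = {}  # username -> expiry timestamp

    def issue(self, role, now=None):
        now = time.time() if now is None else now
        username = f"{role}-{secrets.token_hex(4)}"
        password = secrets.token_urlsafe(24)
        self.leases[username] = now + self.ttl
        # A real system would also create this account in the target database.
        return username, password

    def is_valid(self, username, now=None):
        now = time.time() if now is None else now
        return self.leases.get(username, 0) > now


issuer = DynamicCredentialIssuer(ttl_seconds=60)
user, password = issuer.issue("reporting-app")
print(user, issuer.is_valid(user))
```

Because each credential is unique to one request and expires on its own, a leaked credential is useful to an attacker only for the remainder of its lease.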

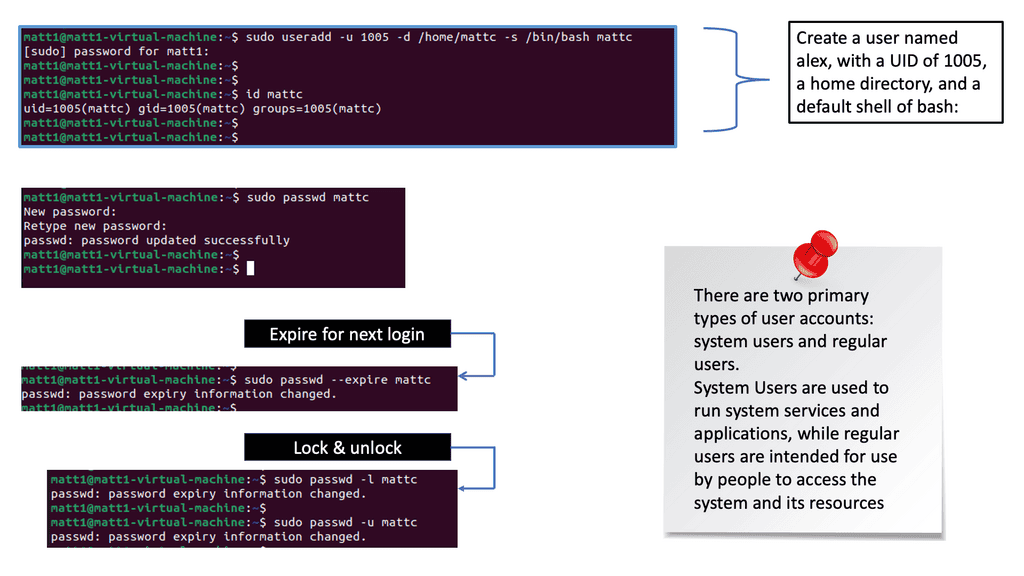

- A key point: The difference between Authentication and Authorization.

Before we go any further, it’s essential to understand the difference between authentication and authorization. In the zero-trust world, an end host is examined as a device and a user, which together form an agent. Device and user authentication are carried out before the agent is formed: the device is authenticated first, and then the user. Authentication confirms an identity, while authorization determines what that identity may access.

**The consensus among SDP network vendors**

Generally, with most zero-trust and SDP VPN network vendors, the agent is only formed once valid device and user authentication has been carried out. The authentication methods used to validate the device and user can be separate. A device that needs to identify itself to the network can be authenticated with X.509 certificates.

A user can be authenticated by other means, such as credentials checked against an LDAP server, if the zero-trust solution has that as an integration point. The authentication methods for devices and users don’t have to be tightly coupled, which provides flexibility.

SDP Security with SDP Network: X.509 certificates

IP addresses are used for connectivity, not authentication, and don’t have any fields to implement authentication. The authentication must be handled higher up the stack. So, we need to use something else to define identity, and that would be the use of certificates. X.509 certificates are a digital certificate standard that allows identity to be verified through a chain of trust and is commonly used to secure device authentication. X.509 certificates can carry a wealth of information within the standard fields that can fulfill the requirements to carry particular metadata.

To provide identity and bootstrap encrypted communications, X.509 certificates rely on a pair of mathematically related cryptographic keys: a public key and a private key. The most common are RSA (Rivest–Shamir–Adleman) key pairs.

The private key is secret and held by the certificate’s owner, while the public key, as the name suggests, is not secret and is distributed. Data encrypted with the public key can be decrypted only with the matching private key; conversely, a signature created with the private key can be verified by anyone holding the public key. Without the correct private key, it is computationally infeasible to decrypt data that was encrypted with the public key.
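The relationship between the two keys can be shown with textbook RSA on deliberately tiny numbers. This is for intuition only and is not secure: real keys are thousands of bits long and use padding schemes that are omitted here. The primes and the exponent are illustrative choices.

```python
# Toy RSA with tiny primes (requires Python 3.8+ for pow(e, -1, phi)).
p, q = 61, 53
n = p * q                 # public modulus
phi = (p - 1) * (q - 1)
e = 17                    # public exponent
d = pow(e, -1, phi)       # private exponent: inverse of e modulo phi


def encrypt(message):
    """Anyone can encrypt with the public key (e, n)."""
    return pow(message, e, n)


def decrypt(ciphertext):
    """Only the private key holder (d, n) can decrypt."""
    return pow(ciphertext, d, n)


def sign(message):
    """Only the private key holder can produce a signature."""
    return pow(message, d, n)


def verify(message, signature):
    """Anyone can check a signature with the public key."""
    return pow(signature, e, n) == message


message = 65
assert decrypt(encrypt(message)) == message
assert verify(message, sign(message))
print("round trip and signature check succeeded")
```

The same key pair supports both directions: encryption toward the key owner and signatures from the key owner, which is what certificate-based device authentication relies on.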

SDP Security with SDP Network: Private key storage

Before we discuss the public key, let’s examine how we secure the private key. Device authentication is undermined if bad actors gain access to the private key, because they can then impersonate the device. One way to secure the private key is to restrict it with access rights. However, if the system is compromised and the attacker gains elevated access, the key is left unprotected.

The best way to secure and store private device keys is to use crypto processors, such as a trusted platform module (TPM). A cryptoprocessor is essentially a chip embedded in the device.

The private keys are bound to the hardware without being exposed to the system’s operating system, which is far more vulnerable to compromise than the actual hardware. The TPM binds the private key to the hardware, creating robust device authentication.

SDP Security with SDP Network: Public Key Infrastructure (PKI)

How do we ensure that we have the correct public key? This is the role of the public key infrastructure (PKI). There are many types of PKI, with certificate authorities (CA) being the most popular. In cryptography, a certificate authority is an entity that issues digital certificates.

A certificate is worth no more than a blank piece of paper unless it is somehow trusted. Trust is established by digitally signing the certificate to endorse its validity. It is the responsibility of the certificate authority to ensure all details of the certificate are correct before signing it. PKI is a framework that defines a set of roles and responsibilities used to distribute and validate public keys securely in an untrusted network.

For this, a PKI leverages a registration authority (RA). You may wonder what the difference between an RA and a CA is. The RA interacts with the subscribers to provide CA services. The RA is subsumed by the CA, which takes responsibility for all RA actions.

The registration authority accepts requests for digital certificates and authenticates the entity making the request. This binds the identity to the public key embedded in the certificate, cryptographically signed by the trusted 3rd party.

Not all certificate authorities are secure!

However, not all certificate authorities are bulletproof against attack. Back in 2011, DigiNotar suffered a security breach. The bad actor took complete control of all eight certificate-issuing servers and issued rogue certificates, some of which had not yet been identified. It is estimated that over 300,000 users had their private data exposed by rogue certificates.

Browsers immediately blacklisted DigiNotar’s certificates, but the incident highlights the issues of relying on a 3rd party. While public PKI is widely used on the internet to back X.509 certificates, it’s not recommended for zero-trust SDP, because you are still relying on a 3rd party for a critical task. For a zero-trust approach to networking and security, you should look to implement a private PKI system.

If you are not looking for a fully automated process, you could implement a time-based one-time password (TOTP). This allows for human control over the signing of the certificates. Remember that much trust must be placed in whoever is responsible for this step.
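For reference, a TOTP code can be computed with the standard library alone. The sketch below follows the HOTP/TOTP construction from RFC 4226 and RFC 6238 with HMAC-SHA1; the shared secret shown is the RFC's published test secret, and how the resulting code gates certificate signing is left to the surrounding workflow.

```python
import hashlib
import hmac
import struct
import time


def totp(secret, for_time=None, step=30, digits=6):
    """Compute a time-based one-time password (HMAC-SHA1 variant)."""
    for_time = time.time() if for_time is None else for_time
    counter = int(for_time // step)                  # number of elapsed time steps
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F                          # dynamic truncation offset
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


# Test secret from RFC 6238; a real secret would be random and kept private.
secret = b"12345678901234567890"
print(totp(secret))  # changes every 30 seconds
```

An operator approving a signing request would enter the current code, and the signing service would compare it against its own computation, usually allowing for a small amount of clock drift.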

SDP Closing Points:

– As businesses continue to face increasingly sophisticated cyber threats, the importance of implementing robust network security measures cannot be overstated. Software Defined Perimeter offers a comprehensive solution that addresses the limitations of traditional network architectures.

– By adopting SDP, organizations can enhance their security posture, improve network flexibility, simplify management, mitigate DDoS attacks, and meet regulatory requirements. Embracing this innovative approach to network security can safeguard sensitive data and provide peace of mind in an ever-evolving digital landscape.

– Organizations must adopt innovative security solutions to protect their valuable assets as cyber threats evolve. Software-defined perimeter offers a dynamic and user-centric approach to network security, providing enhanced protection against unauthorized access and data breaches.

– With enhanced security, granular access control, simplified network architecture, scalability, and regulatory compliance, SDP is gaining traction as a trusted security framework in today’s complex cybersecurity landscape. Embracing SDP can help organizations stay one step ahead of the ever-evolving threat landscape and safeguard their critical data and resources.

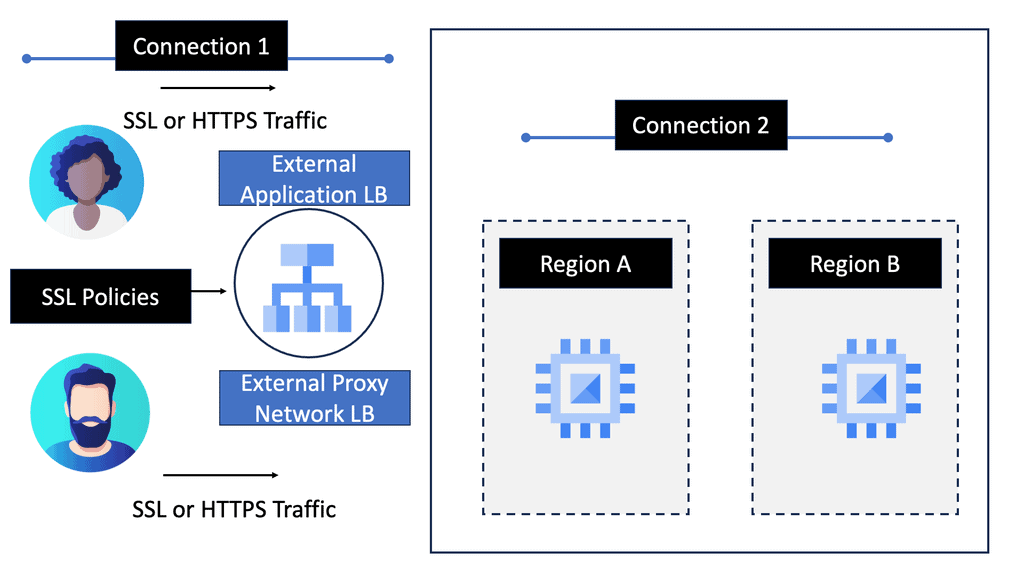

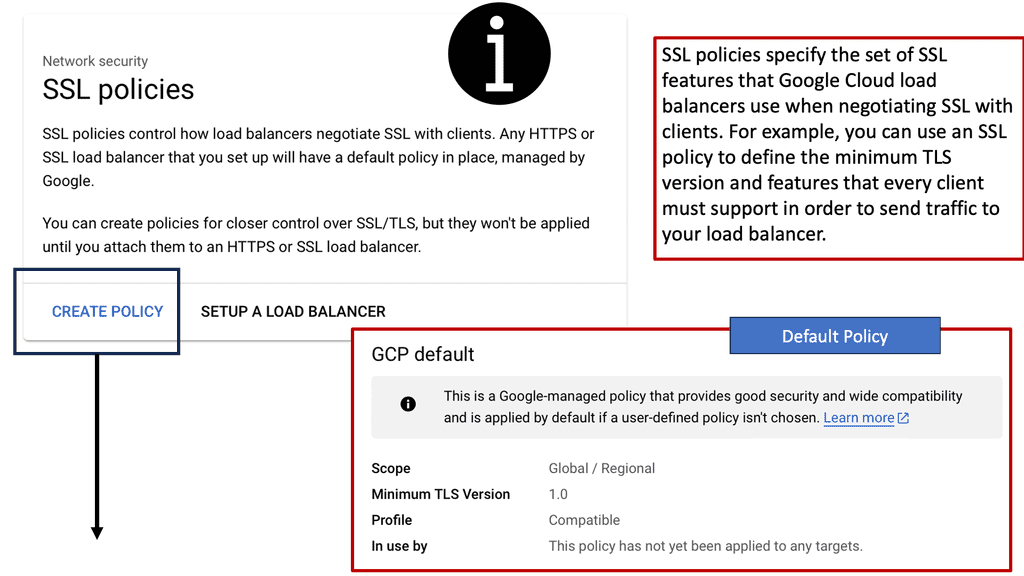

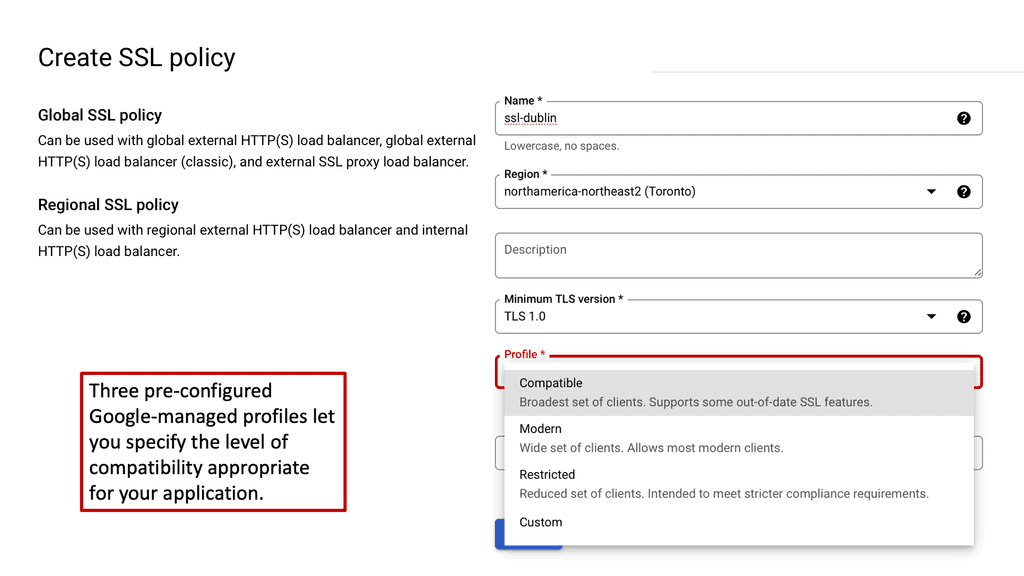

Example Technology: SSL Policies

**What Are SSL Policies?**

SSL policies are configurations that determine the security settings for SSL/TLS connections between clients and servers. These policies ensure that data is encrypted during transmission, protecting it from unauthorized access. On Google Cloud, SSL policies allow you to specify which SSL/TLS protocols and cipher suites can be used for your services. This flexibility enables you to balance security and performance based on your specific requirements.
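The same idea, restricting protocol versions and cipher suites, can be illustrated on the client side with Python's standard `ssl` module. This is an analogy rather than the Google Cloud SSL policy API, and the specific minimum version and cipher string are example choices.

```python
import ssl


def strict_client_context():
    """Build a TLS client context that refuses old protocol versions and weak ciphers."""
    ctx = ssl.create_default_context()            # certificate and hostname checks on
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # reject TLS 1.1 and earlier
    # Restrict TLS 1.2 suites to ECDHE key exchange with AES-GCM.
    # TLS 1.3 suites are configured separately by OpenSSL and are unaffected.
    ctx.set_ciphers("ECDHE+AESGCM")
    return ctx


ctx = strict_client_context()
print(ctx.minimum_version)
```

Tightening the allowed versions and suites improves security at the cost of compatibility with older clients or servers, which is the trade-off an SSL policy lets you tune.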

Closing Points on SDP Network

At its core, SDP operates on a zero-trust model, where network access is granted based on user identity and device verification rather than mere IP addresses. This ensures that each connection is authenticated before any access is granted. The process begins with a secure handshake between the user’s device and the SDP controller, which verifies the user’s identity against a predefined set of policies. Once authenticated, the user is granted access to specific network resources based solely on their role, ensuring a minimal access approach. This not only enhances security but also simplifies network management.

The adoption of SDP brings numerous benefits. Firstly, it significantly reduces the attack surface by making network resources invisible to unauthorized users. This means that potential attackers cannot even see the resources, let alone access them. Secondly, SDP provides a seamless and secure experience for users, as it adapts to their needs without compromising security. Additionally, SDP is highly scalable and can be easily integrated with existing security frameworks, making it a cost-effective solution for businesses of all sizes.

While the advantages of SDP are compelling, there are challenges to consider. Implementing SDP requires an initial investment in terms of time and resources to set up the infrastructure and train personnel. Organizations must also ensure that their identity and access management (IAM) systems are robust and capable of supporting SDP’s zero-trust model. Furthermore, as with any technology, staying updated with the latest developments and threats is crucial to maintaining a secure environment.

Summary: SDP Network

In today’s rapidly evolving digital landscape, the Software-Defined Perimeter (SDP) Network concept has emerged as a game-changer. This blog post aimed to delve into the intricacies of the SDP Network, its benefits, implementation, and the potential it holds for securing modern networks.

What is the SDP Network?

SDP Network, also known as a “Black Cloud,” is a revolutionary approach to network security. It creates a dynamic and invisible perimeter around the network, allowing only authorized users and devices to access critical resources. Unlike traditional security measures, the SDP Network offers granular control, enhanced visibility, and adaptive protection.

Key Components of SDP Network

To understand the functioning of the SDP Network, it’s crucial to comprehend its key components. These include:

1. Client Devices: The devices authorized users use to connect to the network.

2. SDP Controller: The central authority managing and enforcing security policies.

3. Zero Trust Architecture: This is the foundation of the SDP Network, which assumes that no user or device can be trusted by default.

4. Identity and Access Management: This system governs user authentication and authorization, ensuring only authorized individuals gain network access.

Implementing SDP Network

Implementing an SDP Network requires careful planning and execution. The process involves several steps, including:

1. Network Assessment: Evaluating the network infrastructure and identifying potential vulnerabilities.

2. Policy Definition: Establishing comprehensive security policies that dictate user access privileges, device authentication, and resource protection.

3. SDP Deployment: Implementing the SDP solution across the network infrastructure and seamlessly integrating it with existing security measures.

4. Continuous Monitoring: Regularly monitoring and analyzing network traffic, promptly identifying and mitigating potential threats.

Benefits of SDP Network

SDP Network offers a plethora of benefits when it comes to network security. Some notable advantages include:

1. Enhanced Security: The SDP Network adopts a zero-trust approach, significantly reducing the attack surface and minimizing the risk of unauthorized access and data breaches.

2. Improved Visibility: SDP Network provides real-time visibility into network traffic, allowing security teams to identify suspicious activities and respond proactively and quickly.

3. Simplified Management: With centralized control and policy enforcement, managing network security becomes more streamlined and efficient.

4. Scalability: SDP Network can quickly adapt to the evolving needs of modern networks, making it an ideal solution for organizations of all sizes.

Conclusion:

In conclusion, the SDP Network has emerged as a transformative solution, revolutionizing network security practices. Its ability to create an invisible perimeter, enforce strict access controls, and enhance visibility offers unparalleled protection against modern threats. As organizations strive to safeguard their sensitive data and critical resources, embracing the SDP Network becomes a crucial step toward a more secure future.
